ComfyUI Batch Processing: How I Automate 1000+ Image Generations

Learn how to batch process thousands of images in ComfyUI using the Queue system, API automation, CSV/JSON inputs, and Python scripts for overnight production runs.

Last month I had a client who needed 1,200 product mockups generated in three different styles. I quoted them a two-day turnaround, went to bed, and woke up to 1,247 finished images neatly organized in folders by style and product name. The total hands-on time was about 45 minutes of setup. The rest was ComfyUI doing what it does best when you stop clicking "Queue Prompt" manually and let automation take over.

If you've ever sat at your desk clicking that queue button hundreds of times, or worse, manually changing prompts one by one, this guide is going to change the way you work. I've spent the better part of a year refining my ComfyUI batch processing pipeline, and I'm going to walk you through everything from the built-in queue system to full API-driven automation with Python scripts that handle thousands of images while you sleep.

Quick Answer: ComfyUI batch processing at scale requires moving beyond the built-in queue system to API-based automation. Using Python scripts that feed prompts from CSV or JSON files to ComfyUI's API endpoint, you can automate 1,000+ image generations with custom file naming, organized output folders, error handling, and retry logic. The key components are the /prompt API endpoint, job-status monitoring (polling the /history endpoint or listening on the WebSocket), and a structured input format for variable prompts.

- The built-in Queue system handles up to 50-100 images efficiently, but API automation is essential for 1,000+ generations

- Python scripts feeding CSV/JSON prompt data to ComfyUI's API endpoint enable fully automated batch runs

- Proper error handling and retry logic prevent losing progress during long overnight batch jobs

- Organized output with structured file naming saves hours of manual sorting after generation completes

- Scheduling batch runs during off-peak hours on cloud GPUs can cut costs by 30-50%

Why Does Manual ComfyUI Batch Processing Break Down at Scale?

Most ComfyUI users start their batch processing journey by clicking "Queue Prompt" multiple times or using the built-in batch count feature. That approach works fine when you need 10 or 20 variations. It falls apart completely when you need hundreds or thousands of images with different prompts, settings, or parameters.

I learned this the hard way during my first big project. A print-on-demand shop needed 500 unique designs, each with a different text overlay and color scheme. I tried queuing them manually by adjusting the prompt each time. After about 30 minutes and 40 images, I realized I'd accidentally duplicated several prompts, skipped others entirely, and had no way to track which ones had completed successfully. That was the night I started building my automation scripts.

The fundamental problem is that ComfyUI's UI was designed for interactive creative work, not production-line processing. There's nothing wrong with that. It's an incredible tool for experimentation and fine-tuning. But when you need to go from "I found the perfect settings" to "now give me 1,000 variations," you need a different approach entirely.

Here's what breaks down at scale:

- No prompt tracking. You can't easily verify which prompts have been processed and which haven't.

- No automatic file naming. Everything dumps into the output folder with sequential numbers, making it impossible to match outputs to inputs.

- No error recovery. If ComfyUI crashes at image 347, you have to figure out where to restart manually.

- No variable substitution. You can't automatically swap out parts of a prompt without manually editing the workflow each time.

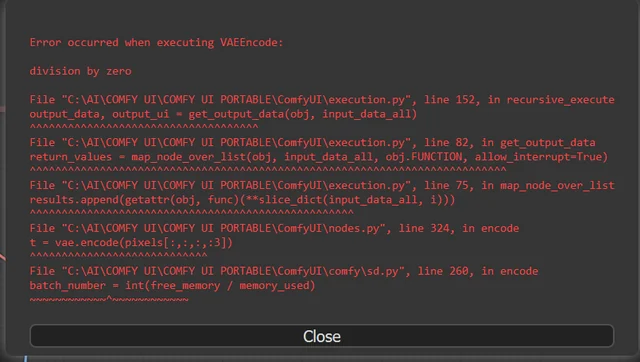

- VRAM leaks. Extended sessions sometimes accumulate memory that doesn't get released, causing crashes around the 200-300 image mark.

If you're new to ComfyUI batch processing in general, I'd recommend starting with the basics in our ComfyUI batch processing workflow guide before diving into the advanced automation covered here.

Understanding ComfyUI's Queue System and Its Limits

Before we jump into API automation, it's worth understanding what the built-in queue system can and can't do, because for smaller jobs it's still the fastest way to work.

ComfyUI's queue system operates on a simple FIFO (first in, first out) model. When you click "Queue Prompt," it adds the current workflow state to the queue. You can queue multiple jobs by clicking the button repeatedly or by setting the batch count in the Extra Options panel. Each queued job is independent, meaning ComfyUI captures the entire workflow state at the moment you click queue.

This has an important implication that trips people up. If you change a prompt and then queue 10 jobs, all 10 will use the new prompt. But if you queued 5 jobs, then changed the prompt, and queued 5 more, the first 5 use the old prompt and the last 5 use the new one. The queue captures state at queue time, not execution time.

For straightforward batch work, here's what I recommend:

- Set the batch_size on the Empty Latent Image node to generate multiple images per queue execution (4-8 depending on VRAM).

- Use the Auto Queue feature if available in your ComfyUI version to automatically re-queue after completion.

- Set the KSampler's control_after_generate option to randomize so each queued run gets a new seed. ComfyUI doesn't use the -1 convention for random seeds.

- Use the Queue Front/Back options to prioritize urgent jobs.

The practical ceiling for manual queue-based batching is around 50-100 images. Beyond that, you'll spend more time managing the queue than doing creative work. And you'll have zero ability to dynamically change prompts across the batch without sitting at your computer the whole time.

The ComfyUI queue panel works well for small batches but becomes a bottleneck above 100 images.

How Do You Set Up API-Based Batch Processing?

This is where things get interesting. ComfyUI runs a local HTTP server, and every single thing you do in the UI can be done programmatically through its API. When you click "Queue Prompt" in the browser, the UI is literally just sending a POST request to http://127.0.0.1:8188/prompt with your workflow encoded as JSON. We can do the same thing from a Python script, and that opens up everything.

I remember the first time I got API-based batching working. I had been up until 2 AM fighting with the workflow JSON format, and when I finally ran the script and watched images start appearing in my output folder without me touching the UI, it felt like unlocking a cheat code. Suddenly, I wasn't limited by how fast I could click or how long I could stay awake.

Exporting Your Workflow as API JSON

First, you need your workflow in API format. In ComfyUI, enable Dev Mode in the settings panel, then click "Save (API Format)." This gives you a JSON file where each node is represented by its ID number with all its inputs and outputs spelled out explicitly.

The API JSON format looks different from the standard workflow format. Instead of visual layout information, it's a flat dictionary of nodes keyed by their ID number. Here's a simplified example:

```json
{
  "3": {
    "class_type": "KSampler",
    "inputs": {
      "seed": 42,
      "steps": 20,
      "cfg": 7.5,
      "sampler_name": "euler",
      "scheduler": "normal",
      "denoise": 1.0,
      "model": ["4", 0],
      "positive": ["6", 0],
      "negative": ["7", 0],
      "latent_image": ["5", 0]
    }
  },
  "6": {
    "class_type": "CLIPTextEncode",
    "inputs": {
      "text": "a professional photo of a red sports car",
      "clip": ["4", 1]
    }
  }
}
```

The key insight is that you can modify any value in this JSON before sending it to the API. Want to change the prompt? Update the text field in node 6. Want a different seed? Change node 3's seed value. Want to swap models? Change the checkpoint loader node's input.
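A small helper keeps those edits in one place and protects the base workflow from accidental mutation. This is a minimal sketch: `set_input` is a hypothetical name, and node IDs like "6" and "3" are whatever your exported file actually uses.

```python
import copy


def set_input(workflow, node_id, input_name, value):
    """Return a copy of an API-format workflow with one node input replaced.

    Hypothetical helper: node_id and input_name must match your exported JSON.
    """
    if node_id not in workflow:
        raise KeyError(f"Node {node_id!r} not found in workflow")
    updated = copy.deepcopy(workflow)  # never mutate the shared base workflow
    updated[node_id]["inputs"][input_name] = value
    return updated


base = {
    "3": {"class_type": "KSampler", "inputs": {"seed": 42, "steps": 20}},
    "6": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "a professional photo of a red sports car", "clip": ["4", 1]}},
}

job = set_input(base, "6", "text", "a professional photo of a blue pickup truck")
job = set_input(job, "3", "seed", 1234)
print(job["6"]["inputs"]["text"])   # a professional photo of a blue pickup truck
print(base["3"]["inputs"]["seed"])  # 42 (base workflow unchanged)
```

Copying on every call costs a little time, but for a workflow of a few dozen nodes it's negligible next to a generation.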

The Core Python Batch Script

Here's the Python script I use as my foundation for all batch processing jobs. I've refined this over dozens of projects and hundreds of thousands of generated images:

```python
import json
import urllib.request
import time
import os
import csv
import random
from datetime import datetime

COMFYUI_URL = "http://127.0.0.1:8188"


def queue_prompt(workflow_json):
    """Send a prompt to ComfyUI and return the prompt ID."""
    data = json.dumps({"prompt": workflow_json}).encode("utf-8")
    req = urllib.request.Request(
        f"{COMFYUI_URL}/prompt",
        data=data,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as response:
        result = json.loads(response.read())
    return result.get("prompt_id")


def check_progress(prompt_id):
    """Poll the history endpoint until the job completes."""
    while True:
        try:
            with urllib.request.urlopen(
                f"{COMFYUI_URL}/history/{prompt_id}"
            ) as response:
                history = json.loads(response.read())
            # The entry only appears once ComfyUI has finished the job
            if prompt_id in history:
                return history[prompt_id]
        except Exception:
            pass  # server busy or restarting; try again on the next poll
        time.sleep(1)


def load_workflow(filepath):
    """Load the API-format workflow JSON."""
    with open(filepath, "r", encoding="utf-8") as f:
        return json.load(f)


def batch_generate(workflow_path, csv_path, output_dir,
                   prompt_node_id="6", seed_node_id="3"):
    """Run batch generation from a CSV of prompts and return a summary dict."""
    # output_dir holds the batch log; images still land in ComfyUI's output folder
    os.makedirs(output_dir, exist_ok=True)
    base_workflow = load_workflow(workflow_path)

    with open(csv_path, "r", encoding="utf-8", newline="") as f:
        rows = list(csv.DictReader(f))

    total = len(rows)
    completed = 0
    failed = []
    log_path = os.path.join(output_dir, "batch_log.json")

    for i, row in enumerate(rows):
        # Deep copy so every job starts from a clean base workflow
        workflow = json.loads(json.dumps(base_workflow))
        # Set the prompt text
        workflow[prompt_node_id]["inputs"]["text"] = row["prompt"]
        # Randomize seed
        workflow[seed_node_id]["inputs"]["seed"] = random.randint(0, 2**32 - 1)

        print(f"[{i+1}/{total}] Generating: {row['prompt'][:60]}...")
        try:
            prompt_id = queue_prompt(workflow)
            check_progress(prompt_id)
            completed += 1
            print(f"  Completed ({completed}/{total})")
        except Exception as e:
            print(f"  FAILED: {e}")
            failed.append({"index": i, "prompt": row["prompt"], "error": str(e)})

    # Write summary log
    summary = {
        "total": total,
        "completed": completed,
        "failed": len(failed),
        "failures": failed,
        "timestamp": datetime.now().isoformat(),
    }
    with open(log_path, "w", encoding="utf-8") as f:
        json.dump(summary, f, indent=2)

    print(f"\nBatch complete: {completed}/{total} succeeded")
    if failed:
        print(f"  {len(failed)} failures logged to {log_path}")
    return summary


if __name__ == "__main__":
    batch_generate(
        workflow_path="workflow_api.json",
        csv_path="prompts.csv",
        output_dir="./batch_output",
        prompt_node_id="6",
        seed_node_id="3",
    )
```

This script is intentionally simple. It uses only standard library modules so you don't need to install anything extra. The check_progress function polls the history endpoint until ComfyUI reports the job as complete, then moves on to the next prompt.

Hot take: most people over-engineer their batch scripts with async WebSocket connections and complex threading. For 99% of use cases, simple sequential processing with polling is more reliable and easier to debug. I've tried the fancy async approaches, and they introduce race conditions and connection management headaches that aren't worth it unless you're running multiple ComfyUI instances in parallel.

How Should You Structure Input Data for Variable Prompts?

The CSV approach works well for simple prompt variations, but real production work often requires changing multiple parameters simultaneously. You might need different prompts, different aspect ratios, different models, or different ControlNet settings for each image.

I use two formats depending on the complexity of the job. For straightforward text variations, CSV is perfect. For complex multi-parameter jobs, JSON gives you much more flexibility.

CSV Format for Simple Prompt Batches

Your CSV file needs a header row and one row per image. At minimum, include a prompt column, but I always add an id column for tracking and a negative column for negative prompts:

```csv
id,prompt,negative,style
prod_001,"professional product photo of wireless headphones on marble surface","blurry, low quality, cartoon","product"
prod_002,"professional product photo of smartwatch on dark background","blurry, low quality, cartoon","product"
prod_003,"lifestyle photo of person wearing wireless earbuds at gym","blurry, low quality, cartoon, deformed hands","lifestyle"
```

JSON Format for Complex Multi-Parameter Batches

When you need to change model checkpoints, resolution, CFG values, or other node-specific parameters per image, JSON is the way to go:

```json
{
  "defaults": {
    "steps": 25,
    "cfg": 7.5,
    "width": 1024,
    "height": 1024,
    "negative_prompt": "blurry, low quality, artifacts"
  },
  "jobs": [
    {
      "id": "hero_banner_01",
      "prompt": "epic fantasy landscape with floating islands",
      "width": 1920,
      "height": 1080,
      "cfg": 8.0,
      "steps": 35
    },
    {
      "id": "social_square_01",
      "prompt": "minimalist product flat lay on white background",
      "width": 1080,
      "height": 1080
    },
    {
      "id": "story_vertical_01",
      "prompt": "atmospheric portrait with neon city background",
      "width": 768,
      "height": 1344,
      "cfg": 6.5
    }
  ]
}
```

The defaults section provides fallback values, and each job can override any parameter. Here's the enhanced script that handles JSON input:

```python
def batch_from_json(workflow_path, json_path, output_dir):
    """Run batch generation from a JSON config with per-job overrides.

    Reuses load_workflow, queue_prompt, check_progress and the imports
    from the core script above.
    """
    os.makedirs(output_dir, exist_ok=True)
    base_workflow = load_workflow(workflow_path)

    with open(json_path, "r", encoding="utf-8") as f:
        config = json.load(f)

    defaults = config.get("defaults", {})
    jobs = config["jobs"]

    # Maps config keys to (node_id, section, input_name) in the API workflow
    node_map = {
        "prompt": ("6", "inputs", "text"),
        "negative_prompt": ("7", "inputs", "text"),
        "steps": ("3", "inputs", "steps"),
        "cfg": ("3", "inputs", "cfg"),
        "width": ("5", "inputs", "width"),
        "height": ("5", "inputs", "height"),
    }

    for i, job in enumerate(jobs):
        workflow = json.loads(json.dumps(base_workflow))
        merged = {**defaults, **job}  # job values override defaults

        for param, (node_id, section, key) in node_map.items():
            if param in merged:
                workflow[node_id][section][key] = merged[param]

        workflow["3"]["inputs"]["seed"] = random.randint(0, 2**32 - 1)
        job_id = merged.get("id", f"job_{i:04d}")
        print(f"[{i+1}/{len(jobs)}] {job_id}: {merged.get('prompt', '')[:50]}...")

        try:
            prompt_id = queue_prompt(workflow)
            check_progress(prompt_id)
            print("  Done.")
        except Exception as e:
            print(f"  Failed: {e}")
```

The node_map dictionary is where you connect your parameter names to the actual node IDs in your workflow. You'll need to update these IDs to match your specific workflow. Open your API JSON file and find the node numbers for your KSampler, CLIP Text Encode, and Empty Latent Image nodes.

A typical batch processing run showing progress tracking and completion status for each job.
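Rather than reading node IDs by eye, a couple of small helpers can look them up. A sketch under assumptions: the function names are hypothetical, and the class_type strings (KSampler, CLIPTextEncode, EmptyLatentImage) are the ones core ComfyUI nodes use in API exports. Because the positive and negative prompts are both CLIPTextEncode nodes, the second helper tells them apart by following the sampler's links.

```python
import json


def find_nodes(workflow, class_type):
    """Return IDs of every node with the given class_type, in numeric order."""
    ids = [node_id for node_id, node in workflow.items()
           if node.get("class_type") == class_type]
    # Shorter IDs first, so "3" sorts before "10"
    return sorted(ids, key=lambda n: (len(n), n))


def prompt_node_ids(workflow, sampler_id):
    """Follow a KSampler's positive/negative links to its two text-encode nodes."""
    inputs = workflow[sampler_id]["inputs"]
    # Links are [source_node_id, output_index] pairs
    return inputs["positive"][0], inputs["negative"][0]


# Trimmed-down API export for illustration; load your real file with json.load()
workflow = json.loads("""{
  "3": {"class_type": "KSampler",
        "inputs": {"seed": 42, "positive": ["6", 0], "negative": ["7", 0], "latent_image": ["5", 0]}},
  "5": {"class_type": "EmptyLatentImage", "inputs": {"width": 1024, "height": 1024, "batch_size": 1}},
  "6": {"class_type": "CLIPTextEncode", "inputs": {"text": "positive prompt", "clip": ["4", 1]}},
  "7": {"class_type": "CLIPTextEncode", "inputs": {"text": "negative prompt", "clip": ["4", 1]}}
}""")

for class_type in ("KSampler", "CLIPTextEncode", "EmptyLatentImage"):
    print(class_type, find_nodes(workflow, class_type))
print(prompt_node_ids(workflow, "3"))  # ('6', '7')
```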


What Is the Best Way to Handle Errors During Long Batch Runs?

Error handling is the difference between waking up to 1,000 finished images and waking up to 47 finished images and a crashed ComfyUI instance. I've had both experiences, and trust me, the second one ruins your morning.

The most common failures during batch processing are VRAM out-of-memory errors, ComfyUI server crashes, network timeouts (for remote instances), and corrupted outputs from interrupted generations. Your automation needs to handle all of these gracefully.

Here's my battle-tested error handling module that I've built up after losing several overnight runs to preventable crashes:

```python
import json
import time
import urllib.request

# COMFYUI_URL, queue_prompt and check_progress come from the core script

MAX_RETRIES = 3
RETRY_DELAY = 10  # seconds
HEALTH_CHECK_INTERVAL = 30  # seconds
VRAM_CLEAR_INTERVAL = 50  # clear VRAM every N images


def clear_vram():
    """Tell ComfyUI to unload models and free cached VRAM."""
    try:
        req = urllib.request.Request(
            f"{COMFYUI_URL}/free",
            data=json.dumps({"unload_models": True, "free_memory": True}).encode("utf-8"),
            headers={"Content-Type": "application/json"},
        )
        urllib.request.urlopen(req, timeout=30).close()
        time.sleep(3)  # give the server a moment to release memory
    except Exception:
        pass


def is_server_alive():
    """Check if ComfyUI is responding."""
    try:
        urllib.request.urlopen(f"{COMFYUI_URL}/system_stats", timeout=5).close()
        return True
    except Exception:
        return False


def wait_for_server(timeout=120):
    """Wait for ComfyUI to become available."""
    start = time.time()
    while time.time() - start < timeout:
        if is_server_alive():
            return True
        time.sleep(5)
    return False


def robust_generate(workflow, job_id, retries=MAX_RETRIES):
    """Generate with automatic retry and error recovery."""
    for attempt in range(retries):
        if not is_server_alive():
            print("  Server down. Waiting for recovery...")
            if not wait_for_server():
                return {"status": "server_dead", "attempts": attempt + 1}
        try:
            prompt_id = queue_prompt(workflow)
            result = check_progress(prompt_id)
            # Verify output exists
            outputs = result.get("outputs", {})
            if not outputs:
                raise RuntimeError("No outputs in result")
            return {"status": "success", "outputs": outputs, "attempts": attempt + 1}
        except Exception as e:
            print(f"  Attempt {attempt + 1}/{retries} failed: {e}")
            if attempt < retries - 1:
                clear_vram()
                time.sleep(RETRY_DELAY)
    return {"status": "failed", "attempts": retries}
```

There are a few critical patterns here worth explaining. The clear_vram function hits ComfyUI's /free endpoint, which unloads models from GPU memory. I call this every 50 images or so during long runs. Without it, some models slowly leak VRAM until you get an OOM crash around image 200-300.

The wait_for_server function handles the scenario where ComfyUI actually crashes and gets restarted (either manually or by a process manager like supervisord). Instead of killing your entire batch, the script pauses and waits for the server to come back.

One thing I started doing after a particularly frustrating lost batch is maintaining a checkpoint file. After each successful generation, I append the job ID to a checkpoint file. When the script starts, it reads this file and skips already-completed jobs. This way, if anything goes wrong, you just re-run the same script and it picks up where it left off:

```python
import os


def load_checkpoint(checkpoint_path):
    """Load set of completed job IDs."""
    if not os.path.exists(checkpoint_path):
        return set()
    with open(checkpoint_path, "r", encoding="utf-8") as f:
        return set(line.strip() for line in f if line.strip())


def save_checkpoint(checkpoint_path, job_id):
    """Append a completed job ID to the checkpoint file."""
    with open(checkpoint_path, "a", encoding="utf-8") as f:
        f.write(f"{job_id}\n")
```

Hot take: if you're running batch jobs over 500 images and you don't have checkpoint/resume functionality, you're gambling. It's not a question of if ComfyUI will crash during a long run, it's when. The VRAM management in most custom nodes isn't designed for marathon sessions, and even stock ComfyUI can hit edge cases during extended runs.
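Here is one way the checkpoint file, the retry wrapper, and periodic VRAM clearing could fit into a single loop. It's a sketch under assumptions: `run_resumable` is a hypothetical name, every job is assumed to carry a unique "id", and the generate and clear steps are passed in as callables (in a real run you'd pass something wrapping robust_generate and clear_vram) so the loop can be exercised without a running server.

```python
import os
import tempfile


def run_resumable(jobs, generate, clear_vram, checkpoint_path, clear_every=50):
    """Run jobs in order, skipping any whose ID is already in the checkpoint file.

    generate(job) must return a dict whose "status" is "success" on success
    (robust_generate's return value has this shape). clear_vram() is called
    after every `clear_every` generation attempts in this session.
    """
    # Same file format as load_checkpoint/save_checkpoint: one job ID per line
    done = set()
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path, "r", encoding="utf-8") as f:
            done = {line.strip() for line in f if line.strip()}

    attempted = 0
    failures = []
    for job in jobs:
        if job["id"] in done:
            continue  # finished in an earlier run
        result = generate(job)
        attempted += 1
        if result.get("status") == "success":
            # Record only confirmed successes so failures are retried next run
            with open(checkpoint_path, "a", encoding="utf-8") as f:
                f.write(f"{job['id']}\n")
        else:
            failures.append(job["id"])
        if attempted % clear_every == 0:
            clear_vram()
    return attempted, failures


# Demo with stand-in callables, so no ComfyUI server is needed
jobs = [{"id": f"prod_{n:03d}"} for n in range(1, 6)]
clears = []


def flaky_generate(job):
    return {"status": "failed" if job["id"] == "prod_003" else "success"}


def always_succeed(job):
    return {"status": "success"}


with tempfile.TemporaryDirectory() as tmp:
    ckpt = os.path.join(tmp, "completed.txt")
    first = run_resumable(jobs, flaky_generate, lambda: clears.append(1), ckpt, clear_every=2)
    # A rerun skips the four finished jobs and retries only prod_003
    second = run_resumable(jobs, always_succeed, lambda: None, ckpt)

print(first, second)  # (5, ['prod_003']) (1, [])
```

Writing the checkpoint line only after a confirmed success is the important design choice: a crash mid-job leaves that job unrecorded, so the next run simply retries it.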

Automated File Naming and Output Organization

By default, ComfyUI dumps everything into a single output folder with names like ComfyUI_00001_.png. That's fine for experimentation but useless for production work. When you're generating 1,000 images for a client, you need to know exactly which prompt produced which image, and they need to be organized logically.

The trick is to use ComfyUI's SaveImage node prefix feature through the API. You can set the filename prefix dynamically for each generation:

```python
def set_output_naming(workflow, save_node_id, job_id, subfolder=""):
    """Configure the SaveImage node for organized output."""
    if save_node_id in workflow:
        # A "/" in the prefix makes ComfyUI write into a subfolder of its output folder
        prefix = f"{subfolder}/{job_id}" if subfolder else job_id
        workflow[save_node_id]["inputs"]["filename_prefix"] = prefix
    return workflow
```

For the product mockup project I mentioned earlier, I organized outputs into subfolders by style and named each file with the product ID:

```text
output/
  product_photos/
    headphones_001_00001_.png
    headphones_002_00001_.png
    smartwatch_001_00001_.png
  lifestyle/
    gym_earbuds_001_00001_.png
    office_laptop_001_00001_.png
  flat_lay/
    accessories_spread_001_00001_.png
```

The client could immediately find any image they needed, and I could trace any output back to its source prompt in my CSV. This level of organization isn't optional for professional work. It's what separates hobbyist generation from production-grade delivery. If you're building this kind of pipeline, you'll find that turning ComfyUI into a production API is the natural next step.

At Apatero.com, we've built a lot of this organization logic into our workflows so you get clean, structured output without writing custom scripts. But if you're running your own ComfyUI instance, these techniques are essential.

How Do You Schedule Overnight Batch Runs?

Running large batches overnight is the most cost-effective way to handle production workloads. GPU time during off-peak hours on cloud platforms like RunPod is significantly cheaper, and you're not tying up your workstation during productive hours.

I schedule most of my big batch runs to start at 11 PM and run through the night. The script starts processing, sends me a Slack notification when it begins, and sends another one when it finishes or if it encounters a critical failure. Here's how I set that up:


Linux/Mac Scheduling with Cron

```bash
# Edit your crontab
crontab -e

# Run batch job at 11 PM every weekday
0 23 * * 1-5 cd /home/user/comfyui-batch && python3 batch_run.py >> batch.log 2>&1
```

Adding Notifications

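I use a simple webhook approach for notifications. The payload below uses Slack's {"text": ...} format, so it works with Slack incoming webhooks and any service that accepts the same shape (Discord accepts it if you append /slack to the webhook URL):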

```python
import json
import time
import urllib.request


def send_notification(webhook_url, message):
    """Send a notification via webhook."""
    data = json.dumps({"text": message}).encode("utf-8")
    req = urllib.request.Request(
        webhook_url,
        data=data,
        headers={"Content-Type": "application/json"},
    )
    try:
        urllib.request.urlopen(req, timeout=10).close()
    except Exception:
        pass  # Don't let notification failures kill the batch


def batch_with_notifications(webhook_url, **kwargs):
    """Wrapper that sends start/end notifications."""
    send_notification(webhook_url, f"Batch started: {kwargs.get('csv_path', 'unknown')}")
    start = time.time()
    try:
        # Expects batch_generate (core script) to return its summary dict
        results = batch_generate(**kwargs)
        elapsed = (time.time() - start) / 3600
        send_notification(
            webhook_url,
            f"Batch complete in {elapsed:.1f}h. "
            f"{results['completed']}/{results['total']} succeeded.",
        )
    except Exception as e:
        send_notification(webhook_url, f"BATCH FAILED: {e}")
        raise
```

Cloud GPU Cost Optimization

If you're running on cloud GPUs, timing matters. I've tracked my RunPod costs across different scheduling patterns and the savings are substantial:

- Peak hours (9 AM to 6 PM): Full price, high contention for GPUs

- Evening (6 PM to midnight): 10-20% cheaper, moderate availability

- Overnight (midnight to 6 AM): 30-50% cheaper, excellent GPU availability

- Weekends: Similar to overnight pricing with better availability

For a 1,000 image batch on an A5000 that takes roughly 4-5 hours, the same job can cost $6-10 at peak pricing versus $3-5 overnight. That adds up fast if you're running batches regularly.

Advanced Techniques for Production Scale

Once you have the basics working, there are several techniques that separate a good batch pipeline from a great one. These are the refinements I've added over months of running production jobs.

Parallel Instance Processing

If you have access to multiple GPUs (or multiple cloud instances), you can split your batch across them. The approach is simple: divide your job list into chunks and run a separate script instance targeting each ComfyUI server:

```python
def split_batch(jobs, num_workers):
    """Split job list into roughly equal chunks."""
    chunk_size, remainder = divmod(len(jobs), num_workers)
    chunks = []
    start = 0
    for i in range(num_workers):
        # The first `remainder` chunks take one extra job each
        end = start + chunk_size + (1 if i < remainder else 0)
        chunks.append(jobs[start:end])
        start = end
    return chunks
```

I ran a test last year with four A5000 instances processing a 2,000 image batch. The total time dropped from about 8 hours on a single GPU to just over 2 hours across four. Linear scaling isn't quite achievable because of overhead, but it's close enough that parallelization is always worth it for large jobs.
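If you'd rather drive every instance from one script instead of launching several, a thread pool can hand each chunk to its own server. This is a sketch under assumptions: `run_parallel` is a hypothetical name, the second port is a placeholder for wherever your extra instances listen, and `run_chunk(server_url, jobs)` is a function you supply that processes its jobs sequentially against one server. The core script reads a global COMFYUI_URL, so a real run_chunk would need to pass the URL through instead. Round-robin slicing (jobs[i::n]) stands in for split_batch only to keep the block self-contained.

```python
from concurrent.futures import ThreadPoolExecutor


def run_parallel(jobs, server_urls, run_chunk):
    """Give each ComfyUI server its own slice of jobs and run them concurrently.

    run_chunk(server_url, chunk) is supplied by you; it should process its
    chunk sequentially against that one server and return a result.
    """
    n = len(server_urls)
    # Round-robin split: server i gets jobs i, i+n, i+2n, ...
    chunks = [jobs[i::n] for i in range(n)]
    with ThreadPoolExecutor(max_workers=n) as pool:
        futures = [pool.submit(run_chunk, url, chunk)
                   for url, chunk in zip(server_urls, chunks)]
        # result() re-raises any exception raised inside a worker
        return [f.result() for f in futures]


# Demo with a stand-in worker that just counts its jobs
servers = ["http://127.0.0.1:8188", "http://127.0.0.1:8189"]


def count_jobs(url, chunk):
    return url, len(chunk)


print(run_parallel(list(range(5)), servers, count_jobs))
# [('http://127.0.0.1:8188', 3), ('http://127.0.0.1:8189', 2)]
```

Each worker talks to a different server and shares no mutable state with the others, which sidesteps the race conditions that make a single-server async setup painful.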

Dynamic Quality Control

One technique I started using recently is automatic quality checking during batch runs. After each image generates, the script checks the output for common artifacts (solid black images, completely white images, extremely low file sizes that indicate failed generations):

```python
import os


def validate_output(image_path, min_size_kb=10, max_size_kb=50000):
    """Basic quality validation for generated images."""
    if not os.path.exists(image_path):
        return False, "File not found"
    size_kb = os.path.getsize(image_path) / 1024
    # Near-empty files usually mean a failed or blank generation
    if size_kb < min_size_kb:
        return False, f"File too small ({size_kb:.1f}KB)"
    if size_kb > max_size_kb:
        return False, f"File too large ({size_kb:.1f}KB)"
    return True, "OK"
```

This is a simple check, but it catches the most common failure modes. You can extend it with actual image analysis using PIL to check for uniform color (indicating a blank image) or other anomalies.
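A sketch of that PIL extension, assuming Pillow is installed (`pip install pillow`). `getextrema()` reports the minimum and maximum value of each channel, so a solid black or solid white image has its minimum equal to its maximum in every channel. `is_uniform_color` is a hypothetical name, and the tolerance of 2 is an arbitrary starting point rather than a tuned value.

```python
import os
import tempfile

from PIL import Image


def is_uniform_color(image_path, tolerance=2):
    """True if every RGB channel spans at most `tolerance` levels (e.g. solid black)."""
    with Image.open(image_path) as img:
        # Convert so getextrema() always returns one (min, max) pair per channel
        extrema = img.convert("RGB").getextrema()
    return all(hi - lo <= tolerance for lo, hi in extrema)


with tempfile.TemporaryDirectory() as tmp:
    blank = os.path.join(tmp, "blank.png")
    Image.new("RGB", (64, 64), (0, 0, 0)).save(blank)

    normal = os.path.join(tmp, "normal.png")
    img = Image.new("RGB", (64, 64), (0, 0, 0))
    img.putpixel((10, 10), (255, 200, 100))
    img.save(normal)

    blank_flag = is_uniform_color(blank)    # True
    normal_flag = is_uniform_color(normal)  # False

print(blank_flag, normal_flag)
```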

Template Workflows with Variable Slots

For recurring batch jobs with similar structures, I create template workflows with clearly marked variable slots. Instead of modifying random node IDs, I use a naming convention in the workflow that makes the script self-documenting:

```json
{
  "prompt_template": "professional photo of {product} on {background}, studio lighting",
  "variables": {
    "product": ["wireless headphones", "smartwatch", "laptop stand"],
    "background": ["white marble", "dark wood", "gradient gray"]
  },
  "combinations": "all"
}
```

The script then generates the cartesian product of all variables (or specific combinations if you specify them), producing every possible combination automatically. For the example above, that's 9 images (3 products times 3 backgrounds) from a single template. Scale that up with more variables and you can generate hundreds of unique combinations from a compact config file.
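The "all" case maps directly onto `itertools.product`. This sketch covers only that case (an explicit list of combinations is left out), and `expand_template` is a hypothetical name. Because it fills placeholders with `str.format`, a template containing literal braces would need them doubled.

```python
import itertools


def expand_template(config):
    """Yield (variables, prompt) for every combination of the template variables."""
    names = list(config["variables"])
    value_lists = [config["variables"][name] for name in names]
    for combo in itertools.product(*value_lists):
        values = dict(zip(names, combo))
        yield values, config["prompt_template"].format(**values)


config = {
    "prompt_template": "professional photo of {product} on {background}, studio lighting",
    "variables": {
        "product": ["wireless headphones", "smartwatch", "laptop stand"],
        "background": ["white marble", "dark wood", "gradient gray"],
    },
    "combinations": "all",
}

jobs = list(expand_template(config))
print(len(jobs))   # 9
print(jobs[0][1])  # professional photo of wireless headphones on white marble, studio lighting
```

Keeping the variable values alongside each prompt makes it easy to build job IDs or subfolder names from them later.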

This approach is particularly powerful for e-commerce and product photography workflows. The 25 ComfyUI tips and tricks article covers some complementary optimization techniques that work well alongside batch processing.

A well-organized batch output structure makes it easy to deliver results to clients and trace any image back to its source prompt.


Real-World Performance Numbers

I want to share some actual performance data because most guides give you the theory without the numbers. These benchmarks come from my real production runs over the past year:

| Setup | Images/Hour | Cost/1000 Images | Reliability |
|---|---|---|---|
| RTX 3090, SDXL, 25 steps | ~120 | $0 (local) | 95%+ |
| A5000 (RunPod), SDXL, 25 steps | ~140 | ~$4.50 | 98%+ |
| A100 (RunPod), SDXL, 25 steps | ~220 | ~$8.00 | 99%+ |
| RTX 4090, Flux Dev, 28 steps | ~40 | $0 (local) | 93%+ |
| A100 (RunPod), Flux Dev, 28 steps | ~70 | ~$12.00 | 97%+ |
| 4x A5000 parallel, SDXL | ~520 | ~$18.00 | 96%+ |

The reliability column reflects how many images successfully complete without intervention. The lower numbers for local GPUs come from the fact that desktop systems are more likely to run into thermal throttling, other processes competing for resources, and the occasional display driver crash.

Notice that the A100 isn't always the best value. For SDXL workloads, the A5000 offers better cost per image because the A100's extra power doesn't fully translate to proportionally faster SDXL generation. The A100 really shines with larger models like Flux where its 80GB VRAM prevents the memory swapping that slows down smaller GPUs.

If you want a managed solution that handles all of this infrastructure, Apatero.com provides production-ready batch processing without the DevOps overhead. But for those who want full control, running your own pipeline is absolutely viable with the scripts and techniques covered here.

Putting It All Together: A Complete Batch Processing Pipeline

Let me walk you through how I actually set up a real project from start to finish. This is the exact workflow I followed for that 1,200 product mockup job.

Step 1: Build and test the workflow in ComfyUI's UI. I generated 5-10 test images manually, tweaking prompts, CFG, steps, and sampler until the output quality was exactly right. This creative phase shouldn't be automated.

Step 2: Export the workflow in API format. Saved it as product_mockup_api.json and noted the node IDs for the prompt (node 6), negative prompt (node 7), KSampler (node 3), and SaveImage (node 9).

Step 3: Prepare the input data. Created a CSV with 1,200 rows, each containing a product name, prompt text, style category, and negative prompt. I generated this CSV from the client's product database with a simple Python script.

Step 4: Configure the batch script. Set the node IDs, output directory structure, checkpoint file location, and notification webhook.

Step 5: Test with a small batch. Ran 10 images first to verify everything worked. Caught two issues: a wrong node ID and a typo in my prompt template.

Step 6: Launch the full batch. Started at 10:30 PM, received a Slack notification at 11:15 PM confirming the first 50 images were clean, and went to bed.

Step 7: Morning review. Woke up to a "Batch complete: 1247/1247 succeeded" notification. The extra 47 images were from a second pass I'd configured for alternate color variations.

The entire pipeline, from first test image to 1,247 delivered outputs, took about 14 hours of compute time and 45 minutes of my actual work. That's the power of proper automation.
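Step 3's CSV came from a short script run against the client's product database. That script isn't shown here, so this is a hypothetical stand-in: the field names sku, name, surface, and style are assumptions, and the records are hard-coded where a database query would go. It writes the same id,prompt,negative,style layout as the CSV example earlier.

```python
import csv
import io

NEGATIVE = "blurry, low quality, cartoon"


def write_prompt_csv(products, out_file):
    """Write one CSV row per product in the id,prompt,negative,style layout."""
    writer = csv.DictWriter(out_file, fieldnames=["id", "prompt", "negative", "style"])
    writer.writeheader()
    for p in products:
        writer.writerow({
            "id": p["sku"],
            "prompt": f"professional product photo of {p['name']} on {p['surface']}",
            "negative": NEGATIVE,
            "style": p["style"],
        })


# Hypothetical records standing in for rows from a product database
products = [
    {"sku": "prod_001", "name": "wireless headphones", "surface": "marble surface", "style": "product"},
    {"sku": "prod_002", "name": "smartwatch", "surface": "dark background", "style": "product"},
]
buf = io.StringIO()
write_prompt_csv(products, buf)
print(buf.getvalue())
```

When writing a real file instead of a StringIO, open it with newline="" as the csv module documentation recommends; DictWriter handles quoting of fields that contain commas, like the negative prompt.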

Troubleshooting Common Batch Processing Issues

Even with robust error handling, you'll run into issues. Here are the problems I see most frequently and how to solve them:

ComfyUI runs out of VRAM after 100-200 images. Add the clear_vram() call every 50 images. Some custom nodes don't properly release GPU memory between runs, and the /free endpoint forces cleanup.

Images are all identical despite random seeds. Make sure you're deep-copying the workflow JSON for each job. If you build your job list by modifying and appending the same dictionary object, every entry is a reference to that one object, so all jobs end up with the last seed you set. Use json.loads(json.dumps(workflow)) or copy.deepcopy(workflow) for a proper deep copy.
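The difference is easy to see in isolation. This toy example uses a stripped-down node dict rather than a real workflow: appending the same dict three times leaves three references to one object, while copying per job gives each job its own seed.

```python
import copy

base = {"3": {"class_type": "KSampler", "inputs": {"seed": 0}}}

# Bug: every entry in the list is the same dict object
shared = []
for seed in (11, 22, 33):
    base["3"]["inputs"]["seed"] = seed
    shared.append(base)

# Fix: copy the base workflow before editing each job
copied = []
for seed in (11, 22, 33):
    job = copy.deepcopy(base)  # json.loads(json.dumps(base)) works too
    job["3"]["inputs"]["seed"] = seed
    copied.append(job)

print([w["3"]["inputs"]["seed"] for w in shared])  # [33, 33, 33]
print([w["3"]["inputs"]["seed"] for w in copied])  # [11, 22, 33]
```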

The script hangs indefinitely on one image. Add a timeout to your check_progress function. If a job hasn't completed in 5 minutes (or whatever's appropriate for your workflow), mark it as failed and move on.
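A sketch of check_progress with a deadline. The 300-second default mirrors the 5-minute suggestion above; the `fetch` parameter is an addition (not part of the original script) so the polling loop can be tested with a fake instead of a live server. Keep in mind that a timed-out job may still be running on the server; ComfyUI's POST /interrupt endpoint stops the job currently executing.

```python
import json
import time
import urllib.request

COMFYUI_URL = "http://127.0.0.1:8188"


def fetch_history(prompt_id):
    """GET /history/<prompt_id> from ComfyUI and return the parsed JSON."""
    with urllib.request.urlopen(f"{COMFYUI_URL}/history/{prompt_id}", timeout=10) as resp:
        return json.loads(resp.read())


def check_progress(prompt_id, timeout=300, poll_interval=1.0, fetch=fetch_history):
    """Poll until the job shows up in history, or raise TimeoutError."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            history = fetch(prompt_id)
            if prompt_id in history:
                return history[prompt_id]
        except (OSError, ValueError):
            pass  # connection refused/reset or a garbled reply: keep polling
        time.sleep(poll_interval)
    raise TimeoutError(f"Job {prompt_id} did not finish within {timeout}s")


# Demo with a fake fetcher that reports completion on the third poll
calls = {"n": 0}


def fake_fetch(prompt_id):
    calls["n"] += 1
    return {prompt_id: {"outputs": {"9": {}}}} if calls["n"] >= 3 else {}


print(check_progress("abc", timeout=5, poll_interval=0.01, fetch=fake_fetch))
# {'outputs': {'9': {}}}
```

The TimeoutError propagates to the caller's existing except block, so the batch loop logs the job as failed and moves on.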

Output file names don't match your prefix. ComfyUI appends a counter to your prefix. If your prefix is product_001, the output will be product_001_00001_.png. The counter resets per prefix, so each job gets a clean _00001_ suffix.

The API returns a 400 error. Your workflow JSON has an invalid node connection or missing input. Test the workflow in the UI first. The most common cause is referencing a custom node that isn't installed on the target ComfyUI instance.
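The 400 response body usually says exactly which node failed, but urllib hides it inside the HTTPError. A sketch under assumptions: it expects the body to look like {"error": {...}, "node_errors": {node_id: {"class_type": ..., "errors": [...]}}}, which is the shape I've seen from ComfyUI's validation errors; the function names are hypothetical and the sample body is hand-written. A missing custom node may show up only in the top-level error message with no node_errors entries.

```python
import json
import urllib.error
import urllib.request


def describe_validation_error(body):
    """Turn the JSON body of a /prompt 400 response into readable lines."""
    data = json.loads(body)
    lines = [data.get("error", {}).get("message", "Unknown error")]
    for node_id, info in data.get("node_errors", {}).items():
        for err in info.get("errors", []):
            detail = f"{err.get('message', '')} {err.get('details', '')}".strip()
            lines.append(f"node {node_id} ({info.get('class_type', '?')}): {detail}")
    return lines


def queue_prompt_verbose(url, workflow):
    """POST a workflow to <url>/prompt; print ComfyUI's explanation on HTTP 400."""
    req = urllib.request.Request(
        f"{url}/prompt",
        data=json.dumps({"prompt": workflow}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    try:
        with urllib.request.urlopen(req) as resp:
            return json.loads(resp.read())["prompt_id"]
    except urllib.error.HTTPError as e:
        if e.code == 400:
            for line in describe_validation_error(e.read()):
                print(" ", line)
        raise


# Hand-written sample body for illustration
sample = b'''{"error": {"type": "prompt_outputs_failed_validation",
  "message": "Prompt outputs failed validation"},
 "node_errors": {"6": {"class_type": "CLIPTextEncode",
   "errors": [{"message": "Required input is missing", "details": "clip"}]}}}'''
for line in describe_validation_error(sample):
    print(line)
# Prompt outputs failed validation
# node 6 (CLIPTextEncode): Required input is missing clip
```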

FAQ

How Many Images Can ComfyUI Batch Process in One Session?

There's no hard limit built into ComfyUI, but practical limits exist. With proper VRAM management (clearing every 50-100 images) and error recovery, I've run sessions of 5,000+ images without major issues. The key is the checkpoint/resume system so that any crash doesn't lose progress.

Does Batch Processing Affect Image Quality Compared to Manual Generation?

No. The API sends identical parameters to the same processing pipeline. With the same seed, settings, and hardware, an image generated through the API should match one generated through the UI pixel for pixel; the PNG files themselves can differ slightly because the UI also embeds its graph-format workflow in the image metadata. Quality differences only occur if your script modifies parameters unintentionally.

Can I Batch Process with ControlNet or IP-Adapter Inputs?

Yes, but it requires additional setup. Your script needs to handle input image paths for each job, setting the appropriate LoadImage node's input to point to the correct reference image. I recommend organizing your input images in a folder with consistent naming that maps to your CSV/JSON job definitions.
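Here is a sketch of that mapping. It assumes each job's reference image is named <job_id>.png and already sits in ComfyUI's input folder, and that node "10" is the LoadImage node feeding your ControlNet or IP-Adapter. Both the node ID and the folder path are placeholders; replace them with your own.

```python
import json
from pathlib import Path

# Placeholders: adjust to your exported workflow and install location.
LOAD_IMAGE_NODE = "10"
INPUT_DIR = Path("ComfyUI/input")


def attach_reference(workflow: dict, job_id: str, node_id: str = LOAD_IMAGE_NODE,
                     input_dir: Path = INPUT_DIR) -> dict:
    """Return a copy of the workflow whose LoadImage node points at this job's image."""
    image_name = f"{job_id}.png"
    if not (input_dir / image_name).is_file():
        # Fail before queueing instead of getting a validation error from the server.
        raise FileNotFoundError(f"missing reference image for job {job_id}: {input_dir / image_name}")
    job = json.loads(json.dumps(workflow))  # deep copy so jobs don't share state
    job[node_id]["inputs"]["image"] = image_name  # name relative to the input folder
    return job
```

LoadImage expects a file name relative to the input folder, not an absolute path. If the images live on a different machine than ComfyUI, upload them first; ComfyUI exposes an /upload/image endpoint for this.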

What's the Best GPU for Large-Scale ComfyUI Batch Processing?

For SDXL workloads, the RTX 4090 offers the best price-to-performance ratio for local processing. For cloud, the A5000 is the sweet spot for cost-per-image. If you're running Flux or other large models, the A100 80GB is worth the premium because it avoids VRAM-related slowdowns.

How Do I Batch Process with Different Models for Different Images?

Include the checkpoint filename in your CSV/JSON config and add logic that updates the CheckpointLoaderSimple node's ckpt_name input for each job. The value is a filename relative to ComfyUI's models/checkpoints folder, not a full path. Model switching takes 10-30 seconds per swap, so group your jobs by model to minimize loading time.
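You can do the grouping once, before submission. This sketch assumes each job dict has a hypothetical "checkpoint" key and that node "4" is the CheckpointLoaderSimple node in your exported workflow. set_checkpoint() edits in place, so call it on a per-job deep copy, never on the shared template.

```python
from itertools import groupby
from typing import Dict, Iterator, List, Tuple


def group_by_checkpoint(jobs: List[Dict]) -> Iterator[Tuple[str, List[Dict]]]:
    """Yield (checkpoint, jobs) batches so each model is loaded once per run."""
    # groupby only merges adjacent items, so sort by checkpoint first.
    ordered = sorted(jobs, key=lambda job: job["checkpoint"])
    for checkpoint, batch in groupby(ordered, key=lambda job: job["checkpoint"]):
        yield checkpoint, list(batch)


def set_checkpoint(workflow: Dict, checkpoint: str, node_id: str = "4") -> None:
    """Point the (assumed) CheckpointLoaderSimple node at this batch's model."""
    workflow[node_id]["inputs"]["ckpt_name"] = checkpoint
```

Python's sort is stable, so jobs that share a model keep their original CSV order within each batch.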

Can I Run Batch Processing on a Remote ComfyUI Instance?

Absolutely. Point the COMFYUI_URL variable at your remote server's IP and port. If you launch ComfyUI yourself, start it with the --listen flag, because by default it only accepts connections from localhost. If you're running on RunPod or another cloud platform, make sure the API port (8188 by default) is exposed. Our guide on turning ComfyUI into a production API covers the remote setup in detail.

How Do I Handle Rate Limiting When Batch Processing Through Cloud APIs?

If you're using a hosted ComfyUI service rather than your own instance, add a delay between requests (1-3 seconds is usually sufficient). Most self-hosted instances don't have rate limiting, but cloud services might throttle rapid requests.
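A fixed delay works. A small throttle that sleeps only for whatever is left of the interval avoids wasted time when a request is itself slow. This is a sketch: Throttle is a hypothetical class, and the 2-second default is simply the middle of the 1-3 second range above.

```python
import time
from typing import Callable, Optional


class Throttle:
    """Enforce a minimum gap between requests to a hosted ComfyUI service."""

    def __init__(self, min_interval: float = 2.0,
                 clock: Callable[[], float] = time.monotonic,
                 sleep: Callable[[float], None] = time.sleep) -> None:
        self.min_interval = min_interval
        self.clock = clock
        self.sleep = sleep
        self._last: Optional[float] = None  # time of the previous request

    def wait(self) -> None:
        """Block just long enough that calls are at least min_interval apart."""
        now = self.clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self.sleep(remaining)
                now = self.clock()
        self._last = now
```

Call throttle.wait() immediately before each submission.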

Is It Possible to Preview Images During a Batch Run?

Yes. The history endpoint returns output file information. You can add a step to your script that copies or symlinks the latest output to a "preview" folder, or set up a simple web server that displays the most recent generation. I usually just keep the ComfyUI UI open in a browser tab while the API script runs.
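Pulling file information out of a history entry is mostly dictionary walking. This sketch assumes the history entry is nested as outputs, then node ID, then an images list, where each image record carries filename, subfolder, and type. It builds either a /view URL served by ComfyUI or a local copy in a preview folder. The helper names and the latest.png target are hypothetical.

```python
import shutil
from pathlib import Path
from typing import Dict, List
from urllib.parse import urlencode


def output_images(history_entry: Dict) -> List[Dict]:
    """Collect image records ({filename, subfolder, type}) from one /history entry."""
    images = []
    for node_output in history_entry.get("outputs", {}).values():
        images.extend(node_output.get("images", []))
    return images


def view_url(base_url: str, image: Dict) -> str:
    """Build a /view URL that serves the image straight from the ComfyUI server."""
    query = urlencode({"filename": image["filename"],
                       "subfolder": image.get("subfolder", ""),
                       "type": image.get("type", "output")})
    return f"{base_url}/view?{query}"


def copy_to_preview(image: Dict, output_dir: Path, preview_dir: Path) -> Path:
    """Copy the file to preview_dir/latest.png (only works when the output folder is local)."""
    source = output_dir / image.get("subfolder", "") / image["filename"]
    preview_dir.mkdir(parents=True, exist_ok=True)
    target = preview_dir / "latest.png"
    shutil.copyfile(source, target)
    return target
```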

How Do I Resume a Failed Batch from Where It Stopped?

Use the checkpoint file approach described in this guide: each completed job ID gets appended to a text file. When the script starts, it loads this file and skips any job IDs already present. This gives you automatic resume capability. At most, the job that was in flight when the script stopped runs again.
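Here is a minimal version of that checkpoint file, sketched with hypothetical helper names and one job ID per line. Because each finished job appends its own line, a crash loses nothing that was already recorded.

```python
from pathlib import Path
from typing import Iterable, List, Set


def load_completed(checkpoint: Path) -> Set[str]:
    """Read job IDs recorded by earlier runs; a missing file means a fresh start."""
    if not checkpoint.exists():
        return set()
    text = checkpoint.read_text(encoding="utf-8")
    return {line.strip() for line in text.splitlines() if line.strip()}


def mark_completed(checkpoint: Path, job_id: str) -> None:
    """Append one finished job ID; earlier lines are never rewritten."""
    with checkpoint.open("a", encoding="utf-8") as fh:
        fh.write(f"{job_id}\n")


def pending_jobs(job_ids: Iterable[str], checkpoint: Path) -> List[str]:
    """Return the job IDs that still need to run, preserving input order."""
    done = load_completed(checkpoint)
    return [job_id for job_id in job_ids if job_id not in done]
```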

What's the Maximum Practical Batch Size Before I Should Split into Multiple Runs?

I split batches at around 2,000-3,000 images per run. Beyond that, the log files get unwieldy and the risk of accumulating issues increases. For very large jobs (10,000+ images), I split into chunks of 1,000 and run them as separate scheduled jobs with independent checkpoints. On Apatero.com, batch jobs are automatically chunked and distributed, which removes this consideration entirely.
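Chunks are easy to plan up front. This sketch gives each chunk its own checkpoint file, so you can schedule and resume each chunk independently. plan_chunks() and the chunk_NNN_completed.txt naming are hypothetical.

```python
from pathlib import Path
from typing import Iterator, List, Sequence, Tuple

CHUNK_SIZE = 1000  # chunk size for very large jobs


def plan_chunks(jobs: Sequence[dict], run_dir: Path,
                chunk_size: int = CHUNK_SIZE) -> Iterator[Tuple[int, List[dict], Path]]:
    """Yield (chunk index, jobs, checkpoint path) so each chunk resumes on its own."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    for start in range(0, len(jobs), chunk_size):
        index = start // chunk_size
        checkpoint = run_dir / f"chunk_{index:03d}_completed.txt"
        yield index, list(jobs[start:start + chunk_size]), checkpoint
```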

Final Thoughts

Batch processing in ComfyUI transforms it from a creative tool into a production powerhouse. The built-in queue works for small jobs, but once you need hundreds or thousands of images, API-based automation with proper error handling, checkpoint recovery, and organized output is the only way to work efficiently.

The scripts and techniques in this guide represent about a year of iteration and real production use. Start with the basic batch script, add error handling once you've confirmed the core flow works, and then layer on features like notifications, quality validation, and parallel processing as your needs grow.

The most important piece of advice I can give is this: always test with 10 images before launching 1,000. Every time I've skipped that step to save a few minutes, I've ended up wasting hours fixing issues that would have been obvious in a small test run. Take the time to verify, and then let automation do the heavy lifting while you do something more interesting than clicking buttons.
