FLUX GGUF Quantization: Run FLUX Models on 8GB VRAM Cards

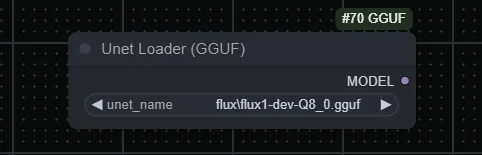

A complete guide to running FLUX image generation models on GPUs with 8GB of VRAM using GGUF quantization. It covers the Q4, Q5, and Q8 quantization levels, ComfyUI setup, quality comparisons, and optimization tips.