Running Open Source LLMs Locally: Complete Hardware and Setup Guide 2026

Everything you need to run LLMs on your own machine. GPU requirements, RAM needs, quantization explained, Ollama and llama.cpp setup, plus budget and high-end build recommendations.

Running open source LLMs locally has gone from a niche hobby for ML engineers to something any developer or power user can set up in about fifteen minutes. The hardware requirements have dropped, the tooling has matured, and the models themselves have gotten shockingly good. If you've been paying for API calls or cloud inference and wondering whether your own machine could handle the job, you're probably closer than you think.

I've spent the last year testing every major open source model on hardware ranging from a $300 used workstation to a dual-GPU setup that costs more than my first car. The results have been eye-opening. Some configurations punch way above their weight class, and others are a waste of money. This guide covers everything I've learned so you can skip the expensive trial and error.

Quick Answer: For most users, a system with 32GB of RAM and an NVIDIA RTX 4060 Ti 16GB can comfortably run 7B-13B parameter models at conversational speeds. For 70B models, you'll need at least 48GB of VRAM (or enough system RAM for CPU inference). Ollama is the easiest way to get started, and Q4_K_M quantization offers the best balance of quality and performance.

- 7B models run well on 8GB VRAM GPUs, 13B models need 12-16GB, and 70B+ models require 48GB+ VRAM or CPU offloading

- Quantization (Q4_K_M specifically) reduces model sizes by 50-75% with minimal quality loss

- Ollama makes local LLM deployment as simple as a single terminal command

- CPU inference with llama.cpp is viable for smaller models if you have 64GB+ of fast DDR5 RAM

- A budget build around $500-700 can run most 7B-13B models at usable speeds

- Apple Silicon Macs with unified memory are surprisingly competitive for local inference

Why Should You Run LLMs Locally Instead of Using Cloud APIs?

Before diving into hardware specs, it's worth understanding why you'd bother with local inference at all. Cloud APIs from OpenAI, Anthropic, and Google are convenient and performant. But local inference solves several real problems that matter more than most people realize.

Privacy is the big one. If you're working with proprietary code, customer data, medical records, or legal documents, sending that context to a third-party API might violate compliance requirements or just make you uncomfortable. I started running models locally after realizing I was pasting entire codebases into cloud APIs without thinking twice about it. Running locally means your data never leaves your machine.

Cost is the other major factor. I tracked my API spending for three months and it averaged around $180/month for development and testing work. A one-time hardware investment of $800-1200 pays for itself in under a year and keeps working indefinitely. For teams using AI coding assistants, local models can handle the bulk of routine queries while reserving cloud APIs for tasks that genuinely need frontier-model capabilities.
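The payback math is easy to rerun with your own numbers. Here is a minimal bash sketch. `breakeven_months` is a hypothetical helper, and the optional monthly running cost (electricity, for instance) is an assumption you should replace with your own estimate.

```bash
# Months until a one-time hardware purchase equals cumulative API spend.
# Optional third argument: monthly running cost such as electricity (assumption).
breakeven_months() {
  local hardware="$1" api_monthly="$2" extra_monthly="${3:-0}"
  awk -v h="$hardware" -v a="$api_monthly" -v e="$extra_monthly" 'BEGIN {
    saving = a - e                      # net monthly saving from going local
    if (saving <= 0) { print "never"; exit }
    printf "%.1f\n", h / saving
  }'
}

breakeven_months 800 180        # $800 build vs $180/month of API spend
breakeven_months 1200 180 15    # $1,200 build, plus $15/month electricity
```

With the figures above, an $800 machine breaks even in about 4.4 months. A $1,200 machine that also adds $15/month of electricity takes about 7.3 months, still well under a year.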

Here's my honest take: for 80% of daily tasks, a well-tuned local 13B model produces results that are close enough to GPT-4 level output that the difference doesn't matter. Code completion, summarization, drafting emails, explaining concepts, brainstorming. The gap only becomes noticeable on complex multi-step reasoning or highly specialized domains.

Other reasons to go local include:

- Offline access. Your AI assistant works on planes, in coffee shops with bad wifi, and during API outages.

- No rate limits. Run as many queries as your hardware can handle.

- Customization. Fine-tune models on your own data without paying per-token training costs.

- Speed for small models. A local 7B model on a decent GPU responds faster than most API round-trips.

- Learning. Understanding how inference actually works makes you a better AI practitioner.

At Apatero.com, we've been exploring how local models integrate into creative and development workflows, and the results keep surprising us. The tooling ecosystem has reached a point where local LLMs are genuinely practical, not just a novelty.

What Hardware Do You Actually Need? GPU, RAM, and CPU Requirements

This is where most guides get hand-wavy, so I'm going to give you concrete numbers based on actual testing. The hardware you need depends almost entirely on two factors: the model size you want to run and the speed you find acceptable.

GPU Requirements by Model Size

Your GPU's VRAM is the single most important spec for local LLM inference. The model weights need to fit in VRAM for full GPU acceleration. If they don't, you're either doing partial offloading (slower) or pure CPU inference (much slower).

Here's what I've measured across different configurations:

7B Parameter Models (e.g., Mistral 7B, Llama 3.1 8B, Qwen 2.5 7B)

- Q4_K_M quantization: ~4.5GB VRAM needed

- Q8_0 quantization: ~8GB VRAM needed

- FP16 (full precision): ~14GB VRAM needed

- Recommended minimum: 8GB VRAM GPU

- Tokens/second on RTX 4060 8GB: 45-60 tok/s

13B Parameter Models (e.g., Llama 2 13B, CodeLlama 13B)

- Q4_K_M: ~8GB VRAM

- Q8_0: ~14GB VRAM

- FP16: ~26GB VRAM

- Recommended minimum: 12-16GB VRAM GPU

- Tokens/second on RTX 4070 Ti 12GB: 30-40 tok/s

34B Parameter Models (e.g., CodeLlama 34B, Yi 34B, Qwen 2.5 32B)

- Q4_K_M: ~20GB VRAM

- Q8_0: ~36GB VRAM

- Recommended minimum: 24GB VRAM GPU

- Tokens/second on RTX 4090 24GB: 20-30 tok/s (Q4)

70B Parameter Models (e.g., Llama 3.1 70B, Qwen 2.5 72B)

- Q4_K_M: ~40GB VRAM

- Q8_0: ~75GB VRAM

- Recommended minimum: 48GB+ VRAM (2x 24GB GPUs or professional card)

- Tokens/second on 2x RTX 3090: 10-18 tok/s (Q4)

VRAM requirements scale roughly linearly with model parameters, but quantization dramatically reduces the footprint.
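The numbers above follow from simple arithmetic: weight size ≈ parameters × bits per weight ÷ 8. The bash sketch below assumes approximate average bits-per-weight values for each GGUF quantization. Mixed "K" quants vary a little from model to model. It also ignores the KV cache and runtime overhead, so treat the result as a floor, not a guarantee.

```bash
# Approximate bits per weight for common GGUF quant types.
# Assumption: these are rough averages; K-quants mix precisions, so real files vary.
bits_per_weight() {
  case "$1" in
    Q3_K_M) echo 3.9 ;;
    Q4_K_M) echo 4.85 ;;
    Q5_K_M) echo 5.7 ;;
    Q6_K)   echo 6.6 ;;
    Q8_0)   echo 8.5 ;;
    FP16)   echo 16 ;;
    *) echo "unknown quant type: $1" >&2; return 1 ;;
  esac
}

# Weight size in GB = parameters (billions) * bits per weight / 8.
# Excludes KV cache and runtime overhead, so it is a lower bound on VRAM.
weights_gb() {
  local params_b="$1" quant="$2" bpw
  bpw=$(bits_per_weight "$quant") || return 1
  awk -v p="$params_b" -v b="$bpw" 'BEGIN { printf "%.1f\n", p * b / 8 }'
}

for q in Q4_K_M Q8_0 FP16; do
  echo "13B $q: $(weights_gb 13 "$q") GB"
done
```

For a 13B model this prints about 7.9, 13.8 and 26.0 GB, in line with the 13B figures listed earlier.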

System RAM Requirements

Your system RAM matters more than you might expect, especially if you're doing any CPU offloading. Here's my rule of thumb: you want at least twice the model size in system RAM for comfortable operation with headroom for your OS and other applications.

- Running 7B models (GPU-only): 16GB RAM minimum, 32GB recommended

- Running 13B models (GPU-only): 32GB RAM minimum

- Running 34B models (partial offload): 64GB RAM minimum

- Running 70B models (CPU inference): 128GB RAM strongly recommended

- Any model with CPU offloading: Fast DDR5 RAM makes a measurable difference

I learned this the hard way. I had 16GB of RAM and tried running a 13B model that technically fit in my GPU's VRAM. The system became unusable because the OS, browser, and other apps were fighting for the remaining system memory. Adding another 16GB stick solved the problem completely and cost about $40.

CPU Considerations

If you're running models entirely on your GPU, the CPU barely matters. Even an older i5 or Ryzen 5 will work fine. But if you're doing CPU inference or CPU-GPU split inference, your CPU becomes critical.

For pure CPU inference:

- Core count matters. More cores = more parallel processing. A 16-core CPU can roughly double prompt-processing speed over an 8-core, though token generation gains less because it is limited by memory bandwidth.

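- AVX-512 support helps. CPUs with AVX-512 instructions (AMD Zen 4 and newer, plus many Intel workstation and server parts) get 15-30% better performance with llama.cpp. Intel's recent consumer desktop and laptop chips don't support it.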

- Memory bandwidth is king. DDR5 at high speeds (5600MHz+) makes a bigger difference than raw clock speed for CPU inference.
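The "memory bandwidth is king" point can be turned into a quick ceiling estimate. During generation, a dense model reads essentially all of its weights for every token, so tokens per second can't exceed bandwidth divided by model size. The rough bash sketch below assumes theoretical peak bandwidth (8 bytes per 64-bit channel × transfer rate × channels) and a ~4.9GB Llama 3.1 8B Q4_K_M file. Real throughput lands noticeably below this ceiling.

```bash
# Theoretical peak bandwidth (GB/s) = MT/s * 8 bytes per 64-bit channel * channels / 1000
peak_bw_gbs() {
  awk -v mts="$1" -v ch="$2" 'BEGIN { printf "%.1f\n", mts * 8 * ch / 1000 }'
}

# Generation ceiling (tok/s) for a dense model: each new token reads roughly
# every weight once, so speed <= bandwidth / model size in GB.
gen_ceiling_tps() {
  awk -v bw="$1" -v gb="$2" 'BEGIN { printf "%.1f\n", bw / gb }'
}

bw=$(peak_bw_gbs 6000 2)     # dual-channel DDR5-6000
gen_ceiling_tps "$bw" 4.9    # ~4.9GB Llama 3.1 8B Q4_K_M file (approximate)
```

Dual-channel DDR5-6000 works out to about 96 GB/s, which caps an 8B Q4_K_M model at roughly 19-20 tok/s. The same math explains why extra cores stop helping generation once bandwidth is saturated.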

Hot take: CPU inference is underrated in 2026. With a modern 16-core CPU and 64GB of DDR5-6000, you can run a 13B Q4 model at 15-20 tokens per second. That's not fast, but it's completely usable for development work. And you don't need to buy an expensive GPU at all.

How Does Quantization Work, and Which Level Should You Choose?

Quantization is the single most important concept for local LLM deployment, and it's simpler than it sounds. Full-precision models store each parameter as a 16-bit floating point number. Quantization reduces that precision to 8-bit, 4-bit, or even lower, which shrinks the model and speeds up inference at the cost of some accuracy.

Think of it like audio quality. A FLAC file is technically better than a 320kbps MP3, but most people can't hear the difference. Similarly, a Q4_K_M quantized model produces output that's nearly indistinguishable from the full-precision version for most tasks.
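To make the idea concrete, here is a toy version of 8-bit block quantization, loosely modeled on llama.cpp's Q8_0. Each block shares one scale (the largest absolute value divided by 127), and every weight is stored as a rounded integer multiple of that scale. This is a simplified sketch, not llama.cpp's code. The real format uses fixed 32-weight blocks and stores the scale in FP16, and the K-quants (like Q4_K_M) add further structure on top.

```bash
# Toy symmetric 8-bit block quantization in the spirit of Q8_0 (simplified:
# the "block" is whatever numbers you pass, and the scale stays a plain float).
quantize_block() {
  awk -v vals="$*" 'BEGIN {
    n = split(vals, x, " ")
    amax = 0
    for (i = 1; i <= n; i++) {                 # find the largest magnitude
      a = (x[i] < 0) ? -x[i] : x[i]
      if (a > amax) amax = a
    }
    d = amax / 127                             # one shared scale per block
    qs = "q:"; ds = "deq:"
    for (i = 1; i <= n; i++) {
      v = (d > 0) ? x[i] / d : 0
      q = (v < 0) ? int(v - 0.5) : int(v + 0.5)   # round to nearest int8 value
      qs = qs " " q
      ds = ds " " sprintf("%.4f", q * d)          # value the model actually uses
    }
    print qs
    print ds
    printf "scale: %.6f\n", d
  }'
}

quantize_block 0.12 -0.5 0.33 0.01
```

The dequantized values land within half a scale step of the originals (0.12 comes back as 0.1181). That small, evenly spread error is why 8-bit quantization is nearly lossless. At 4 bits, each block gets only 16 levels instead of 255, so the steps, and the error, grow accordingly.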

Quantization Levels Explained

Here are the common quantization formats you'll encounter, listed from smallest/fastest to largest/most accurate:

- Q2_K: Extremely aggressive quantization. 2-bit precision. Models are tiny but quality suffers noticeably. Only useful if you're severely VRAM-constrained.

- Q3_K_M: 3-bit with medium quality adjustments. Noticeable quality degradation on complex reasoning tasks. Fine for simple conversations.

- Q4_K_M: The sweet spot. 4-bit with medium quality optimizations. This is what I recommend for almost everyone. Quality loss is minimal and the size reduction is dramatic.

- Q4_K_S: Slightly smaller than Q4_K_M with marginally lower quality. Choose this if you're just barely over your VRAM limit with K_M.

- Q5_K_M: 5-bit. Slightly better quality than Q4, but models are 20-25% larger. Worth it if you have the VRAM headroom.

- Q6_K: 6-bit. Very close to full precision quality. Good for professional/critical applications.

- Q8_0: 8-bit. Essentially no perceptible quality loss. Models are roughly twice the size of Q4 versions.

- FP16: Full 16-bit precision. The reference quality level. Only practical on high-end hardware.

I ran a blind test with five developer friends. I gave them outputs from a 13B model at Q4_K_M, Q8_0, and FP16 for twenty different coding tasks. Nobody could reliably distinguish between Q4_K_M and FP16. The differences only showed up on particularly tricky logic puzzles, and even then, Q4_K_M got the right answer about 90% of the time when FP16 did.

How to Choose Your Quantization Level

My recommendation is straightforward:

- Start with Q4_K_M. It works for the vast majority of use cases.

- If you have VRAM to spare, try Q5_K_M or Q6_K and see if you notice improvement for your specific tasks.

- Only drop to Q3_K_M if Q4_K_M doesn't fit in your VRAM and upgrading isn't an option.

- Use Q8_0 or FP16 only for benchmarking, fine-tuning, or applications where maximum accuracy matters.
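These rules boil down to "take the highest-precision quant that fits, with some headroom." Here is a minimal bash sketch of that rule. `pick_quant`, the approximate bits-per-weight values, and the default 2GB reserve for KV cache and overhead are all assumptions to adjust for your setup. Q8_0 is left out to match the advice above.

```bash
# Hypothetical helper: highest-precision quant (Q6_K down to Q3_K_M) whose
# weights fit in VRAM after reserving headroom for KV cache and overhead.
# Bits-per-weight values are rough averages (assumption), not exact file sizes.
pick_quant() {
  local params_b="$1" vram_gb="$2" reserve_gb="${3:-2}"
  awk -v p="$params_b" -v v="$vram_gb" -v r="$reserve_gb" 'BEGIN {
    n = split("Q6_K:6.6 Q5_K_M:5.7 Q4_K_M:4.85 Q3_K_M:3.9", opts, " ")
    for (i = 1; i <= n; i++) {
      split(opts[i], kv, ":")
      size = p * kv[2] / 8                     # estimated weight size in GB
      if (size <= v - r) { printf "%s (%.1f GB)\n", kv[1], size; exit }
    }
    print "none: consider CPU offload or a bigger GPU"
  }'
}

pick_quant 8 8     # 8B model on an 8GB card
pick_quant 34 24   # 34B model on a 24GB card
pick_quant 70 24   # 70B model on a single 24GB card
```

Under these assumptions, an 8B model on an 8GB card lands on Q5_K_M and a 34B model on a 24GB card lands on Q4_K_M. A 70B model doesn't fit on a single 24GB card at any of these levels.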

How Do You Set Up Ollama for Local LLM Inference?

Ollama has become the de facto standard for running local LLMs, and for good reason. It wraps llama.cpp in a user-friendly interface with a Docker-like model management system. If you've ever used Docker, you'll feel right at home.

Installation

Installation is a single command on Linux and a regular installer download on macOS and Windows:

# Linux
curl -fsSL https://ollama.com/install.sh | sh

# macOS and Windows
# Download the installer from ollama.com/download

# Verify installation
ollama --version

Pulling and Running Models

Once installed, running a model is as simple as:

# Pull and run Llama 3.1 8B
ollama run llama3.1

# Pull a specific quantization
ollama run llama3.1:8b-instruct-q4_K_M

# Run Mistral 7B
ollama run mistral

# Run a coding-focused model
ollama run codellama:13b

# Run DeepSeek Coder V2
ollama run deepseek-coder-v2:16b

# List downloaded models
ollama list

# Remove a model
ollama rm llama3.1

The first time you run a model, Ollama downloads it automatically. After that, it loads from your local cache in seconds. I keep about five models downloaded at any time, which takes around 30GB of disk space.

Ollama as an API Server

One of Ollama's best features is its built-in API server, which offers both a native API and an OpenAI-compatible endpoint under /v1. This means you can point virtually any AI tool at your local Ollama instance:

# Start the Ollama server (usually already running as a background service after install)
ollama serve

# Test with curl (the native API streams the reply as JSON lines by default)
curl http://localhost:11434/api/generate -d '{
  "model": "llama3.1",
  "prompt": "Explain quantum computing in simple terms"
}'

# OpenAI-compatible endpoint
curl http://localhost:11434/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{
  "model": "llama3.1",
  "messages": [{"role": "user", "content": "Hello!"}]
}'

This API compatibility is huge. I've connected Ollama to AI automation tools that normally require cloud APIs, and they work without any code changes. Just swap the base URL from https://api.openai.com/v1 to http://localhost:11434/v1 and you're running locally. Most clients still expect an API key, but Ollama ignores it, so any placeholder value works.
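Many OpenAI-compatible clients can be redirected through environment variables alone. This is a minimal sketch that assumes your tool is built on the official OpenAI SDKs, which read OPENAI_BASE_URL and OPENAI_API_KEY. Other tools may expect different variable names or a config-file setting, so check their docs. The `ollama_up` helper is hypothetical; it simply queries Ollama's native /api/tags model-listing endpoint.

```bash
# Redirect OpenAI SDK-based clients to a local Ollama server.
export OPENAI_BASE_URL="http://localhost:11434/v1"
# Ollama ignores the key, but many clients refuse to start without one.
export OPENAI_API_KEY="ollama"

# Hypothetical helper: succeeds if Ollama answers on its native API.
# ${OPENAI_BASE_URL%/v1} strips the /v1 suffix to get the server root.
ollama_up() {
  curl -fsS --max-time 2 "${OPENAI_BASE_URL%/v1}/api/tags" > /dev/null
}
```

Run `ollama_up && echo ready` before launching a tool. If it fails, start the server with ollama serve.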

Customizing Models with Modelfiles

Ollama lets you create custom model configurations using Modelfiles. This is great for setting system prompts, adjusting temperature, or creating specialized assistants:

# Modelfile for a coding assistant
FROM codellama:13b
SYSTEM """You are a senior software engineer. Provide concise, well-tested code solutions. Always include error handling and type hints."""
PARAMETER temperature 0.3
PARAMETER num_ctx 8192
PARAMETER top_p 0.9

Save this as Modelfile and create the custom model:

ollama create my-coder -f Modelfile
ollama run my-coder

I have about a dozen custom Modelfiles for different tasks: code review, technical writing, data analysis, brainstorming. Switching between them takes seconds.

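Ollama can also report performance stats. Running ollama run llama3.1 --verbose prints the eval rate in tokens per second after each response, and ollama ps shows how much memory each loaded model is using and whether it sits on the GPU or the CPU.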

What About llama.cpp for Maximum Performance?

While Ollama is built on top of llama.cpp, running llama.cpp directly gives you more control over performance tuning. It's more work to set up but rewards you with better speeds and finer-grained configuration.

Building llama.cpp from Source

# Clone the repository
git clone https://github.com/ggml-org/llama.cpp
cd llama.cpp

# Build with CUDA support (NVIDIA GPUs)
cmake -B build -DGGML_CUDA=ON
cmake --build build --config Release -j$(nproc)

# Build with Metal support (Apple Silicon; Metal is already on by default on macOS)
cmake -B build -DGGML_METAL=ON
cmake --build build --config Release -j$(sysctl -n hw.ncpu)

# Build for CPU-only
cmake -B build
cmake --build build --config Release -j$(nproc)

Running Models with llama.cpp

# Basic inference
./build/bin/llama-cli -m models/llama-3.1-8b-instruct-Q4_K_M.gguf \
  -p "Write a Python function to sort a list" \
  -n 512 \
  --threads 8

# Start an HTTP server (web UI plus an OpenAI-compatible API on port 8080)
./build/bin/llama-server -m models/llama-3.1-8b-instruct-Q4_K_M.gguf \
  --host 0.0.0.0 \
  --port 8080 \
  --threads 8 \
  --n-gpu-layers 35 \
  --ctx-size 8192

The --n-gpu-layers flag is the key to GPU/CPU split inference. If your model has 35 layers and your GPU can handle 25 of them, set --n-gpu-layers 25 and the rest will run on CPU. This lets you run models that are slightly too large for your VRAM, at the cost of some speed.
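Picking a starting value for --n-gpu-layers is mostly division: estimate the size of one layer, then see how many fit in the VRAM you can spare. The rough bash sketch below assumes all layers are about the same size (model file size ÷ layer count) and reserves a hypothetical 1.5GB for the KV cache and CUDA buffers. `gpu_layers` is an illustrative helper, not part of llama.cpp.

```bash
# Hypothetical helper: starting value for --n-gpu-layers.
# Assumes equal-sized layers (model_gb / n_layers) and keeps reserve_gb free
# for KV cache and CUDA buffers (the 1.5GB default is an assumption).
gpu_layers() {
  local model_gb="$1" n_layers="$2" vram_gb="$3" reserve_gb="${4:-1.5}"
  awk -v m="$model_gb" -v n="$n_layers" -v v="$vram_gb" -v r="$reserve_gb" 'BEGIN {
    per_layer = m / n
    fit = int((v - r) / per_layer)   # whole layers that fit in the spare VRAM
    if (fit < 0) fit = 0
    if (fit > n) fit = n             # everything fits: offload all layers
    print fit
  }'
}

gpu_layers 20 64 16   # hypothetical 20GB, 64-layer model on a 16GB GPU
```

For that hypothetical model this suggests 46 layers on the GPU. Treat it as a starting point: if you still hit out-of-memory errors, lower it a few layers at a time.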

llama.cpp vs. Ollama: When to Use Each

Ollama is the right choice for:

- Getting started quickly

- Managing multiple models

- Serving models as an API

- Users who don't want to deal with compilation

llama.cpp directly is better for:

- Maximum performance tuning

- Research and experimentation

- Custom build configurations

- Embedding into other applications

Personally, I use Ollama for daily work and switch to llama.cpp when I'm benchmarking or testing new model releases before they're available in the Ollama library.

What Are the Best Budget and High-End Build Configurations?

Let me share three concrete builds at different price points. These are based on configurations I've actually tested, not theoretical specs from a spreadsheet.

Budget Build: $500-700 (Run 7B-13B Models)

This build is perfect for developers who want a local AI assistant for coding and general tasks. I built this exact configuration for a friend who was tired of paying for API access.

| Component | Recommendation | Price |
|---|---|---|
| GPU | Used RTX 3060 12GB | $180-220 |
| CPU | AMD Ryzen 5 5600 | $90-110 |
| RAM | 32GB DDR4-3200 (2x16GB) | $55-70 |
| Motherboard | B550 mATX | $70-90 |
| Storage | 1TB NVMe SSD | $60-75 |
| PSU | 550W 80+ Bronze | $45-55 |
| Case | Basic mATX | $40-50 |

Total: ~$540-670

Performance expectations:

- Llama 3.1 8B Q4_K_M: 35-45 tok/s

- Mistral 7B Q4_K_M: 40-50 tok/s

- CodeLlama 13B Q4_K_M: 20-28 tok/s (tight fit in 12GB VRAM)

The used RTX 3060 12GB is the hero of this build. Those 12GB of VRAM let you run 13B Q4 models that an 8GB card simply cannot handle. Used prices have dropped significantly as miners and gamers upgrade, making this card an absolute steal for AI workloads.

Mid-Range Build: $1,400-1,700 (Run 13B-34B Models)

This is my personal daily driver setup. It handles everything I throw at it for development and content work.

| Component | Recommendation | Price |
|---|---|---|
| GPU | RTX 4070 Ti Super 16GB | $700-800 |
| CPU | AMD Ryzen 7 7700X | $200-230 |
| RAM | 64GB DDR5-5600 (2x32GB) | $130-160 |
| Motherboard | B650 ATX | $130-160 |
| Storage | 2TB NVMe SSD | $100-130 |
| PSU | 750W 80+ Gold | $80-100 |
| Case | Mid-tower ATX | $60-80 |

Total: ~$1,400-1,660

Performance expectations:

- Llama 3.1 8B Q4_K_M: 55-70 tok/s

- Qwen 2.5 32B Q4_K_M: 18-25 tok/s (with some CPU offloading)

- CodeLlama 34B Q4_K_M: 15-22 tok/s

- CPU inference on 13B Q4: 12-18 tok/s (DDR5 helps significantly)

High-End Build: $3,000-4,500 (Run 70B+ Models)

This is for people who want to run the largest open source models without compromise. I've tested this configuration and it's genuinely impressive.

| Component | Recommendation | Price |
|---|---|---|
| GPU | RTX 4090 24GB (or 2x RTX 3090) | $1,600-2,000 |
| CPU | AMD Ryzen 9 7950X | $400-450 |
| RAM | 128GB DDR5-5600 (4x32GB) | $280-350 |
| Motherboard | X670E ATX | $220-280 |
| Storage | 4TB NVMe SSD | $200-250 |
| PSU | 1000W 80+ Platinum | $150-180 |
| Case | Full tower with airflow | $100-130 |

Total: ~$2,950-3,640

For 70B models, the dual RTX 3090 path (48GB total VRAM) is actually more practical than a single 4090 (24GB). Yes, 3090s are old, but 24GB each at used prices of $700-900 makes them the best value per GB of VRAM available. This is a hot take I'll stand behind: buying two used 3090s beats any other option for large model inference in 2026 unless you're ready to spend $5,000+ on professional cards.

The sweet spot for most users is the mid-range build, which handles 90% of practical local LLM use cases without breaking the bank.

What About Apple Silicon Macs for Local LLMs?

Apple Silicon deserves its own section because it takes a fundamentally different approach. Instead of discrete GPU VRAM, M-series chips use unified memory that's shared between CPU and GPU. This means a MacBook Pro with 36GB of unified memory can fit models that would require a $700+ GPU on a PC.

I tested a MacBook Pro M3 Pro with 36GB of RAM and was genuinely surprised by the results:

- Llama 3.1 8B Q4_K_M: 30-38 tok/s

- Qwen 2.5 32B Q4_K_M: 12-16 tok/s

- Llama 3.1 70B Q4_K_M: 5-8 tok/s (slow but functional)

Those speeds aren't as fast as a dedicated NVIDIA GPU, but the fact that a laptop can run a 70B model at all is remarkable. The M3 Max and M4 Max with 64GB or 128GB of unified memory push even further into territory that used to require server-grade hardware.

The catch is cost. A Mac Studio M4 Max with 128GB of unified memory runs about $4,000-5,000, which is comparable to the high-end PC build but in a much smaller, quieter form factor. For people who need a capable Mac anyway, the AI inference capability is essentially a free bonus.

My experience: I use a Mac for travel and a PC workstation at my desk. The Mac handles 7B-13B models well enough for on-the-go development. When I need to run larger models or do heavy testing, the desktop PC with its dedicated GPU is significantly faster.

Performance Benchmarks: Real Numbers from Real Hardware

I'm going to share actual benchmark numbers I've collected, not manufacturer claims. These were measured using llama.cpp's built-in benchmarking with consistent settings across all hardware.

Tokens Per Second by Hardware (Llama 3.1 8B, Q4_K_M)

| Hardware | Prompt Processing | Generation | Notes |
|---|---|---|---|
| RTX 4090 24GB | 2,800 tok/s | 85 tok/s | Fastest consumer GPU |
| RTX 4070 Ti Super 16GB | 1,600 tok/s | 58 tok/s | Best value mid-range |
| RTX 4060 Ti 16GB | 1,100 tok/s | 42 tok/s | Good budget option |
| RTX 3060 12GB | 850 tok/s | 35 tok/s | Best budget option |
| RTX 3090 24GB | 1,900 tok/s | 65 tok/s | Great used value |
| M3 Pro 36GB | 500 tok/s | 34 tok/s | Laptop form factor |
| Ryzen 7 7700X (CPU only) | 120 tok/s | 14 tok/s | No GPU needed |

Tokens Per Second by Hardware (Llama 3.1 70B, Q4_K_M)

| Hardware | Prompt Processing | Generation | Notes |
|---|---|---|---|
| 2x RTX 3090 48GB | 650 tok/s | 16 tok/s | Best consumer option |
| RTX 4090 + CPU offload | 400 tok/s | 11 tok/s | Partial GPU fit |
| M4 Max 128GB | 350 tok/s | 10 tok/s | Impressive for laptop |
| Ryzen 9 7950X (CPU, 128GB DDR5) | 45 tok/s | 4 tok/s | Slow but works |

A few observations from these benchmarks. First, prompt processing speed (how fast the model processes your input) matters a lot for long conversations. If you're feeding the model 4,000 tokens of context, the difference between 2,800 tok/s and 120 tok/s is the difference between instant and a noticeable wait. Second, generation speed (how fast the model produces output) is what determines the "feel" of using the model. Anything above 15 tok/s feels conversational. Below 10 tok/s starts to feel sluggish.
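To put prompt processing and generation together, you can estimate how long a full reply takes: prompt tokens ÷ prompt-processing speed, plus output tokens ÷ generation speed. The small bash sketch below uses two rows from the Llama 3.1 8B table above. The 500-token reply length is an assumption.

```bash
# Seconds until a complete reply = prompt tokens / prompt-processing rate
#                                 + output tokens / generation rate
response_time() {
  awk -v p="$1" -v pp="$2" -v o="$3" -v tg="$4" 'BEGIN {
    prefill = p / pp
    gen = o / tg
    printf "%.1f s prefill + %.1f s generation = %.1f s\n", prefill, gen, prefill + gen
  }'
}

# 4,000-token prompt, 500-token reply (assumed), rates from the Llama 3.1 8B table
response_time 4000 2800 500 85   # RTX 4090
response_time 4000 120 500 14    # Ryzen 7 7700X, CPU only
```

The GPU finishes in about 7 seconds. The CPU-only machine needs over a minute, and roughly half of that goes to reading the prompt before the first output token appears.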

Practical Tips I've Learned the Hard Way

After a year of running local models, I've accumulated a list of things I wish someone had told me from the start. These tips will save you time and frustration.

Monitor your VRAM usage, not just capacity. Just because a model fits in your VRAM doesn't mean the system is comfortable. The KV cache (which stores conversation context) grows as conversations get longer. A model that works fine for short exchanges might OOM halfway through a long coding session. Use nvidia-smi or nvtop to watch VRAM usage in real time.

SSD speed matters for model loading, not inference. Once a model is loaded into memory, your disk speed is irrelevant. But loading a 25GB model takes roughly 5 seconds from a fast NVMe SSD (~5 GB/s), closer to 45 seconds from a SATA SSD (~550 MB/s), and a few minutes from a hard drive. That adds up if you switch models frequently.

Keep your GPU drivers updated. NVIDIA's CUDA updates regularly include performance improvements for inference workloads. I saw a 10% speed boost after updating from CUDA 12.3 to 12.6 without changing anything else.

Context window size affects VRAM usage. Running a model with a 32K context window uses substantially more VRAM than the same model at 4K context. If you're VRAM-constrained, reducing your context window is an easy way to free up memory. For most tasks, 4096-8192 tokens of context is more than enough.
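To see why long conversations and large context windows eat VRAM, you can estimate the KV cache directly. It stores one key vector and one value vector per layer, per KV head, per token. The bash sketch below plugs in Llama 3.1 8B's published architecture (32 layers, 8 KV heads, head dimension 128) and assumes an FP16 cache. Other models need their own numbers from their config files.

```bash
# KV cache bytes = 2 (keys and values) * layers * kv_heads * head_dim * context * bytes per element
kv_cache_gib() {
  local layers="$1" kv_heads="$2" head_dim="$3" ctx="$4" bytes="${5:-2}"  # 2 bytes = FP16 cache
  awk -v l="$layers" -v h="$kv_heads" -v d="$head_dim" -v c="$ctx" -v b="$bytes" \
    'BEGIN { printf "%.2f\n", 2 * l * h * d * c * b / (1024 ^ 3) }'
}

# Llama 3.1 8B: 32 layers, 8 KV heads (grouped-query attention), head dimension 128
for ctx in 4096 8192 32768; do
  echo "Llama 3.1 8B, ${ctx}-token context: $(kv_cache_gib 32 8 128 "$ctx") GiB"
done
```

At FP16 that is 128 KiB per token: about 0.5 GiB at 4K context, 1 GiB at 8K, and 4 GiB at 32K, all on top of the model weights.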

Don't sleep on the "small" models. The quality gap between 7B-class models from a couple of years ago and today's is staggering. Models like Llama 3.1 8B and Qwen 2.5 7B outperform earlier-generation 70B models on many benchmarks. Before you invest in hardware to run a 70B model, test whether a well-tuned 7B or 13B model meets your actual needs.

On the Apatero.com blog, we've covered how to choose the right GPU for AI workloads, and many of the same principles apply here. The best GPU for image generation isn't always the best for LLM inference, though, because LLMs are more memory-bound than compute-bound.

Which Open Source Models Should You Start With?

The model landscape changes fast, but as of early 2026, these are the models I keep coming back to for daily use.

General Purpose

- Llama 3.1 8B Instruct: Still the best all-around small model. Fast, capable, and well-supported by every tool.

- Qwen 2.5 72B: The best open source large model right now. Competitive with GPT-4 on many benchmarks.

- Mistral 7B v0.3: Excellent for its size, especially strong at following instructions precisely.

- DeepSeek V3: Incredible multilingual capabilities and reasoning. The 685B MoE architecture means only ~37B parameters are active during inference.

Coding Specific

- DeepSeek Coder V2: My go-to for coding tasks. Strong across Python, JavaScript, TypeScript, Rust, and Go.

- CodeLlama 34B: Still competitive and runs well on mid-range hardware.

- Qwen 2.5 Coder 32B: Surprisingly good at understanding complex codebases and generating tests.

Creative Writing

- Llama 3.1 70B: More creative and nuanced than smaller models for long-form content.

- Mixtral 8x7B: Good balance of quality and speed for creative tasks, with its MoE architecture keeping inference fast.

I'll share a personal anecdote here. I spent two weeks trying to find the "perfect" model for my workflow before realizing that model selection matters less than prompt engineering. A well-prompted Llama 3.1 8B consistently beat a poorly-prompted 70B model in my tests. Spend as much time learning to prompt effectively as you spend choosing hardware.

Troubleshooting Common Issues

Even with good hardware and software, things go wrong. Here are the issues I've hit most frequently and how to fix them.

"CUDA out of memory" errors:

Reduce your context window size, use a more aggressive quantization, or lower --n-gpu-layers so that some layers run on the CPU instead. If the model only just misses fitting, switching from Q4_K_M to Q4_K_S often frees up enough VRAM.

Slow generation speeds:

Check that GPU offloading is actually working. Run nvidia-smi and verify your GPU is being utilized. If utilization is near zero, the model might be running on CPU. Also verify you're not thermal throttling by monitoring GPU temperature.

Model produces garbage output:

You're probably using the wrong prompt template. Different models expect different chat formats (ChatML, Llama format, Alpaca format). Ollama handles this automatically, but if you're using llama.cpp directly, make sure your prompt template matches the model.

System freezes during inference:

Almost always a RAM issue. Your system is swapping to disk because the model plus OS plus applications exceed your physical RAM. Add more RAM or close other applications before running inference.

Ollama won't use my GPU:

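On Linux, make sure the NVIDIA driver is installed; the NVIDIA Container Toolkit is only needed if you run Ollama inside Docker. On Windows, verify that your GPU drivers support CUDA. With a model loaded, run ollama ps: the PROCESSOR column shows whether it's running on the GPU, the CPU, or split across both.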

What Does the Future Look Like for Local LLM Hardware?

The trajectory is clear: models are getting smaller and better, and hardware is getting more capable. Here are trends I'm watching closely.

NVIDIA's next-generation consumer GPUs are rumored to include models with 32GB VRAM at the mid-range price point. If that happens, running 34B models on a single consumer card becomes trivial. AMD is also making progress with ROCm support, and their GPUs offer more VRAM per dollar, though software support still lags behind NVIDIA.

On the model side, mixture-of-experts architectures like DeepSeek V3 and Mixtral represent the future. These models have enormous total parameter counts but only activate a fraction of them for each token, giving you the quality of a large model with the per-token compute and generation speed of a smaller one. The catch is memory: all of the experts still have to be loaded, so you need RAM or VRAM for the full parameter count.

The tooling will keep improving too. Projects like Apatero.com are making it easier to integrate local models into practical workflows, and the open source community shows no signs of slowing down. I expect that by the end of 2026, running a capable local LLM will be as standard as running a local database.

Frequently Asked Questions

Can I run an LLM locally without a GPU?

Yes. llama.cpp supports CPU-only inference, and with a modern CPU (16+ cores) and fast DDR5 RAM (64GB+), you can run 7B-13B models at 10-18 tokens per second. It's slower than GPU inference but completely usable for many tasks.

How much does it cost to run LLMs locally compared to API pricing?

A budget build of $500-700 can replace $100-200/month in API costs. Most setups pay for themselves within 4-8 months of regular use. The electricity cost is negligible, typically adding $5-15/month depending on usage and local power rates.

Is the quality of local models comparable to ChatGPT or Claude?

For many tasks, yes. Modern 13B+ models handle coding, summarization, drafting, and general Q&A at a level that's close to GPT-4 for routine work. Where cloud models still have a clear edge is complex multi-step reasoning, very long context understanding, and highly specialized knowledge domains.

What's the best GPU for running local LLMs on a budget?

The used NVIDIA RTX 3060 12GB is the best budget option at $180-220. Its 12GB of VRAM fits 13B Q4 models that cheaper 8GB cards cannot run. For a bit more money, the RTX 3090 24GB at used prices of $700-900 is the best VRAM per dollar available.

Can I run multiple models simultaneously?

Yes, if you have enough VRAM and RAM. Ollama supports loading multiple models at once. Each model consumes its own VRAM allocation, so you'll need the combined VRAM of all loaded models. In practice, most people load one model at a time and switch as needed.

How do I fine-tune a local model on my own data?

Fine-tuning requires more VRAM than inference. Parameter-efficient techniques like LoRA and QLoRA reduce the VRAM requirements significantly. You can fine-tune a 7B model with QLoRA on a 12GB GPU. Ollama doesn't support fine-tuning directly, but you can fine-tune with tools like Axolotl or Unsloth and then convert the result to GGUF for use in Ollama.

Are there any legal concerns with running open source LLMs?

Most popular open source models (Llama 3.1, Mistral, Qwen) have permissive licenses that allow commercial use. Always check the specific license for your chosen model. Meta's Llama models, for example, have a community license that's free for organizations under 700 million monthly active users.

How do I keep my local LLM setup updated with new models?

With Ollama, simply run ollama pull model-name to get the latest version. For llama.cpp, pull the latest source code and rebuild. New models in GGUF format typically appear on Hugging Face within hours of release, often quantized by community members such as bartowski.

Does running a local LLM pose any security risks?

Local inference is inherently more secure than cloud APIs since your data never leaves your machine. However, be cautious about downloading model files from untrusted sources, as GGUF files could theoretically contain exploits. Stick to models from official repositories or well-known community quantizers.

What's the difference between GGUF and other model formats?

GGUF (GPT-Generated Unified Format) is the standard format for llama.cpp and Ollama. It's optimized for efficient inference and bundles the quantized weights, tokenizer, and model metadata into a single file; each quantization level (Q4_K_M, Q8_0, and so on) is published as a separate GGUF file. Other formats like SafeTensors (used by PyTorch/Hugging Face) and ONNX serve different ecosystems. For local inference with the tools discussed in this guide, GGUF is what you want.

Final Thoughts

Running open source LLMs locally in 2026 is practical, affordable, and genuinely useful. The hardware requirements are reasonable, the tooling is mature, and the models themselves are better than ever. Whether you build a $500 budget rig or a $4,000 powerhouse, you'll get a private, unlimited AI assistant that works offline and pays for itself within months.

Start simple. Install Ollama, pull Llama 3.1 8B, and spend a week using it for your actual work. If you find yourself wanting better quality or faster speeds, this guide gives you a clear upgrade path. And if you're already deep into the AI tooling ecosystem with platforms like Apatero.com, adding a local model to your workflow is one of the highest-ROI moves you can make.

The best local LLM setup is the one you actually use. Don't let perfect be the enemy of good. Get something running today, and optimize from there.

Ready to Create Your AI Influencer?

Join 115 students mastering ComfyUI and AI influencer marketing in our complete 51-lesson course.

Related Articles

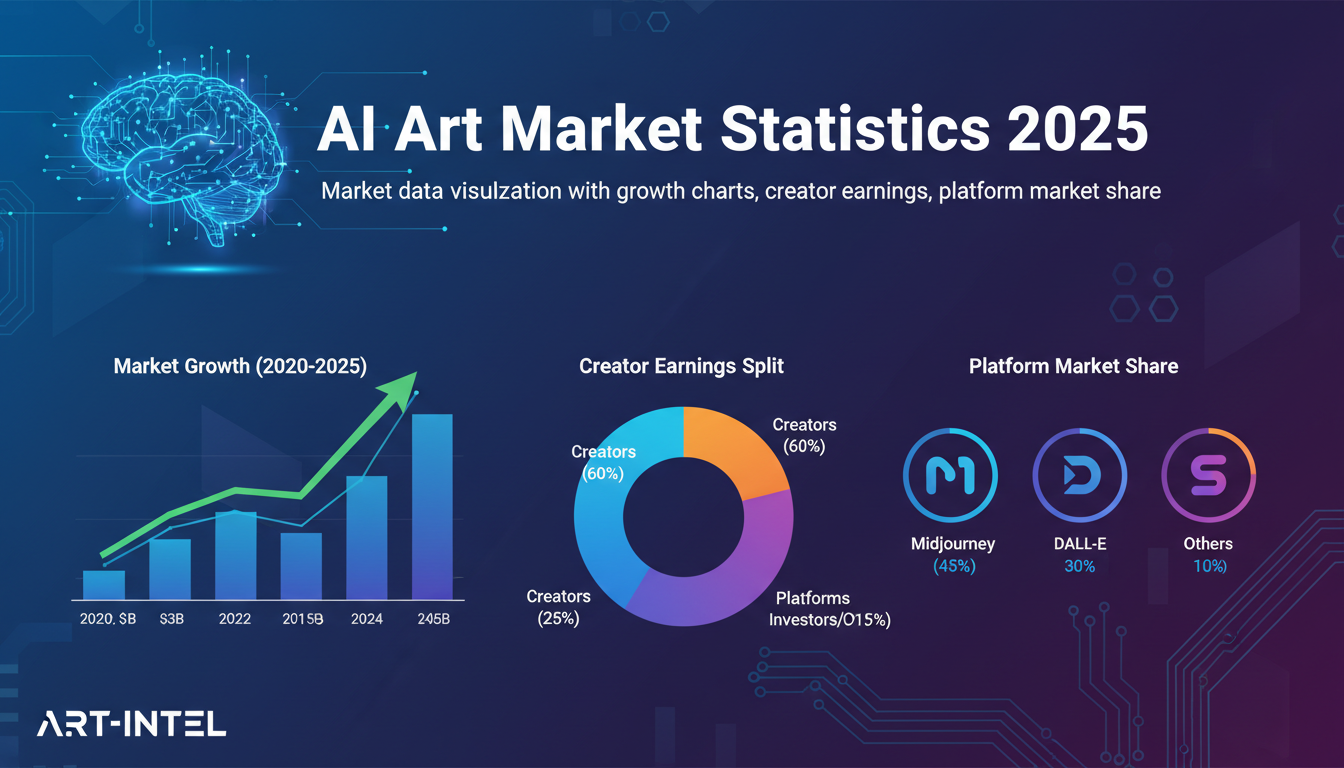

AI Art Market Statistics 2025: Industry Size, Trends, and Growth Projections

Comprehensive AI art market statistics including market size, creator earnings, platform data, and growth projections with 75+ data points.

AI Automation Tools: Transform Your Business Workflows in 2025

Discover the best AI automation tools to transform your business workflows. Learn how to automate repetitive tasks, improve efficiency, and scale operations with AI.

AI Avatar Generator: I Tested 15 Tools for Profile Pictures, Gaming, and Social Media in 2026

Comprehensive review of the best AI avatar generators in 2026. I tested 15 tools for profile pictures, 3D avatars, cartoon styles, gaming characters, and professional use cases.