ComfyUI V3 Schema: How to Update and Build Custom Nodes

Complete guide to ComfyUI V3 custom node schema. Learn how to migrate V1 nodes to V3, build new nodes with define_schema and comfy_entrypoint, publish subgraphs, and test your V3 nodes.

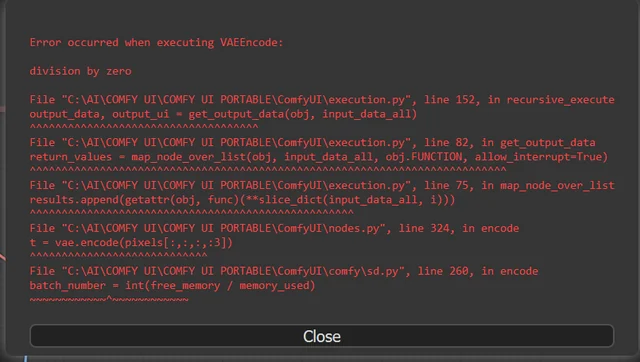

I've been building ComfyUI custom nodes since early 2024, and I'll be honest: the V1 node system was always held together with duct tape and good intentions. You'd define inputs through nested dictionaries, hope your type hints worked, and pray that the next ComfyUI update didn't quietly break your NODE_CLASS_MAPPINGS. I had a custom upscaling node that broke three times in four months because of internal API changes. No deprecation warnings, no migration path. Just red boxes in the UI and confused users filing GitHub issues at 2 AM.

That era is over. The ComfyUI V3 schema is a proper, versioned public API that treats custom node developers like first-class citizens instead of afterthoughts. It introduces explicit type definitions, stateless execution, async support, and real backward compatibility guarantees. If you've been putting off the migration because you thought it would be painful, I've got good news: it's surprisingly mechanical once you understand the pattern. I migrated a pack of 12 nodes in a single afternoon.

Quick Answer: The ComfyUI V3 schema replaces the old dictionary-based node definitions with a clean, object-oriented system built on io.ComfyNode, io.Schema, and io.NodeOutput. Instead of NODE_CLASS_MAPPINGS, you export nodes through an async comfy_entrypoint() function. The execute method becomes a stateless classmethod, inputs use typed objects like io.Image.Input() and io.Int.Input(), and the entire API is versioned so future ComfyUI updates won't break your nodes. Migration from V1 is mostly mechanical: restructure your class, move your logic to a classmethod execute, and define your schema in define_schema().

- V3 schema uses versioned imports from `comfy_api.latest` or pinned versions like `comfy_api.v0_0_3`, guaranteeing backward compatibility

- Nodes inherit from `io.ComfyNode` and define their interface through a `define_schema()` classmethod returning an `io.Schema` object

- The execute function is now a stateless classmethod (receives `cls` instead of `self`), enabling future process isolation and parallel execution

- V1's `IS_CHANGED` is renamed to `fingerprint_inputs`, and `NODE_CLASS_MAPPINGS` is replaced by `comfy_entrypoint()`

- Subgraphs can be published to the node library starting from ComfyUI 0.3.63, creating reusable blueprint nodes

- All new node features and extensions will only be added to V3, so migrating now prevents falling behind

If you're just getting into the custom node ecosystem, start with my guide on essential ComfyUI custom nodes to understand what's already available before building your own. And if you're building nodes for production use, you'll want to know how to turn ComfyUI into a production API so your nodes work in headless environments too.

Why Did ComfyUI Need a V3 Schema in the First Place?

The V1 custom node system worked, but it worked the way a Jenga tower works: precariously, and with the constant threat of collapse if anyone bumped the table. Let me explain the specific problems that made V3 necessary.

First, there was no separation between internal and public APIs. Custom node developers were reaching into ComfyUI's internals because there was no other way to accomplish certain tasks. When the ComfyUI team refactored those internals, nodes broke. I watched the ComfyUI subreddit light up at least once a month with posts like "Updated ComfyUI, now half my custom nodes show red boxes." That's not a user problem. That's an architecture problem.

Second, Python dependency conflicts between node packs could render your entire ComfyUI installation unusable. I once installed a node pack that required a specific version of OpenCV that conflicted with another pack's Pillow dependency. The result? Neither pack worked, and I spent two hours untangling the mess in my virtual environment. V3's solution to this is process isolation. Each node pack running the public API can eventually live in its own Python process, so one pack's dependencies can't poison another's.

Third, dynamic inputs and outputs were technically possible in V1, but the implementation was fragile. If you wanted a node that could accept a variable number of inputs or change its output type based on configuration, you were essentially hacking around the system. V3 gives these features first-class support with proper APIs.

Here's my hot take: the V1 system lasted as long as it did because the ComfyUI community is extraordinarily patient and technically skilled. In any other ecosystem, developers would have revolted much earlier. The fact that people were building production-quality node packs on top of an API that could break with any minor update says more about the community's resilience than it does about the quality of the V1 architecture.

V3 introduces explicit versioning, typed inputs/outputs, and process isolation that V1 never had.

What Does the V3 Node Structure Actually Look Like?

Let's get into the code. The best way to understand V3 is to see a complete node side by side with what V1 looked like. I'll walk through each component and explain why it changed.

V1 (Legacy) Node Structure

Here's what a simple image inversion node looked like in V1:

    class InvertImageV1:
        @classmethod
        def INPUT_TYPES(cls):
            return {
                "required": {
                    "image": ("IMAGE",),
                }
            }

        RETURN_TYPES = ("IMAGE",)
        FUNCTION = "execute"
        CATEGORY = "image/transform"

        def execute(self, image):
            inverted = 1.0 - image
            return (inverted,)

    NODE_CLASS_MAPPINGS = {
        "InvertImageV1": InvertImageV1
    }

    NODE_DISPLAY_NAME_MAPPINGS = {
        "InvertImageV1": "Invert Image"
    }

Notice how the node's identity is scattered across multiple places: the class name, INPUT_TYPES as a classmethod returning a dictionary, RETURN_TYPES as a class variable, FUNCTION pointing to the execute method by string name, and the mappings at the module level. It works, but it's messy. Type information is encoded as strings in tuples. There's no validation. The execute method is an instance method, implying state that the node system doesn't actually support well.

V3 Node Structure

Here's the same node in V3:

    from comfy_api.latest import ComfyExtension, io, ui

    class InvertImage(io.ComfyNode):
        @classmethod
        def define_schema(cls):
            return io.Schema(
                node_id="InvertImage",
                display_name="Invert Image",
                category="image/transform",
                description="Inverts the colors of an input image",
                inputs=[
                    io.Image.Input("image"),
                ],
                outputs=[
                    io.Image.Output(display_name="inverted"),
                ],
            )

        @classmethod
        def execute(cls, image):
            inverted = 1.0 - image
            return io.NodeOutput(inverted)

    class MyExtension(ComfyExtension):
        async def get_node_list(self):
            return [InvertImage]

    async def comfy_entrypoint():
        return MyExtension()

Everything is in one place. The schema definition tells you exactly what this node does, what it takes in, and what it puts out. The execute method is a classmethod, making the stateless nature explicit. And instead of module-level dictionaries, you have a proper extension class with an async entrypoint.

I remember the first time I refactored one of my V1 nodes to V3. It took about 15 minutes, and when I was done, I just stared at the code thinking "why wasn't it always like this?" The clarity improvement is dramatic.

The Import System

One of the smartest decisions the ComfyUI team made was versioning the API imports. You can either use the latest version:

from comfy_api.latest import ComfyExtension, io, ui

Or pin to a specific version for stability:

from comfy_api.v0_0_3 import ComfyExtension, io, ui

Pinning means your node will keep working even if the API evolves. The latest import always points to the newest version, which is fine for development but risky for published nodes. My recommendation: develop with latest, ship with a pinned version. That way you get the best of both worlds.

How Do You Migrate Existing V1 Nodes to V3?

Migration is more mechanical than creative. Once you've done two or three nodes, it becomes muscle memory. Here's the step-by-step process I follow, refined after migrating dozens of my own nodes and helping a few other developers migrate theirs.

Step 1: Set Up Your Imports

Replace whatever imports you had at the top with the V3 API:

from comfy_api.latest import ComfyExtension, io, ui

If your node uses built-in UI helpers for saving or previewing images, you'll need the ui module. If not, you can leave it out, but I typically import it anyway since it's lightweight.

Step 2: Convert Your Class to Inherit from io.ComfyNode

Change your class declaration from a plain class to an io.ComfyNode subclass:

    # Before
    class MyCustomNode:
        ...

    # After
    class MyCustomNode(io.ComfyNode):
        ...

Step 3: Replace INPUT_TYPES, RETURN_TYPES, and Class Variables with define_schema

This is where the bulk of the work happens. You need to translate your dictionary-based input definitions into typed io objects. Here's the mapping for the most common types:

    # V1 → V3 Type Mapping
    # ("IMAGE",)           → io.Image.Input("name")
    # ("INT", {...})       → io.Int.Input("name", default=0, min=0, max=100)
    # ("FLOAT", {...})     → io.Float.Input("name", default=1.0, step=0.01)
    # ("STRING", {...})    → io.String.Input("name", default="", multiline=False)
    # ("BOOLEAN", {...})   → io.Boolean.Input("name", default=True)
    # ("MODEL",)           → io.Model.Input("name")
    # ("VAE",)             → io.Vae.Input("name")
    # ("CLIP",)            → io.Clip.Input("name")
    # ("CONDITIONING",)    → io.Conditioning.Input("name")
    # ("LATENT",)          → io.Latent.Input("name")
    # (["option1", ...],)  → io.Combo.Input("name", options=["option1", ...])

Optional inputs that were in the "optional" dictionary in V1 simply get optional=True:

    io.Mask.Input("mask", optional=True)
    io.Vae.Input("vae", optional=True)
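To make the mapping concrete, here is a minimal sketch of a helper that reads a V1-style INPUT_TYPES dictionary and prints matching V3 input declarations as source text. The names `v1_inputs_to_v3` and `V1_TO_V3` are hypothetical and not part of comfy_api. The sketch only covers the types listed above, and it assumes V1 option keys such as default, min, and max carry over under the same names, which you should verify for anything less common. It emits strings for you to paste and review rather than constructing real io objects.

```python
# Hypothetical migration helper: turns a V1 INPUT_TYPES dict into V3 source lines.
# It only generates text; it does not import or call comfy_api.

V1_TO_V3 = {
    "IMAGE": "io.Image", "INT": "io.Int", "FLOAT": "io.Float",
    "STRING": "io.String", "BOOLEAN": "io.Boolean", "MODEL": "io.Model",
    "VAE": "io.Vae", "CLIP": "io.Clip", "CONDITIONING": "io.Conditioning",
    "LATENT": "io.Latent",
}


def v1_inputs_to_v3(input_types):
    """Return one V3 input declaration (as a string) per V1 input."""
    lines = []
    for section in ("required", "optional"):
        for name, spec in input_types.get(section, {}).items():
            v1_type = spec[0]
            options = dict(spec[1]) if len(spec) > 1 else {}
            if isinstance(v1_type, list):
                # V1 encodes a dropdown as a list of choices.
                v3_type = "io.Combo"
                options = {"options": v1_type, **options}
            else:
                v3_type = V1_TO_V3[v1_type]
            if section == "optional":
                options["optional"] = True
            args = [repr(name)] + [f"{k}={v!r}" for k, v in options.items()]
            lines.append(f"{v3_type}.Input({', '.join(args)})")
    return lines


legacy = {
    "required": {
        "image": ("IMAGE",),
        "steps": ("INT", {"default": 20, "min": 1, "max": 100}),
        "mode": (["fast", "slow"],),
    },
    "optional": {"vae": ("VAE",)},
}

for line in v1_inputs_to_v3(legacy):
    print(line)
```

An unknown V1 type raises a KeyError here on purpose, so anything outside the table gets a manual look instead of a silent guess.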

Step 4: Convert execute to a Classmethod

This is the change that trips people up the most. In V1, execute was an instance method receiving self. In V3, it's a classmethod receiving cls. If your V1 node stored state on self, you need to rethink that pattern.

    # Before (V1)
    def execute(self, image, strength=1.0):
        result = process(image, strength)
        return (result,)

    # After (V3)
    @classmethod
    def execute(cls, image, strength=1.0):
        result = process(image, strength)
        return io.NodeOutput(result)

Notice that return values change from tuples to io.NodeOutput. This is cleaner and more explicit about what you're returning.

Step 5: Replace NODE_CLASS_MAPPINGS with comfy_entrypoint

Delete the module-level NODE_CLASS_MAPPINGS and NODE_DISPLAY_NAME_MAPPINGS dictionaries. Replace them with an extension class and entrypoint:

    class MyNodePack(ComfyExtension):
        async def get_node_list(self):
            return [MyCustomNode, AnotherNode, YetAnotherNode]

    async def comfy_entrypoint():
        return MyNodePack()

Step 6: Handle IS_CHANGED (Now fingerprint_inputs)

If your V1 node used IS_CHANGED to control caching behavior, rename it to fingerprint_inputs:

    # Before (V1)
    @classmethod
    def IS_CHANGED(cls, **kwargs):
        return float("nan")  # Always re-execute

    # After (V3)
    @classmethod
    def fingerprint_inputs(cls, **kwargs):
        return float("nan")  # Always re-execute

The behavior is the same. The name just makes more sense now. The function signals to the execution engine whether the node's inputs have meaningfully changed since the last run.
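A common use for this hook is a node that loads a file: returning a hash of the file contents lets the engine skip re-execution until the file actually changes. Here is a minimal sketch, assuming the node has a `path` input. `file_fingerprint` is a hypothetical helper you would call from `fingerprint_inputs`, and nothing in it depends on comfy_api.

```python
import hashlib
import os
import tempfile


def file_fingerprint(path, chunk_size=1 << 20):
    """Return a SHA-256 hex digest of the file at `path`, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


# Inside a node this would be wired up roughly as:
#     @classmethod
#     def fingerprint_inputs(cls, path, **kwargs):
#         return file_fingerprint(path)

# Demo: identical contents give the same fingerprint, edited contents a new one.
with tempfile.TemporaryDirectory() as tmp:
    demo_path = os.path.join(tmp, "input.bin")
    with open(demo_path, "wb") as f:
        f.write(b"first version")
    before = file_fingerprint(demo_path)
    unchanged = file_fingerprint(demo_path)
    with open(demo_path, "wb") as f:
        f.write(b"second version")
    after = file_fingerprint(demo_path)

print(before == unchanged, before == after)
```

Hashing the contents costs a full read on every check, so for very large files a cheaper signal such as modification time plus size may be the better trade-off.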

The V1 to V3 migration follows a predictable pattern that becomes routine after a few nodes.

What Are the Advanced V3 Features Worth Knowing About?

Once you've got the basics down, V3 opens up capabilities that were either impossible or extremely hacky in V1. These are the features that make the migration worthwhile beyond just future-proofing.

Async Execute Methods

V3 supports async execution natively. If your node makes API calls, reads from disk, or performs any I/O-bound work, you can declare execute as async and use await:

    @classmethod
    async def execute(cls, prompt, api_key):
        response = await some_async_api_call(prompt, api_key)
        return io.NodeOutput(response)

This is genuinely useful for nodes that call external services. I built a node that sends prompts to an LLM API, and it used to block the entire ComfyUI execution pipeline while waiting for the response. With async execute, other parts of the workflow can proceed while the API call resolves. It's a meaningful performance improvement for complex workflows.
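To see why an async classmethod helps, here is a minimal sketch using only asyncio. `fake_api_call` and `PromptNode` are hypothetical stand-ins: no network request happens, and the class does not subclass io.ComfyNode. How much overlap you get inside ComfyUI depends on its own scheduler, so treat this as an illustration of the mechanism rather than a benchmark.

```python
import asyncio


async def fake_api_call(prompt, delay=0.05):
    """Stand-in for a real network request (hypothetical, no I/O happens)."""
    await asyncio.sleep(delay)
    return f"response to {prompt!r}"


class PromptNode:
    """Plain class for illustration; a real node would subclass io.ComfyNode."""

    @classmethod
    async def execute(cls, prompt):
        # While this coroutine is suspended on await, the event loop is free
        # to run other coroutines.
        return await fake_api_call(prompt)


async def run_two():
    # Both calls wait at the same time instead of one after the other.
    return await asyncio.gather(
        PromptNode.execute("a cat"),
        PromptNode.execute("a dog"),
    )


results = asyncio.run(run_two())
print(results)
```

The key point is that the waiting happens inside `await`, so a blocking call such as `time.sleep` or a synchronous HTTP client inside an async execute would give up this benefit.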

API Nodes

If you're building nodes that interact with external services (which I do frequently for Apatero.com integrations), V3 has a dedicated is_api_node flag:

    @classmethod
    def define_schema(cls):
        return io.Schema(
            node_id="MyAPINode",
            display_name="My API Node",
            category="api",
            is_api_node=True,
            inputs=[
                io.String.Input("prompt"),
            ],
            outputs=[
                io.Image.Output(display_name="result"),
            ],
        )

API nodes automatically receive authentication tokens, which eliminates the need to manually handle auth in your node logic. This is a small but important quality-of-life improvement.

Output Nodes with UI Helpers

V3 provides built-in UI helpers through the ui module for common patterns like saving and previewing images. Instead of manually constructing UI response dictionaries (which was error-prone in V1), you can use helper classes:

    @classmethod
    def execute(cls, images, filename_prefix="ComfyUI"):
        # Helper arguments can differ between API versions; check them
        # against the comfy_api version you pin.
        save_results = ui.ImageSaveHelper.save_images(
            images, filename_prefix
        )
        return io.NodeOutput(
            ui=ui.ImageSaveHelper.get_save_images_ui(save_results)
        )

I used to have a 30-line helper function in every node pack that handled image saving and preview generation. Now it's two lines. That alone justified the migration for me.

Dynamic Inputs and ControlAfterGenerate

V3 formalizes dynamic input patterns that were fragile hacks in V1. The control_after_generate parameter for Int and Combo inputs now uses a proper enum:

    io.Int.Input(
        "seed",
        default=0,
        control_after_generate=io.ControlAfterGenerate.RANDOMIZE
    )

In V1, this was a plain boolean with no documentation about what values were valid. The enum makes the intent clear and prevents bugs from typos or incorrect values.

How Do You Build a Complete V3 Node From Scratch?

Let me walk you through building a practical V3 node from the ground up. I'll create an image blending node that takes two images and a blend factor, since it demonstrates multiple inputs, typed parameters, and clean output handling.

    from comfy_api.latest import ComfyExtension, io, ui
    import torch

    class ImageBlender(io.ComfyNode):
        """Blends two images together with adjustable strength."""

        @classmethod
        def define_schema(cls):
            return io.Schema(
                node_id="ImageBlender_v1",
                display_name="Image Blender",
                category="image/composite",
                description="Blend two images together using adjustable strength",
                inputs=[
                    io.Image.Input("image_a"),
                    io.Image.Input("image_b"),
                    io.Float.Input(
                        "blend_factor",
                        default=0.5,
                        min=0.0,
                        max=1.0,
                        step=0.01,
                    ),
                    io.Combo.Input(
                        "blend_mode",
                        options=["normal", "multiply", "screen", "overlay"],
                        default="normal",
                    ),
                    # Masks are [batch, height, width], so use the mask type here.
                    io.Mask.Input("mask", optional=True),
                ],
                outputs=[
                    io.Image.Output(display_name="blended"),
                ],
            )

        @classmethod
        def execute(cls, image_a, image_b, blend_factor=0.5,
                    blend_mode="normal", mask=None):
            if blend_mode == "normal":
                result = image_a * (1.0 - blend_factor) + image_b * blend_factor
            elif blend_mode == "multiply":
                result = image_a * image_b * blend_factor + image_a * (1.0 - blend_factor)
            elif blend_mode == "screen":
                screen = 1.0 - (1.0 - image_a) * (1.0 - image_b)
                result = image_a * (1.0 - blend_factor) + screen * blend_factor
            elif blend_mode == "overlay":
                low = 2.0 * image_a * image_b
                high = 1.0 - 2.0 * (1.0 - image_a) * (1.0 - image_b)
                overlay = torch.where(image_a < 0.5, low, high)
                result = image_a * (1.0 - blend_factor) + overlay * blend_factor
            else:
                result = image_a

            if mask is not None:
                # Add a channel axis so the mask broadcasts over RGB.
                mask = mask.unsqueeze(-1) if mask.dim() == 3 else mask
                result = image_a * (1.0 - mask) + result * mask

            return io.NodeOutput(result.clamp(0.0, 1.0))

    class ImageBlenderExtension(ComfyExtension):
        async def get_node_list(self):
            return [ImageBlender]

    async def comfy_entrypoint():
        return ImageBlenderExtension()

A few things to note about this example. The node_id includes a version suffix (_v1). I started doing this after a painful experience where I changed my node's behavior but kept the same ID, and users with cached workflows got unexpected results. Versioning the ID makes it explicit when behavior changes.

The optional mask input has optional=True and defaults to None in the execute signature. This is the clean way to handle optional inputs in V3. No more checking if keys exist in a dictionary.

The blend modes demonstrate how a Combo input works with string matching in the execute method. In V1, you'd have to use a list literal in the INPUT_TYPES dictionary. In V3, you pass options as a proper parameter.
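If the blend formulas feel abstract, it helps to run them on single pixel values. The sketch below mirrors the four modes from the node using plain floats, so it needs neither torch nor comfy_api. `blend_value` is a hypothetical name for illustration, and unlike the node it raises on an unknown mode instead of returning the first image.

```python
def blend_value(a, b, factor, mode="normal"):
    """Blend two pixel values in [0, 1] with the node's four blend formulas."""
    if mode == "normal":
        mixed = b
    elif mode == "multiply":
        mixed = a * b
    elif mode == "screen":
        mixed = 1.0 - (1.0 - a) * (1.0 - b)
    elif mode == "overlay":
        if a < 0.5:
            mixed = 2.0 * a * b
        else:
            mixed = 1.0 - 2.0 * (1.0 - a) * (1.0 - b)
    else:
        raise ValueError(f"unknown blend mode: {mode}")
    # Linear interpolation between the base value and the blended value.
    result = a * (1.0 - factor) + mixed * factor
    return min(max(result, 0.0), 1.0)


for mode in ("normal", "multiply", "screen", "overlay"):
    print(mode, round(blend_value(0.8, 0.5, 1.0, mode), 4))
```

With a factor of 0 every mode returns the base value unchanged, which is a handy sanity check when you add a new mode.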

How Do You Publish Subgraphs to the Node Library?

This is a feature that many developers overlook, but it's incredibly powerful for sharing reusable workflow components without writing Python code. Starting with ComfyUI version 0.3.63, you can package complex node arrangements into subgraph blueprints and publish them to the node library.

I discovered subgraph publishing by accident. I had a complex face restoration pipeline that I was copying and pasting across multiple workflows. Seven nodes, carefully wired together, with specific settings I'd tuned over weeks of testing. Every time I needed it in a new workflow, I'd open an old one, select the nodes, copy, switch to the new workflow, paste, and then reconnect everything. It was tedious and error-prone.

Then I learned you could select those nodes, click the book icon in the selection toolbox (or use "Add Subgraph to Library"), and turn the whole thing into a reusable blueprint node. Now my face restoration pipeline shows up in the node library under "Subgraph Blueprints," and I can drag it in like any other node. One input for the source image, one output for the restored result, and all seven internal nodes are hidden inside.

Here's how to do it:

- Build your node arrangement in a regular workflow. Get it working exactly the way you want it.

- Select all the nodes you want to package together.

- Define your inputs and outputs by marking which connections should be exposed as the subgraph's external interface.

- Click the publish icon (the book icon) in the selection toolbox, or open the toolbox menu and choose "Add Subgraph to Library."

- Name your subgraph and add a description. Be specific. Future you will thank present you.

After publishing, your subgraph appears under "Node Library" and then "Subgraph Blueprints." You can search for it, drag it onto any workflow, and use it just like a native node. If you need to update it later, you can edit the subgraph internals and the changes propagate to all instances.

Subgraphs also support nesting, which means you can create subgraphs within subgraphs. I use this for building hierarchical workflows where each level of abstraction handles a different concern. My top-level workflow might have a "Generate Character" subgraph that internally contains a "Face Detail" sub-subgraph. It keeps complex workflows manageable without sacrificing flexibility.

For anyone building workflows on Apatero.com and then exporting them to ComfyUI for local customization, subgraphs make the transition much smoother. You can package your tuned pipeline components and reuse them across projects.

How Should You Test V3 Custom Nodes?

Testing is where a lot of custom node developers cut corners, and I get it. When you're excited about a new node idea, writing tests feels like eating your vegetables. But I've learned the hard way that untested nodes lead to support nightmares. I once published a color grading node that worked perfectly with 512x512 images but threw silent errors on non-square images. It took three weeks and 14 GitHub issues before someone pinpointed the bug.

Here's my testing approach for V3 nodes:

Unit Testing the Execute Method

Since execute is a stateless classmethod in V3, it's trivially testable. You don't need to instantiate the node class or set up any ComfyUI infrastructure. Just call the method directly:

    import torch
    import pytest

    # Hypothetical module path; import ImageBlender from wherever your pack defines it.
    from image_blender import ImageBlender

    def test_image_blender_normal():
        white = torch.ones(1, 64, 64, 3)
        black = torch.zeros(1, 64, 64, 3)
        result = ImageBlender.execute(
            image_a=white,
            image_b=black,
            blend_factor=0.5,
            blend_mode="normal",
            mask=None,
        )
        expected = torch.ones(1, 64, 64, 3) * 0.5
        assert torch.allclose(result.args[0], expected, atol=1e-6)

    def test_image_blender_with_mask():
        white = torch.ones(1, 64, 64, 3)
        black = torch.zeros(1, 64, 64, 3)
        # Mask: left half = 1 (blend), right half = 0 (keep original)
        mask = torch.zeros(1, 64, 64)
        mask[:, :, :32] = 1.0
        result = ImageBlender.execute(
            image_a=white,
            image_b=black,
            blend_factor=1.0,
            blend_mode="normal",
            mask=mask,
        )
        # Left half should be black (fully blended)
        assert torch.allclose(result.args[0][:, :, :32, :], black[:, :, :32, :])
        # Right half should be white (masked out)
        assert torch.allclose(result.args[0][:, :, 32:, :], white[:, :, 32:, :])

This is a massive improvement over V1 testing. In V1, you often had to mock the ComfyUI execution context or run your tests inside a running ComfyUI instance. With V3's classmethod pattern, your node logic is just a function that takes inputs and returns outputs. Standard pytest works perfectly.

Schema Validation Testing

You should also test that your schema definition is valid:

    def test_schema_valid():
        schema = ImageBlender.define_schema()
        assert schema.node_id == "ImageBlender_v1"
        assert len(schema.inputs) == 5
        assert len(schema.outputs) == 1
        assert any(
            inp.optional for inp in schema.inputs
            if hasattr(inp, 'optional')
        )

Integration Testing

For full integration testing, I recommend keeping a test workflow JSON that exercises your nodes in ComfyUI's queue prompt API. You can automate this with a simple script:

    import json
    import requests

    def test_node_in_workflow():
        with open("test_workflow.json") as f:
            workflow = json.load(f)
        response = requests.post(
            "http://127.0.0.1:8188/prompt",
            json={"prompt": workflow}
        )
        assert response.status_code == 200
        result = response.json()
        assert "error" not in result

This catches issues that unit tests miss: type mismatches between nodes, incorrect tensor shapes, and compatibility problems with other node packs. I run these integration tests against every ComfyUI update before publishing a new version of my node pack.
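One thing worth keeping in mind is that a successful POST to the prompt endpoint means the workflow was accepted and queued, not that it finished running. When a workflow is rejected, the response body usually says which node was at fault. The sketch below turns a decoded response into readable messages. `summarize_prompt_response` is a hypothetical helper, the two sample payloads are hand-written for illustration, and the assumed response shape (an "error" object, a "node_errors" mapping, a "prompt_id") should be verified against your ComfyUI version.

```python
def summarize_prompt_response(payload):
    """Return (ok, messages) for a decoded /prompt response body.

    Assumed shape (verify against your ComfyUI version): a rejected workflow
    carries an "error" object and/or a non-empty "node_errors" mapping keyed
    by node id; an accepted one carries a "prompt_id".
    """
    messages = []
    error = payload.get("error")
    if error:
        if isinstance(error, dict):
            text = error.get("message", str(error))
        else:
            text = str(error)
        messages.append(f"workflow rejected: {text}")
    for node_id, detail in (payload.get("node_errors") or {}).items():
        messages.append(f"node {node_id}: {detail}")
    if not messages and "prompt_id" not in payload:
        messages.append("response has no prompt_id")
    return (not messages, messages)


# Hand-written sample payloads, not captured from a real server.
accepted = {"prompt_id": "abc123", "number": 1, "node_errors": {}}
rejected = {
    "error": {"message": "Prompt outputs failed validation"},
    "node_errors": {"7": {"errors": ["required input missing"]}},
}

print(summarize_prompt_response(accepted))
for message in summarize_prompt_response(rejected)[1]:
    print(message)
```

In a test you would assert on the first element of the tuple and include the messages in the failure text, so a broken workflow points straight at the offending node.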

For more ComfyUI tips and tricks around workflow management and testing, check out my comprehensive guide.

A solid testing pipeline catches issues before your users do. Unit test the execute method directly, then run integration tests against real workflows.

Common Migration Pitfalls and How to Avoid Them

After migrating my own nodes and watching other developers go through the process, I've noticed the same mistakes coming up repeatedly. Here's what to watch out for.

Pitfall 1: Storing State on the Class

In V1, some developers used __init__ to set up state that persisted between executions. V3 nodes are stateless by design. The execute classmethod receives cls, not self, and there's no instance to store state on.

If you need to cache expensive computations between runs, use the caching API provided on the cls parameter rather than instance variables. The reasoning behind this design is to enable process isolation. Your node might eventually run in a separate Python process from ComfyUI itself, so local state wouldn't survive anyway.

Pitfall 2: Returning Tuples Instead of NodeOutput

V1 nodes returned tuples: return (result,). V3 nodes return io.NodeOutput(result). If you forget this change, you'll get confusing errors because the execution engine expects the new return type.

This one bit me on my first migration because I was doing find-and-replace on the class structure but forgot about the return statements. My node loaded fine but produced no output. Always check your return statements.

Pitfall 3: Forgetting the Async Entrypoint

The comfy_entrypoint function can be declared with or without async, and both work. The get_node_list method inside your extension class, however, must be async. I've seen developers write def get_node_list (without async) and wonder why their nodes don't appear in ComfyUI.

    # Wrong
    class MyExtension(ComfyExtension):
        def get_node_list(self):  # Missing async!
            return [MyNode]

    # Right
    class MyExtension(ComfyExtension):
        async def get_node_list(self):
            return [MyNode]
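You can catch this mistake before ComfyUI ever loads your pack. The sketch below uses plain stand-in classes (they do not subclass ComfyExtension) and a hypothetical `has_async_node_list` check built on the standard library's inspect module. The `load` coroutine is only an assumption about how a host might await the method, not ComfyUI's actual loading code.

```python
import asyncio
import inspect


class BrokenExtension:
    def get_node_list(self):  # plain function: its result cannot be awaited
        return ["MyNode"]


class WorkingExtension:
    async def get_node_list(self):
        return ["MyNode"]


def has_async_node_list(extension_cls):
    """True when get_node_list is declared with async def."""
    return inspect.iscoroutinefunction(extension_cls.get_node_list)


async def load(extension):
    # Stand-in for a loader that awaits the method (assumed behavior).
    return await extension.get_node_list()


print(has_async_node_list(BrokenExtension))
print(has_async_node_list(WorkingExtension))
print(asyncio.run(load(WorkingExtension())))

try:
    asyncio.run(load(BrokenExtension()))
except TypeError as exc:
    # Awaiting a plain list is a TypeError in Python.
    print("TypeError:", exc)
```

Dropping `has_async_node_list` into your unit tests turns a silent "nodes don't appear" problem into a failing assertion.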

Pitfall 4: Not Pinning Your API Version for Published Nodes

During development, using comfy_api.latest is fine. But when you publish your node pack to the Comfy Node Registry, pin to a specific version. The latest import will track API changes, and while the ComfyUI team promises backward compatibility, there's no reason to take that risk with published code.

Pitfall 5: Ignoring the UI Module

V3's ui module provides helpers for common patterns that developers used to implement manually. Image saving, preview generation, and file management all have built-in helpers. Using them means your nodes follow the same patterns as the core nodes, which makes them more reliable and easier to maintain.

Hot Take: V3 Will Split the Custom Node Ecosystem

Here's something nobody's talking about openly, but I think it's inevitable. The V3 migration is going to create a two-tier custom node ecosystem. Actively maintained node packs will migrate and gain access to new features, process isolation, and stability guarantees. Abandoned or hobby-project node packs will stay on V1 and gradually break as ComfyUI evolves.

This isn't necessarily bad. The V1 node ecosystem has a long tail of abandoned packs that haven't been updated in over a year. Some of them are security risks. The V3 migration is a natural filter that separates active development from digital archaeology. But it does mean that if you rely on a V1 node pack that the author has abandoned, you either need to fork it and migrate it yourself, or find an alternative.

My second hot take: within 12 months, the major node managers will start flagging V1-only nodes as "legacy" or "compatibility mode." Users will gravitate toward V3 nodes because they'll be more stable, better tested, and have access to features that V1 nodes simply can't use. If you're a node developer, the migration isn't optional. It's the difference between being relevant and being a footnote.

For workflow builders who'd rather skip the node management headaches entirely, platforms like Apatero.com handle all of this infrastructure complexity. You get the creative power without needing to manage dependencies, versions, or compatibility issues.

Production Tips for V3 Node Development

After shipping V3 nodes in production for several months, I've accumulated practical wisdom that doesn't fit neatly into migration guides. These are the things I wish someone had told me before I started.

Version your node IDs from day one. I mentioned this earlier, but it bears repeating. Once a user saves a workflow with your node, the node_id becomes a permanent contract. If you change behavior, create a new ID. ImageBlender_v1, ImageBlender_v2, and so on. Keep the old versions available for backward compatibility.

Write descriptive schema descriptions. The description field in io.Schema actually shows up in ComfyUI's node search and documentation. A good description saves you support tickets. "Blends two images using configurable blend modes with optional mask support" is far more helpful than "Image blender node."

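Pin the API version in your imports. As far as I can tell, comfy_api ships inside ComfyUI itself rather than as a separately installed package, so the pin belongs in your import line (for example, from comfy_api.v0_0_3 import ComfyExtension, io, ui) rather than in requirements.txt or pyproject.toml. That keeps your pack on a known API surface even as latest moves forward.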

Test with multiple image sizes. I can't stress this enough. The number one bug in custom image nodes is hard-coded assumptions about tensor dimensions. Test with square images, portrait images, landscape images, tiny images, and large images. Your node should handle all of them gracefully.

Provide clear error messages. When your node receives invalid input, raise a descriptive exception rather than letting PyTorch throw a cryptic tensor shape error. Your users will encounter these messages when they wire nodes incorrectly, and a message like "ImageBlender requires both images to have the same dimensions, got 512x512 and 768x1024" is infinitely more helpful than "RuntimeError: The size of tensor a (512) must match the size of tensor b (768) at non-singleton dimension 2."
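Here is a minimal sketch of that kind of check. `check_same_size` is a hypothetical helper that works on shape tuples in the [batch, height, width, channels] layout used by the image tensors in this article, so with torch you would pass `image_a.shape` and `image_b.shape` at the top of execute.

```python
def check_same_size(node_name, shape_a, shape_b):
    """Raise a readable error when two image batches differ in height or width.

    Shapes are expected as [batch, height, width, channels].
    """
    height_a, width_a = shape_a[1], shape_a[2]
    height_b, width_b = shape_b[1], shape_b[2]
    if (height_a, width_a) != (height_b, width_b):
        raise ValueError(
            f"{node_name} requires both images to have the same dimensions, "
            f"got {width_a}x{height_a} and {width_b}x{height_b}"
        )


# Matching sizes pass silently.
check_same_size("ImageBlender", (1, 512, 512, 3), (1, 512, 512, 3))

# Mismatched sizes produce the descriptive message instead of a tensor error.
try:
    check_same_size("ImageBlender", (1, 512, 512, 3), (1, 1024, 768, 3))
except ValueError as exc:
    message = str(exc)
    print(message)
```

Whether to raise or to resize one image to match the other is a design choice; raising keeps the node predictable and leaves resizing to a dedicated node upstream.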

Frequently Asked Questions

Do I have to migrate my V1 nodes to V3 right now?

Not immediately. V1 nodes continue to work in current ComfyUI versions. However, all new features and extensions will only be added to the V3 schema. If you want dynamic inputs, process isolation, or async support, you need V3. I'd recommend starting migration now rather than waiting until V1 support is deprecated.

Can V1 and V3 nodes coexist in the same workflow?

Yes. ComfyUI supports both schemas simultaneously. A workflow can mix V1 and V3 nodes without any issues. The execution engine handles the translation between the two systems transparently. This means you can migrate incrementally, converting nodes one at a time.

How long does migration typically take per node?

For a simple node with a few inputs and outputs, expect 10 to 20 minutes. For complex nodes with dynamic inputs, custom UI, or heavy state management, it could take an hour or more. In my experience, the first node takes the longest because you're learning the patterns. By the third or fourth node, it becomes mostly mechanical.

Will V1 nodes eventually stop working?

The ComfyUI team hasn't announced a hard deprecation date for V1, but the direction is clear. V1 is frozen. No new features, no bug fixes (beyond critical security issues), and eventually, the execution engine will likely drop V1 support. My educated guess is this happens sometime in late 2026 or early 2027, with plenty of advance notice.

How do I handle custom frontend widgets in V3?

V3 makes custom Vue widgets significantly easier. Instead of writing JavaScript that hooks into ComfyUI's internal widget system, you can define widget behavior through the schema. For fully custom widgets, V3 provides a proper extension API that's documented and stable, unlike the V1 approach of reaching into internal DOM manipulation functions.

Can V3 nodes run in separate Python processes?

This is the eventual goal, and it's one of the main reasons V3 enforces stateless execution. Nodes that use only the public API can be isolated in their own Python process, preventing dependency conflicts between node packs. As of early 2026, this feature is still being rolled out incrementally, but the architecture is in place.

What happens to my node's state management in V3?

Since V3 nodes are stateless classmethods, any state you stored on self in V1 needs a different approach. The cls parameter in V3 provides a caching API for storing computed values between executions. For persistent storage beyond a single session, use standard file I/O or a database.

Is there a tool to auto-convert V1 nodes to V3?

There are community-contributed Claude Code skills and scripts that can assist with migration, but fully automated conversion isn't reliable due to the architectural differences (especially around state management). I recommend manual migration with the help of the official V3 migration guide.

How do I test V3 nodes without running ComfyUI?

Since execute is a stateless classmethod, you can import your node class directly and call execute with test inputs in pytest. No ComfyUI instance required for unit testing. For integration testing, you'll want a running instance with the queue prompt API.

Can I publish subgraphs as part of my node pack?

Currently, subgraph blueprints are stored locally in the ComfyUI node library. They're not distributed through the Node Registry like Python-based custom nodes. However, you can share subgraph workflow files that users import and then publish to their local library. I expect the Registry to support subgraph distribution in a future update.

Wrapping Up

The ComfyUI V3 schema isn't just a cleanup. It's a foundation for the next generation of the custom node ecosystem. Versioned APIs, stateless execution, async support, process isolation, and proper type safety are all things that serious development platforms need, and ComfyUI finally has them.

If you're maintaining a custom node pack, start migrating now. The process is more mechanical than creative, and the benefits are immediate: cleaner code, better testability, and access to features that V1 will never get. If you're building new nodes, go straight to V3. There's no reason to start with the legacy system.

And if you're a workflow builder who uses custom nodes but doesn't develop them, pay attention to which node packs migrate to V3. That migration is a signal of active maintenance and long-term reliability. The packs that stay on V1 aren't going anywhere good. Meanwhile, platforms like Apatero.com continue to abstract away these infrastructure concerns, letting you focus on what actually matters: creating great content with AI tools.

The ComfyUI ecosystem is maturing, and V3 is the clearest evidence of that. Build on it.
