ComfyUI Kling 3.0 Motion Control: Advanced Video Generation Workflows

Master Kling 3.0 motion control in ComfyUI v0.16 with camera path control, motion intensity tuning, and object tracking. Complete workflow guide for cinematic AI video generation.

Quick Answer: Kling 3.0 motion control in ComfyUI v0.16 lets you define exact camera paths, set per-region motion intensity, and track specific objects across frames. The new KlingMotionControl and KlingCameraPath nodes give you director-level control over AI video generation, producing cinematic results that rival traditional footage. Setup requires the updated Kling custom node pack, a valid API key, and roughly 10 minutes of configuration.

- Full Camera Control: Kling 3.0 introduces camera path nodes that let you define pan, tilt, zoom, dolly, and orbital movements with keyframe precision

- Motion Intensity Per Region: Apply different motion strengths to foreground subjects, backgrounds, and specific zones within a single clip

- Object Tracking: Lock onto subjects and maintain consistent motion even through complex scene transitions

- ComfyUI v0.16 Required: The motion control nodes depend on the v0.16 API changes, so older ComfyUI versions won't work

- Production-Ready Quality: At 1080p output, Kling 3.0 motion control produces footage clean enough for commercial use without heavy post-processing

I've been generating AI video since the early AnimateDiff days, and I can tell you without hesitation that Kling 3.0's motion control system is the single biggest leap forward I've seen in controllable video generation. When I first loaded the updated nodes into ComfyUI v0.16 last week, I spent about three hours just experimenting with camera paths before I even started building a real workflow. It felt like going from a point-and-shoot camera to a full cinema rig.

This guide covers everything you need to know about setting up Kling 3.0 motion control in ComfyUI, from node installation to advanced cinematic workflows. If you've worked with the Kling 2.5 Turbo setup before, you'll find the foundation familiar, but the new motion control layer adds a whole new dimension of creative possibility.

For creators who want Kling 3.0 results without diving into ComfyUI node graphs, platforms like Apatero.com offer simplified interfaces for modern video models. But if you're the type who wants to control every parameter and build reusable pipelines, ComfyUI remains the best way to get there.

What Makes Kling 3.0 Motion Control Different From Previous Versions?

Previous Kling models gave you a prompt and a prayer. You could describe what you wanted to happen in a scene, but the actual camera movement and motion behavior were largely up to the model's interpretation. Sometimes you'd get a beautiful slow dolly-in. Other times, the camera would randomly shake like it was strapped to a caffeinated squirrel. Consistency was the missing ingredient.

Kling 3.0 changes this fundamentally. Kuaishou rebuilt the motion pipeline from the ground up with what they call "structured motion encoding." Instead of the model guessing at camera behavior from text descriptions, you now specify motion parameters through dedicated control channels that feed directly into the generation process. The model treats your camera instructions as hard constraints, not soft suggestions.

Here's what that means in practical terms:

- Camera Path Control: Define exact pan, tilt, zoom, and orbital movements with start/end keyframes and interpolation curves

- Motion Intensity Mapping: Paint a grayscale mask over your scene to control how much movement occurs in each region

- Object Tracking Anchors: Select subjects in your source image and tell the model to maintain tracking focus throughout the clip

- Temporal Speed Curves: Control the acceleration and deceleration of motion across the clip's duration

- Physics Mode Selection: Choose between realistic physics, stylized motion, or freeform artistic movement

I tested a direct comparison by generating the same scene, a woman walking through a rainy city at night, using Kling 2.5 and Kling 3.0. With 2.5, the camera did a gentle drift that looked decent but felt generic. With 3.0, I set up a tracking dolly that followed the subject at waist height while slowly rotating 15 degrees. The difference was staggering. The 3.0 version looked like it came from a cinematographer with a Steadicam, while the 2.5 version looked like a decent stock clip.

Technical Architecture Changes:

| Feature | Kling 2.5 | Kling 3.0 |
|---|---|---|
| Camera Control | Prompt-guided only | Keyframe path definition |
| Motion Intensity | Global slider | Per-region mask mapping |
| Object Tracking | None | Anchor-point based |
| Max Resolution | 720p native | 1080p native |
| Frame Count | Up to 150 frames | Up to 200 frames |
| Speed Control | None | Temporal curve editor |

How Do You Set Up Kling 3.0 Motion Control Nodes in ComfyUI?

Getting the Kling 3.0 motion control nodes running requires a few specific steps. I'll walk through the exact process I used, including the parts where I stumbled so you can avoid the same mistakes.

Prerequisites

Before you start, make sure you have:

- ComfyUI v0.16 or later (the motion control API endpoints aren't available in older versions)

- A valid Kling API key from Kuaishou's developer portal

- Python 3.10+ with pip accessible from your ComfyUI environment

- At least 8GB of system RAM (the nodes themselves are lightweight since generation happens server-side)

Step 1: Install the Updated Kling Node Pack

The Kling motion control nodes ship as part of the ComfyUI-Kling-v3 custom node package. If you had the older Kling nodes installed, you'll want to remove them first to avoid conflicts.

```bash
cd ComfyUI/custom_nodes
git clone https://github.com/kuaishou/ComfyUI-Kling-v3.git
cd ComfyUI-Kling-v3
pip install -r requirements.txt
```

After installation, restart ComfyUI completely. A simple refresh won't load the new node definitions.

Step 2: Configure API Authentication

Create or update your Kling configuration file:

```json
{
  "api_key": "your-kling-api-key-here",
  "api_endpoint": "https://api.klingai.com/v3",
  "default_model": "kling-3.0-standard",
  "timeout": 120
}
```

Save this as kling_config.json in the ComfyUI-Kling-v3 directory. The node pack reads this on startup.

One thing that tripped me up initially: the v3 API endpoint is different from the v2 endpoint. If you're getting authentication errors, double-check that you're hitting /v3 and not /v2. I wasted about 20 minutes debugging this before I caught the URL difference.
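A small pre-flight check can catch the /v2 versus /v3 mix-up before you restart ComfyUI. The sketch below uses only the Python standard library and assumes the node pack reads the four keys shown in the kling_config.json example; `check_kling_config` and `check_config_file` are hypothetical helpers, not part of ComfyUI-Kling-v3.

```python
import json
from pathlib import Path

# Keys from the example kling_config.json; the node pack may accept more.
REQUIRED_KEYS = ("api_key", "api_endpoint", "default_model", "timeout")
PLACEHOLDER_KEY = "your-kling-api-key-here"


def check_kling_config(config: dict) -> list[str]:
    """Return human-readable problems with a config dict (empty list if none)."""
    problems = [f"missing key: {key}" for key in REQUIRED_KEYS if key not in config]

    endpoint = str(config.get("api_endpoint", ""))
    # This guide reports /v2 URLs surfacing as authentication errors.
    if endpoint and not endpoint.rstrip("/").endswith("/v3"):
        problems.append(f"api_endpoint should end in /v3, got {endpoint!r}")

    if config.get("api_key") == PLACEHOLDER_KEY:
        problems.append("api_key is still the placeholder value")

    timeout = config.get("timeout")
    if timeout is not None and (not isinstance(timeout, (int, float)) or timeout <= 0):
        problems.append("timeout must be a positive number of seconds")
    return problems


def check_config_file(path: Path) -> list[str]:
    """Load kling_config.json from disk and check its contents."""
    with path.open(encoding="utf-8") as fh:
        return check_kling_config(json.load(fh))


if __name__ == "__main__":
    cfg = Path("ComfyUI/custom_nodes/ComfyUI-Kling-v3/kling_config.json")
    if not cfg.exists():
        print(f"not found: {cfg}")
    else:
        for problem in check_config_file(cfg) or ["no problems found"]:
            print(problem)
```

Run it from the directory that contains your ComfyUI folder, or change the path to wherever you saved the file.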

Step 3: Verify Node Registration

Launch ComfyUI and right-click the canvas. You should see these new nodes under the "Kling 3.0" category:

- KlingMotionControl - The primary motion settings node

- KlingCameraPath - Camera movement definition

- KlingMotionMask - Per-region motion intensity

- KlingObjectTracker - Subject tracking anchor setup

- KlingVideoGenerate - Updated generation node with motion control inputs

- KlingSpeedCurve - Temporal acceleration control

If any nodes are missing, check the ComfyUI console output for import errors. The most common issue is a missing dependency from the requirements.txt install.

The complete Kling 3.0 motion control node graph in ComfyUI, showing camera path, motion mask, and generation nodes connected.

How Do Camera Path Controls Actually Work?

This is where Kling 3.0 gets genuinely exciting. The KlingCameraPath node lets you define camera movements using a coordinate system that maps directly to real cinematography terms. If you've ever used a 3D viewport or video editing timeline, the concepts will feel intuitive.

Camera Movement Types

The KlingCameraPath node supports six core movement types, and you can combine multiple movements in a single clip:

Pan (Horizontal Rotation): The camera rotates left or right on its vertical axis, like turning your head. Values are in degrees, negative for left and positive for right. Moving from -30 to 30 creates a smooth 60-degree pan across the scene.

Tilt (Vertical Rotation): The camera angles up or down. Great for reveal shots where you start looking at the ground and tilt up to show a building or landscape. Typical values range from -45 to 45 degrees.

Zoom: Adjusts the focal length during the clip. Unlike a dolly, zoom doesn't move the camera, so it magnifies or widens the view without changing how near and far objects relate in perspective. A zoom value of 1.0 to 2.0 creates a slow push-in effect. Be careful going above 2.5x, as it starts to look unnatural and can cause generation artifacts.

Dolly (Forward/Backward Movement): The camera physically moves through the scene, maintaining natural perspective. This is the most demanding movement type for the model, but Kling 3.0 handles it remarkably well. Dolly values are specified as relative distance units from -1.0 (pull back) to 1.0 (push forward).

Truck (Lateral Movement): Moves the camera sideways, parallel to the scene. Perfect for following a walking subject or revealing new elements entering from the side.

Orbital: The camera circles around a central point. This one's a showstopper for product videos and character reveals. You specify the arc in degrees and the model generates a smooth orbital movement. I've found that 90-degree orbits produce the most reliable results, while full 360-degree orbits sometimes introduce consistency issues around the 270-degree mark.
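If you script your camera paths, the rules of thumb above can double as a sanity check before you spend credits. This is a minimal sketch: the `CameraMove` structure and axis names are hypothetical, and the limits are this section's guidance (tilt within ±45 degrees, zoom at or below 2.5x, dolly within -1.0 to 1.0, orbits of about 90 degrees for reliability), not limits published for the KlingCameraPath node.

```python
from dataclasses import dataclass


@dataclass
class CameraMove:
    """Start and end values for one movement axis (hypothetical representation)."""
    axis: str      # "pan", "tilt", "zoom", "dolly", "truck", or "orbit"
    start: float
    end: float


def path_warnings(moves: list[CameraMove]) -> list[str]:
    """Return warnings for moves that exceed this guide's rules of thumb."""
    warnings = []
    for m in moves:
        extreme = max(abs(m.start), abs(m.end))
        arc = abs(m.end - m.start)
        if m.axis == "tilt" and extreme > 45:
            warnings.append("tilt beyond ±45 degrees is outside the typical range")
        elif m.axis == "zoom" and max(m.start, m.end) > 2.5:
            warnings.append("zoom above 2.5x tends to look unnatural and cause artifacts")
        elif m.axis == "dolly" and extreme > 1.0:
            warnings.append("dolly values are expected between -1.0 and 1.0")
        elif m.axis == "orbit" and arc > 90:
            warnings.append(f"{arc:.0f}-degree orbit: arcs past 90 degrees are less reliable")
    return warnings


safe = [CameraMove("dolly", 0.0, 0.15), CameraMove("pan", 0, 5)]
risky = [CameraMove("zoom", 1.0, 3.0), CameraMove("orbit", 0, 360)]
print(path_warnings(safe))   # []
print(path_warnings(risky))
```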

Setting Up a Camera Path

Here's a practical example of creating a cinematic dolly-zoom (the "Vertigo effect"):

KlingCameraPath Settings:

```
movement_type: "combined"
dolly_start: 0.0
dolly_end: 0.8
zoom_start: 1.0
zoom_end: 0.5
duration_frames: 120
interpolation: "ease_in_out"
```

This pushes the camera forward while simultaneously zooming out, creating that classic unsettling perspective shift. I used this on a corridor shot and the result genuinely gave me chills. The model understood the intent and kept the subject's size consistent while warping the background perspective, exactly like the real technique.
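Why the two movements cancel out for the subject follows from a simple pinhole-camera model: a subject's on-screen size is proportional to focal length divided by camera-to-subject distance, so keeping that ratio constant keeps the subject the same size. The sketch assumes dolly values are a fraction of the starting camera-to-subject distance; this guide doesn't say how Kling's "relative distance units" map to real distance, so treat the numbers as illustrative and the helper name as hypothetical.

```python
def dolly_zoom_factor(dolly_fraction: float) -> float:
    """Zoom multiplier that keeps the subject's on-screen size constant.

    Pinhole model: on-screen size is proportional to focal_length / distance.
    Assumes the camera starts at distance d0 and a push-in of `dolly_fraction`
    leaves it at d0 * (1 - dolly_fraction), so the focal length (zoom) must
    shrink by the same ratio.
    """
    if not 0.0 <= dolly_fraction < 1.0:
        raise ValueError("dolly_fraction must be in [0, 1) for a push-in")
    return 1.0 - dolly_fraction


for d in (0.0, 0.25, 0.5):
    print(f"dolly {d:.2f} -> zoom {dolly_zoom_factor(d):.2f}")
```

The background sits much farther away than the subject, so the same push-in barely changes its distance while the zoom still pulls out. The background therefore appears to recede and widen around a subject that stays put, which is the stretch the effect is known for.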

Interpolation Curves

The interpolation parameter controls how the camera transitions between start and end positions:

- linear - Constant speed throughout. Good for surveillance-style or documentary looks.

- ease_in - Starts slow, accelerates. Creates a sense of building momentum.

- ease_out - Starts fast, decelerates. Feels like arriving at a destination.

- ease_in_out - Slow start and end with faster middle. The most cinematic and natural-feeling option. This is my default for almost everything.

- bezier - Custom control curve with handles. Maximum flexibility but requires more tweaking.
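To make the differences concrete, here are standard easing functions of the kind these options usually correspond to. This is a sketch under assumptions: the guide doesn't document Kling's exact curve shapes, so the quadratic ease_in/ease_out, the smoothstep ease_in_out, and the simplified one-dimensional `bezier` (control values only, no time handles) are stand-ins, and `sample_path` is a hypothetical helper for turning a start/end pair into per-frame values.

```python
from typing import Callable

Curve = Callable[[float], float]  # maps progress t in [0, 1] to eased progress


def linear(t: float) -> float:
    return t


def ease_in(t: float) -> float:
    # Quadratic ease-in: starts slow and accelerates.
    return t * t


def ease_out(t: float) -> float:
    # Quadratic ease-out: starts fast and decelerates.
    return 1.0 - (1.0 - t) ** 2


def ease_in_out(t: float) -> float:
    # Smoothstep: zero speed at both ends, fastest at the midpoint.
    return t * t * (3.0 - 2.0 * t)


def bezier(p1: float, p2: float) -> Curve:
    """Simplified 1-D cubic Bezier from 0 to 1 with control values p1 and p2."""
    def curve(t: float) -> float:
        u = 1.0 - t
        return 3 * u * u * t * p1 + 3 * u * t * t * p2 + t ** 3
    return curve


def sample_path(start: float, end: float, frames: int, curve: Curve) -> list[float]:
    """Per-frame values from start to end (both inclusive) along an easing curve."""
    if frames <= 0:
        return []
    if frames == 1:
        return [start]
    return [start + (end - start) * curve(i / (frames - 1)) for i in range(frames)]


# A 5-degree pan over 120 frames, checked about a quarter of the way in.
for name, fn in (("linear", linear), ("ease_in_out", ease_in_out)):
    pan = sample_path(0.0, 5.0, 120, fn)
    print(f"{name:12s} frame 30: {pan[30]:.2f} degrees")
```

At frame 30 the ease_in_out camera has covered noticeably less of the pan than the linear one, which is the gentle start that keeps the motion from feeling robotic.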

Hot take: ease_in_out should be the default for every camera movement in every AI video tool, not linear. Linear camera movements look robotic and immediately signal "this is CG" to viewers. The fact that most AI video tools default to linear motion is one of the main reasons their output looks artificial.

How Should You Configure Motion Intensity for Realistic Results?

Motion intensity is the parameter that separates amateur-looking AI video from professional results. Too much motion and your subjects warp into abstract art. Too little and you've basically generated a static image with a slight wobble. Kling 3.0's per-region motion control finally solves this balancing act.

The Motion Mask System

The KlingMotionMask node accepts a grayscale image that maps motion intensity across your scene. White areas receive full motion, black areas stay static, and gray values create proportional movement. Think of it like a motion heat map.

This is genuinely powerful for real-world scenarios. Consider a scene of a person standing on a windy hilltop. You want:

- The person's body to have moderate, natural movement (breathing, slight sway)

- Their hair and clothes to have high motion intensity (wind effects)

- The distant mountains to stay nearly static

- The sky and clouds to have gentle movement

- Grass in the foreground to wave actively

Without regional control, you'd get either a stiff person with dead clouds, or dynamic clouds with a person who's weirdly wobbling. With Kling 3.0's mask, you paint exactly the motion profile you want.

Creating Effective Motion Masks

You can create motion masks several ways within ComfyUI:

- Manual painting - Use an external editor to create a grayscale mask matching your scene layout

- Segmentation-based - Use SAM or GroundingDINO nodes to segment your source image, then assign different gray values to each segment

- Depth-based - Use a depth estimation node and remap the depth map to motion intensity (farther objects get less motion, closer objects get more)

- Gradient masks - Simple top-to-bottom or center-to-edge gradients work surprisingly well for landscape and environmental shots
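The depth-based and gradient approaches are simple remaps, sketched here without any image library. Masks are plain nested lists of 0-255 values and the function names are hypothetical; in a real graph you'd apply the same arithmetic to a depth node's output. `depth_to_motion` assumes larger depth values mean farther away; some depth estimators output relative inverse depth (larger means closer), so pass `invert=True` for those. The defaults of 180 (about 70%) near and 20 (about 8%) far are chosen to sit inside the intensity ranges recommended later in this section.

```python
def gradient_mask(width: int, height: int, top: int, bottom: int) -> list[list[int]]:
    """Vertical gradient from `top` (first row) to `bottom` (last row), 0-255."""
    rows = []
    for y in range(height):
        t = y / (height - 1) if height > 1 else 0.0
        rows.append([round(top + (bottom - top) * t)] * width)
    return rows


def depth_to_motion(depth: list[list[float]], near_value: int = 180,
                    far_value: int = 20, invert: bool = False) -> list[list[int]]:
    """Remap a depth map so near pixels get more motion and far pixels less.

    Assumes larger depth = farther unless invert=True. Output is clamped to 0-255.
    """
    flat = [v for row in depth for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a flat depth map
    out = []
    for row in depth:
        new_row = []
        for v in row:
            nearness = (hi - v) / span  # 1.0 = nearest pixel, 0.0 = farthest
            if invert:
                nearness = 1.0 - nearness
            value = far_value + (near_value - far_value) * nearness
            new_row.append(max(0, min(255, round(value))))
        out.append(new_row)
    return out


# Landscape: gentle sky motion at the top, livelier foreground at the bottom.
landscape = gradient_mask(8, 6, top=50, bottom=140)
print(landscape[0][0], landscape[-1][0])
print(depth_to_motion([[1.0, 5.0, 10.0]]))
```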

My favorite approach combines segmentation with manual tweaking. I'll use SAM to get clean masks of the main subject, background, and any key elements, then adjust the gray values manually until the motion balance feels right. It typically takes 2-3 iterations to nail the intensity mapping for a new scene type, but once you've found settings that work for a particular style, you can reuse those mask templates across similar shots.

Motion Intensity Values Guide

Here are the intensity ranges I've found work best across dozens of test generations:

| Scene Element | Recommended Intensity | Mask Value (0-255) |
|---|---|---|
| Static background (buildings, mountains) | 0-10% | 0-25 |
| Slow ambient motion (clouds, water) | 15-30% | 38-76 |
| Natural body movement (breathing, stance) | 25-40% | 64-102 |
| Active movement (walking, gesturing) | 40-65% | 102-166 |
| High energy (hair, fabric, particles) | 60-85% | 153-217 |
| Maximum motion (explosions, fast action) | 80-100% | 204-255 |
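The two right-hand columns are related by mask value ≈ intensity% × 255 / 100. A small helper makes that conversion explicit and bakes in the 90% ceiling recommended in the next paragraph; the function names and the `cap_percent` default are illustrative choices, not KlingMotionMask parameters.

```python
def percent_to_mask(percent: float, cap_percent: float = 90.0) -> int:
    """Convert a motion intensity percentage to an 8-bit mask value.

    Values above cap_percent are clamped, following the advice to keep regions
    under ~90% unless chaos is intended. Rounds half up to the nearest integer.
    """
    percent = max(0.0, min(percent, cap_percent))
    return int(percent * 255 / 100 + 0.5)


def mask_to_percent(value: int) -> float:
    """Convert an 8-bit mask value back to a motion intensity percentage."""
    if not 0 <= value <= 255:
        raise ValueError("mask values must be in 0-255")
    return value * 100 / 255


# Windy hilltop scene from earlier in this section (percentages illustrative).
hilltop = {"mountains": 5, "sky": 20, "body": 30, "grass": 55, "hair_and_clothes": 72}
print({region: percent_to_mask(p) for region, p in hilltop.items()})
```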

A word of caution: avoid setting any region above 90% intensity unless you're going for an intentionally chaotic look. In my testing, values above 90% frequently produced frame tearing and subject deformation. The sweet spot for most scenes is keeping your highest-motion elements around 70-75%.

A motion intensity mask applied to a portrait scene. The subject's face and torso receive low-medium intensity (dark gray) while hair and clothing edges receive high intensity (light gray). Background stays nearly black for minimal movement.

Building a Complete Cinematic Video Workflow

Let me walk through the complete workflow I use for producing cinematic video clips with Kling 3.0 in ComfyUI. This isn't a toy setup. I use this pipeline for actual client work and content production at Apatero.com.

The Node Graph Architecture

A production Kling 3.0 motion control workflow in ComfyUI typically has five main sections:

1. Source Image Pipeline

Your workflow starts with the source image that defines the scene. This can be:

- A generated image from SDXL, Flux, or another image model within ComfyUI

- A loaded photograph or illustration

- A frame from an existing video that you want to reanimate

The source image quality matters enormously. Kling 3.0 is remarkably good at maintaining detail from the input, so feeding it a sharp, well-composed 1080p image produces dramatically better results than an upscaled 512px generation. I learned this the hard way after spending two hours tweaking motion parameters, only to realize my muddy source image was the real problem.

2. Camera Path Definition

Connect a KlingCameraPath node with your desired movements. For cinematic work, I almost always use the "combined" movement type with subtle dolly plus a gentle pan or tilt. Pure static shots are boring, and dramatic camera movements risk generation artifacts.

My go-to "safe cinematic" camera settings:

```
dolly_start: 0.0
dolly_end: 0.15
pan_start: 0
pan_end: 5
interpolation: ease_in_out
```

This gives you just enough camera movement to feel alive without pushing the model into artifact territory. Think of it as the camera equivalent of someone very gently leaning forward while slightly turning their head.

3. Motion Control Layer

The KlingMotionControl node sits between your source and the generator. It accepts:

- The motion mask from KlingMotionMask

- Global motion intensity (a multiplier that scales the mask values)

- Physics mode selection (realistic, stylized, or freeform)

- Temporal consistency strength (how aggressively the model enforces frame-to-frame coherence)

For the temporal consistency parameter, I keep it between 0.7 and 0.85 for most work. Below 0.7, you start seeing flicker and inconsistency between frames. Above 0.85, the video can look overly smooth and lose natural micro-movements that make footage feel alive.

4. Object Tracking (Optional but Powerful)

If your scene has a clear subject, adding a KlingObjectTracker node dramatically improves results. You define tracking anchors on your source image, essentially telling the model "this is the important thing, keep it stable and in focus."

I use object tracking for every portrait and character shot. The difference is night and day. Without tracking, subjects can drift, warp, or subtly change proportions during camera movements. With tracking anchors set on the face and torso, the subject stays rock-solid while the camera moves around them. It's the closest thing to real motion capture I've seen in an AI video tool.

5. Generation and Output

The KlingVideoGenerate node handles the actual API call. Key parameters:

```
model: "kling-3.0-standard"  # or "kling-3.0-pro" for highest quality
frames: 120                  # 5 seconds at 24fps
fps: 24
resolution: "1080p"
seed: -1                     # random, or set for reproducibility
quality_boost: true          # adds processing time but improves detail
```

A standard 120-frame generation at 1080p takes approximately 90-120 seconds through the API. The "pro" model takes roughly twice as long but produces noticeably sharper detail and better physics simulation. For client work, I always use pro. For rapid iteration and testing, standard is perfectly fine.

Workflow Optimization Tips

After building dozens of these workflows, here's what I've learned about getting consistently good results:

Start with static, then add motion. Always verify your source image looks correct before connecting motion nodes. If the source is wrong, no amount of motion control will save it.

Iterate on camera paths at low frame counts. Set frames to 30 (1.25 seconds at 24fps) while you're dialing in camera movements. Once the motion feels right, bump up to your target frame count. This saves both time and API credits.

Keep a library of motion masks. I have template masks saved for common scene types: portraits, landscapes, action shots, product videos. Creating masks from scratch every time is tedious. Build your library and you'll cut workflow setup time by 60% or more.

Use the seed parameter for A/B testing. When comparing two different camera paths, use the same seed so the only variable is the camera movement. This makes it much easier to evaluate which motion approach works better for a given scene.
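Holding the seed fixed while changing one setting is easy to automate when you queue several runs. This sketch only builds the parameter sets; the flat dictionary mixes keys from the KlingVideoGenerate and KlingCameraPath settings shown in this guide for illustration, submitting the runs (ComfyUI queue or API script) is left out, and `one_factor_variations` is a hypothetical helper.

```python
import copy


def one_factor_variations(base: dict, param: str, values: list) -> list[dict]:
    """Copies of `base` that differ only in `param`, with the seed pinned.

    A seed of -1 means random in the generator settings, so it is rejected:
    a random seed would add a second variable to the comparison.
    """
    if base.get("seed", -1) == -1:
        raise ValueError("set a fixed seed before A/B testing")
    variants = []
    for value in values:
        variant = copy.deepcopy(base)
        variant[param] = value
        variants.append(variant)
    return variants


base_settings = {
    "model": "kling-3.0-standard",
    "frames": 30,        # short clips while dialing in motion
    "seed": 1234,        # fixed so only the camera setting changes
    "interpolation": "ease_in_out",
    "pan_end": 5,
}
for run in one_factor_variations(base_settings, "interpolation", ["linear", "ease_in_out"]):
    print(run["seed"], run["interpolation"])
```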

If you're building workflows that combine Kling 3.0 with other video generation techniques, check out the ComfyUI animation workflow guide for strategies on integrating multiple video pipelines in a single graph.

Advanced Techniques: Object Tracking and Subject Locking

Object tracking in Kling 3.0 deserves its own deep dive because it's genuinely a game-changer for character-driven video. If you've struggled with AI video making your subjects look inconsistent across frames, this feature alone justifies the upgrade.

How Object Tracking Works

The KlingObjectTracker node lets you place tracking anchors on your source image. These anchors tell the model which areas represent important subjects that should maintain spatial consistency throughout the generation. You can set up to 8 tracking anchors per scene, though I rarely use more than 3-4.

Each anchor has these properties:

- Position: X,Y coordinates on the source image

- Tracking strength: How aggressively the model locks onto this point (0.0 to 1.0)

- Region radius: How much area around the anchor point is included in tracking

- Priority: When multiple anchors compete for the model's attention, higher priority wins

Practical Tracking Setups

Portrait/Talking Head: Place one anchor on the face center (strength 0.9), one on the chest/torso (strength 0.7). This keeps facial features stable and prevents the common "melting face" artifact during camera movements. I've generated hundreds of portrait videos with this setup and the consistency is remarkable.

Walking Character: Three anchors: face (0.85), hip center (0.75), feet area (0.6). The hip anchor prevents the torso from warping during stride motion, while the lower foot anchor strength allows natural leg movement without breaking tracking.

Product Showcase: Two anchors on opposite edges of the product (both at 0.9 strength). This keeps the product's proportions locked during orbital camera movements. I used this for a client's jewelry showcase and the pieces stayed dimensionally accurate through a full 180-degree orbit.
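These setups translate naturally into reusable data. The sketch below models an anchor with the four properties listed earlier and enforces the 8-anchor limit. The field names, normalized 0-1 coordinates, radius values, and priority ordering are assumptions for illustration, not the KlingObjectTracker node's actual input format; only the strengths come from the setups above, and the positions are placeholders to replace with points from your own source image.

```python
from dataclasses import dataclass

MAX_ANCHORS = 8  # per-scene limit stated in this guide


@dataclass(frozen=True)
class TrackingAnchor:
    name: str
    x: float          # normalized 0-1 across the source image (assumption)
    y: float          # normalized 0-1 down the source image (assumption)
    strength: float   # 0.0-1.0: how tightly the model locks onto this point
    radius: float     # normalized region size around the point (assumption)
    priority: int     # higher wins when anchors compete

    def __post_init__(self):
        if not 0.0 <= self.strength <= 1.0:
            raise ValueError(f"{self.name}: strength must be in [0, 1]")
        if not (0.0 <= self.x <= 1.0 and 0.0 <= self.y <= 1.0):
            raise ValueError(f"{self.name}: coordinates must be normalized")


def validate(anchors: list[TrackingAnchor]) -> list[TrackingAnchor]:
    """Check the anchor count and return anchors sorted by priority, highest first."""
    if len(anchors) > MAX_ANCHORS:
        raise ValueError(f"at most {MAX_ANCHORS} anchors per scene")
    return sorted(anchors, key=lambda a: a.priority, reverse=True)


# Strengths from the setups above; positions and radii are placeholders.
PRESETS = {
    "portrait": [
        TrackingAnchor("face", 0.50, 0.30, 0.9, 0.12, priority=2),
        TrackingAnchor("torso", 0.50, 0.65, 0.7, 0.20, priority=1),
    ],
    "walking": [
        TrackingAnchor("face", 0.50, 0.15, 0.85, 0.08, priority=3),
        TrackingAnchor("hips", 0.50, 0.55, 0.75, 0.12, priority=2),
        TrackingAnchor("feet", 0.50, 0.92, 0.6, 0.10, priority=1),
    ],
    "product": [
        TrackingAnchor("left_edge", 0.35, 0.50, 0.9, 0.10, priority=1),
        TrackingAnchor("right_edge", 0.65, 0.50, 0.9, 0.10, priority=1),
    ],
}

for name, anchors in PRESETS.items():
    print(name, [a.name for a in validate(anchors)])
```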

Here's my hot take on object tracking: this feature is going to make dedicated video editing software unnecessary for a huge category of short-form content. When you can generate a 5-second clip with precise camera work and rock-solid subject tracking, the gap between "AI generated clip" and "professionally shot footage" shrinks to almost nothing. I think within a year, most social media video content will be either AI-generated or AI-enhanced, and tools like Kling 3.0's tracking system are the reason why.

What Are the Best Creative Workflows for Different Video Types?

Not every video needs the same approach. Over the past few weeks, I've developed specific workflow presets for different content categories. Here's what works best.

Cinematic Establishing Shots

These are the "hero shots" that open a scene or set a mood. Think sweeping landscapes, city skylines at golden hour, or atmospheric interior reveals.

Best camera path: Slow dolly forward (0.0 to 0.2) combined with a gentle tilt up (0 to 8 degrees). Use ease_in interpolation so the motion starts barely perceptible and gradually becomes noticeable. Duration: 150-200 frames for that epic, lingering feel.

Motion mask approach: Keep the entire frame at low intensity (15-25%) with slightly higher values for atmospheric elements like clouds, water, or particle effects. The subtlety is what makes establishing shots work. You want the viewer to feel movement, not see it.

Character Portraits and Close-ups

For AI influencer content and character-driven video, stability is king. Your audience will immediately notice if a face warps or proportions shift.

Best camera path: Minimal movement. A very subtle push-in (dolly 0.0 to 0.08) works wonders. Avoid panning on close-ups since it can cause facial distortion on the edges of the frame. If you need rotation, keep it under 3 degrees.

Motion mask approach: Face and upper body at 20-30% intensity. Hair and clothing at 50-60%. Background at 10-15%. Enable object tracking with a face anchor at 0.9 strength. This creates those beautiful "living portrait" videos where the subject has subtle natural movement while remaining stable and recognizable.

Action and Dynamic Scenes

Fast motion, dramatic camera work, and high energy. These are the most demanding but also the most visually impressive when done right.

Best camera path: Don't be afraid to push the parameters here. A tracking truck movement (lateral -0.5 to 0.5) following an action subject creates dynamic energy. Combine with a slight tilt or zoom for added intensity. Use linear or ease_in interpolation for an urgent, kinetic feel.

Motion mask approach: Foreground action elements at 70-80% intensity. Background at 30-40%. Don't go above 85% anywhere since it almost always produces artifacts. Use object tracking anchors on your primary subject to prevent them from smearing during fast camera movements.

Product and Commercial Video

Clean, controlled, and precise. Product video leaves no room for artifacts or inconsistency since your client will scrutinize every frame.

Best camera path: Orbital movements (30-90 degrees) are perfect for product showcases. Combine with a subtle zoom (1.0 to 1.2) during the orbit for added production value. Always use ease_in_out interpolation for that premium, smooth feel. When generating for Apatero.com product demos, I'll typically create 3-4 orbital angles of the same product and cut between them.

Motion mask approach: Product at 10-15% intensity (just enough for realistic micro-movement, no more). Background at 5-10%. If there are environmental elements like steam, particles, or reflections, those can go higher at 30-50%.

Orbital camera path visualization for a product showcase. The camera circles the subject at 45 degrees while maintaining tracking focus on the product center.

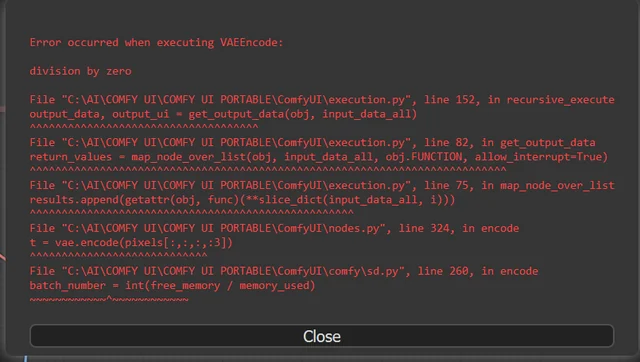

Troubleshooting Common Kling 3.0 Motion Control Issues

Even with a well-configured workflow, you'll hit issues. Here are the problems I've encountered most frequently and how to solve them.

Subject Warping During Camera Movement

Symptom: Your subject stretches, deforms, or shifts proportions when the camera moves.

Fix: Reduce camera movement magnitude by 50% and add object tracking anchors. If you're panning more than 15 degrees, you're probably pushing too hard. Also check your motion mask. If the subject region has too high an intensity value combined with camera movement, you get compounding deformation. Drop subject intensity to 20-25%.

Frame Tearing and Flickering

Symptom: Visible seams, inconsistent lighting between frames, or strobing effects.

Fix: Increase the temporal consistency parameter to 0.8-0.85. If flickering persists, reduce your total frame count. Kling 3.0 handles 120 frames reliably, but longer generations (150+) can introduce temporal drift. For clips longer than 5 seconds, consider generating two shorter clips and stitching them in post.
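Splitting a long shot into reliable-length pieces is simple arithmetic. This sketch plans segment lengths at 24fps using 120 frames as the reliable ceiling from the paragraph above and 200 frames as the per-generation maximum this guide cites; `plan_segments` is a hypothetical helper and doesn't call the API.

```python
import math

FPS = 24
RELIABLE_FRAMES = 120    # about 5 s; longer runs risk temporal drift
HARD_LIMIT_FRAMES = 200  # per-generation maximum for Kling 3.0


def plan_segments(total_seconds: float, fps: int = FPS,
                  max_frames: int = RELIABLE_FRAMES) -> list[int]:
    """Split a shot into near-equal segments of at most max_frames frames."""
    if max_frames > HARD_LIMIT_FRAMES:
        raise ValueError("segments can't exceed the per-generation frame limit")
    total_frames = math.ceil(total_seconds * fps)
    if total_frames <= 0:
        return []
    count = math.ceil(total_frames / max_frames)
    base, extra = divmod(total_frames, count)
    # Give leftover frames to the first segments so lengths differ by at most one.
    return [base + 1 if i < extra else base for i in range(count)]


for i, frames in enumerate(plan_segments(12), start=1):
    print(f"segment {i}: {frames} frames ({frames / FPS:.2f} s)")
```

If each segment after the first starts from the previous segment's last frame, that frame appears twice in the stitched result, so you may want to drop one copy at each cut.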

API Timeout Errors

Symptom: Generation fails with a timeout error, especially on high-resolution or long-duration clips.

Fix: Update the timeout value in your kling_config.json to 180 or even 240 seconds. Pro model generations at 1080p with 150+ frames can exceed the default 120-second timeout. Also check your internet connection stability since large uploads to the API can fail on unreliable connections.

Motion Mask Not Applying Correctly

Symptom: Motion appears uniform across the frame despite having a detailed mask.

Fix: Verify your mask dimensions match your source image exactly. A mismatched mask gets resized and can lose detail. Also confirm the mask is actually connected to the KlingMotionControl node's mask input (not the source image input, which I've accidentally done more than once). Check that the mask is single-channel grayscale, as RGB masks get averaged and produce unexpected results.
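Both checks are easy to automate before a run. The sketch below works on a mask held as rows of pixels (integers for grayscale, `(r, g, b)` tuples for color), raises on a size mismatch, and averages RGB channels the way this section says the node does; `normalize_mask` is a hypothetical helper. If you convert with Pillow instead, note that `Image.convert("L")` uses weighted luma (roughly 0.299 R + 0.587 G + 0.114 B) rather than a plain average, which can shift gray values slightly.

```python
from typing import Sequence, Union

Pixel = Union[int, Sequence[int]]  # grayscale int or (r, g, b[, a]) tuple


def normalize_mask(mask: list[list[Pixel]], source_width: int,
                   source_height: int) -> list[list[int]]:
    """Return a single-channel 0-255 mask, or raise if dimensions don't match."""
    height = len(mask)
    width = len(mask[0]) if height else 0
    if (width, height) != (source_width, source_height):
        raise ValueError(
            f"mask is {width}x{height}, source is {source_width}x{source_height}; "
            "a resized mask can lose detail"
        )
    out = []
    for row in mask:
        if len(row) != width:
            raise ValueError("mask rows have inconsistent lengths")
        new_row = []
        for px in row:
            if isinstance(px, int):
                value = px
            else:
                # Plain average of the color channels (any alpha channel is ignored).
                value = round(sum(px[:3]) / 3)
            new_row.append(max(0, min(255, value)))
        out.append(new_row)
    return out


print(normalize_mask([[(255, 0, 0), (30, 60, 90)]], 2, 1))
```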

Unexpected Camera Behavior

Symptom: Camera moves in a direction you didn't specify, or moves at the wrong speed.

Fix: Check for conflicting camera settings. If you've connected multiple KlingCameraPath nodes in series, the values stack rather than override. Disconnect all but one path node and verify the behavior, then add complexity gradually. Also watch for negative value confusion. In the pan parameter, negative means left and positive means right. I've mixed this up more times than I'd like to admit.

Kling 3.0 vs Other Motion Control Solutions

It's worth putting Kling 3.0's motion control capabilities in context. Several other platforms now offer some form of camera or motion control, but the implementations vary dramatically.

Runway Gen-3 Alpha Turbo has "Motion Brush" which lets you paint motion directions onto regions of the frame. It's more intuitive for beginners but less precise than Kling's approach. You can't define exact camera paths or set numerical motion values, so reproducibility is limited.

Pika 2.0 introduced camera control presets (orbit, zoom, pan) but without keyframe-level customization. You pick a preset and adjust the intensity. Fine for social content, insufficient for production work.

Stable Video Diffusion with AnimateDiff offers camera control through ControlNet adapters, but the quality ceiling is significantly lower than Kling 3.0 and setup complexity is much higher for comparable results.

Sora (OpenAI) has impressive motion understanding but offers essentially zero user control over camera behavior at the time of writing. You describe what you want in text and hope for the best. For creative direction, that's a non-starter.

My honest assessment: Kling 3.0 currently offers the best balance of control precision, output quality, and practical usability for anyone willing to invest in learning the ComfyUI workflow. The only tool that approaches it in quality is Runway Gen-3 Alpha, but Runway's lack of precise numerical camera control keeps it in the "creative tool" category rather than the "production tool" category where Kling 3.0 now sits. For those exploring AI video on Apatero.com, we've been integrating Kling 3.0 into our pipeline and the results speak for themselves.

Cost and Performance Considerations

Real talk about money, because AI video generation isn't cheap and nobody discusses this honestly enough.

Kling 3.0 standard model costs approximately $0.04 per frame through the API. A 120-frame clip at 1080p runs about $4.80. The pro model doubles that to roughly $0.08 per frame, so $9.60 for the same clip. These costs add up fast when you're iterating.
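Here's the same arithmetic as a small estimator for budgeting a shot. The per-frame prices are the approximate figures quoted above, and the 0.4 multiplier for 480p previews reflects the roughly 60% saving mentioned in the strategy list below. Both are assumptions that will drift as pricing changes, so check current rates before relying on the output; the function names are hypothetical.

```python
from decimal import Decimal

# Approximate prices from this guide (USD per frame at 1080p); verify current rates.
PRICE_PER_FRAME = {"standard": Decimal("0.04"), "pro": Decimal("0.08")}
PREVIEW_480P_FACTOR = Decimal("0.4")  # ~60% cheaper than 1080p (assumption)


def clip_cost(frames: int, model: str = "standard", preview: bool = False) -> Decimal:
    """Estimated cost of one generation in USD, rounded to cents."""
    cost = PRICE_PER_FRAME[model] * frames
    if preview:
        cost *= PREVIEW_480P_FACTOR
    return cost.quantize(Decimal("0.01"))


def shot_budget(frames: int, iterations: int, final_model: str = "pro") -> Decimal:
    """Standard-model 480p iterations plus one full-quality 1080p final render."""
    previews = clip_cost(frames, "standard", preview=True) * iterations
    return previews + clip_cost(frames, final_model)


print(clip_cost(120))                   # 4.80 (standard, 1080p)
print(clip_cost(120, "pro"))            # 9.60 (pro, 1080p)
print(shot_budget(120, iterations=4))   # 17.28 (4 previews + pro final)
```

`Decimal` keeps the cent values exact, which avoids floating-point noise when you add up many runs.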

My cost management strategy:

- Prototype at 480p with the standard model. This cuts costs by roughly 60% during the iteration phase. Camera paths and motion behavior look the same at lower resolution, so you can validate your settings cheaply.

- Lock in settings, then render at full quality. Once I'm happy with the motion, I switch to pro model at 1080p for the final render. This means I'm only paying premium prices for the final version.

- Use seeds for reproducibility. When a clip is 90% right, note the seed and tweak one parameter at a time. Random seeds during iteration waste money because you can't isolate what changed.

- Batch similar shots. If you need 5 clips from the same scene, set up all 5 variations in one ComfyUI workflow and queue them. The API handles parallel requests well, and you'll spend less time waiting.

In my experience, a polished 10-second cinematic clip costs between $15 and $25 in API credits when you factor in 3-5 iterations plus the final render. That's remarkably affordable compared to stock footage licensing or actual film production, but it's not free. Budget accordingly.

Frequently Asked Questions

Does Kling 3.0 motion control work with the free API tier?

No. Motion control features require the Kling 3.0 API, which is a paid tier only. The free tier limits you to Kling 2.0 standard without motion control parameters. You'll need at least the basic paid plan, which starts around $10/month with included credits.

Can I use Kling 3.0 motion control without ComfyUI?

Yes. Kuaishou's official Kling platform at klingai.com offers a web interface with motion control options. However, the web interface has fewer parameters and doesn't allow the same level of customization. ComfyUI gives you access to every parameter and lets you build reusable, automated workflows.

What's the maximum video length I can generate with motion control?

Kling 3.0 supports up to 200 frames per generation, which translates to about 8.3 seconds at 24fps. For longer videos, you'll need to generate multiple clips and stitch them. The seed and source frame approach works well here: use the last frame of clip 1 as the source image for clip 2.

How does motion control affect generation time?

Adding motion control parameters increases generation time by approximately 30-50% compared to the same generation without motion control. Camera paths add the least overhead, while object tracking adds the most. A typical 120-frame clip with a camera path and motion mask generates in about 2-3 minutes with the standard model.

Can I combine Kling 3.0 with other ComfyUI video nodes?

Absolutely. The Kling 3.0 output integrates cleanly with standard ComfyUI video nodes. You can pipe Kling output into frame interpolation nodes (like RIFE) for smoother playback, color grading nodes for post-processing, or VideoHelperSuite for format conversion. This composability is one of ComfyUI's greatest strengths.

Is Kling 3.0 better than Runway Gen-3 for all use cases?

No. Runway Gen-3 Alpha still produces more aesthetically "polished" results for certain creative styles, particularly stylized and artistic content. Kling 3.0 excels at realistic motion, physics simulation, and scenarios where precise camera control matters. For documentary or cinematic realism, Kling wins. For creative art direction with less technical control needs, Runway is still competitive.

Do I need a powerful GPU to use Kling 3.0 in ComfyUI?

Not for the Kling generation itself, since processing happens on Kuaishou's servers via API. You do need GPU capability if your workflow includes local processing steps like image generation (Flux/SDXL), segmentation (SAM), or post-processing (upscaling). The Kling nodes themselves run fine on CPU-only setups.

What image formats work best as source inputs?

PNG at 1920x1080 produces the best results. JPEG works but introduces compression artifacts that the model can amplify. Always use the highest quality source you can provide. If you're generating source images within ComfyUI, save them as PNG before feeding them to the Kling nodes.
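If your source render isn't already 16:9 (a square SDXL or Flux image, for example), crop it before resizing so nothing gets stretched. This sketch only computes the largest centered 16:9 box; it is plain arithmetic, not part of the Kling nodes.

```python
def center_crop_box(width: int, height: int, target_w: int = 1920, target_h: int = 1080):
    """Largest centered box with the target aspect ratio, as (left, upper, right, lower).

    Integer cross-multiplication avoids floating-point rounding in the comparison.
    """
    if width * target_h > height * target_w:
        # Source is wider than the target ratio: trim the left and right edges.
        new_w = height * target_w // target_h
        left = (width - new_w) // 2
        return (left, 0, left + new_w, height)
    # Source is taller than (or equal to) the target ratio: trim top and bottom.
    new_h = width * target_h // target_w
    top = (height - new_h) // 2
    return (0, top, width, top + new_h)


print(center_crop_box(1024, 1024))  # -> (0, 224, 1024, 800)
print(center_crop_box(1920, 1080))  # -> (0, 0, 1920, 1080)
```

The returned tuple matches the box format of Pillow's `Image.crop`, so `img.crop(box).resize((1920, 1080), Image.Resampling.LANCZOS).save("source.png")` yields a 1080p PNG, and `ImageOps.fit` does the crop and resize in one call. Upscaling a small crop to 1920x1080 won't add real detail, so generate the source at or near that size when you can.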

Can I export camera path presets and share them?

Yes. KlingCameraPath node settings can be saved as part of your ComfyUI workflow JSON. You can share entire workflow files or extract just the camera path parameters. Several community members have started sharing preset libraries on CivitAI and the ComfyUI subreddit.
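Pulling just the camera path settings out of a shared workflow can be scripted. A minimal sketch, assuming the workflow was exported in ComfyUI's API format, where each node ID maps to `{"class_type": ..., "inputs": {...}}`. The input names in the sample (`movement`, `strength`) are hypothetical; use whatever your KlingCameraPath node actually exposes.

```python
import json


def extract_camera_paths(workflow_json: str, class_type: str = "KlingCameraPath") -> dict:
    """Return {node_id: literal_inputs} for every camera path node in an API-format workflow."""
    workflow = json.loads(workflow_json)
    presets = {}
    for node_id, node in workflow.items():
        if node.get("class_type") != class_type:
            continue
        # Inputs wired to other nodes are stored as [source_node_id, output_index]
        # lists; drop all list values so only the literal settings remain.
        presets[node_id] = {
            name: value
            for name, value in node.get("inputs", {}).items()
            if not isinstance(value, list)
        }
    return presets


sample = json.dumps({
    "3": {"class_type": "LoadImage", "inputs": {"image": "source.png"}},
    "5": {"class_type": "KlingCameraPath",
          "inputs": {"movement": "orbit", "strength": 0.7, "image": ["3", 0]}},
})
presets = extract_camera_paths(sample)
print(json.dumps(presets, indent=2))  # save this output as a shareable preset file
```

Because the filter drops every list value, a camera path input that is genuinely a literal list would also be dropped; adjust the check if your node uses one.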

How do I handle scenes with multiple moving subjects?

Use multiple object tracking anchors, one per subject, and set each anchor's tracking strength according to that subject's importance in the scene: 0.85-0.95 for the primary subject and 0.6-0.75 for secondary subjects. In the motion mask, give each subject its own intensity value based on how much that subject is expected to move.
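One way to turn those ranges into concrete numbers is to interpolate within each role's range by a relative importance score. This is a sketch under stated assumptions: the `TrackingAnchor` record and its `importance` field are hypothetical helpers for illustration, not the Kling node's real schema.

```python
from dataclasses import dataclass

# Strength ranges taken from the guidance above.
STRENGTH_RANGES = {
    "primary": (0.85, 0.95),
    "secondary": (0.60, 0.75),
}


@dataclass
class TrackingAnchor:
    """Hypothetical per-subject anchor record; not the Kling node's real schema."""
    subject: str
    role: str          # "primary" or "secondary"
    importance: float  # 0.0 = least important, 1.0 = most important within the role

    def strength(self) -> float:
        low, high = STRENGTH_RANGES[self.role]
        weight = min(max(self.importance, 0.0), 1.0)  # clamp to [0, 1]
        # Linear interpolation inside the role's range.
        return round(low + (high - low) * weight, 3)


anchors = [
    TrackingAnchor("lead dancer", "primary", 1.0),
    TrackingAnchor("partner", "secondary", 0.8),
    TrackingAnchor("background crowd", "secondary", 0.0),
]
for anchor in anchors:
    print(anchor.subject, anchor.strength())
```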

Final Thoughts

Kling 3.0 motion control in ComfyUI represents a genuine paradigm shift in how we approach AI video generation. We've gone from "generate a clip and hope the motion looks good" to "direct the camera exactly where you want it and control precisely how much each element moves." That's the difference between a toy and a tool.

The learning curve is real. You'll spend time configuring nodes, experimenting with mask values, and iterating on camera paths before you consistently produce great results. But once you internalize the workflow, the creative possibilities open up enormously. I've started using Kling 3.0 for work that I previously would have outsourced to videographers or stock footage libraries, and the turnaround time is measured in minutes instead of days.

If you're already working with Kling in ComfyUI, upgrading to v0.16 and exploring the 3.0 motion control nodes should be your top priority this month. If you're new to AI video, consider starting with the Kling 2.5 Turbo guide to build foundational knowledge before jumping into motion control.

The tools keep getting better. Our job is to keep learning how to use them well.
