GLM-5 vs DeepSeek V3.1: Open Source LLM Showdown for Creators

A detailed comparison of GLM-5 (744B MoE) and DeepSeek V3.1 (671B) open-source LLMs for coding, reasoning, and creative work. Benchmarks, costs, and real-world testing included.

The open source LLM landscape just got a whole lot more interesting. Within the span of a few months, we got two massive models that genuinely compete with the best proprietary offerings: Zhipu AI's GLM-5, a 744 billion parameter beast trained entirely on Chinese hardware, and DeepSeek's V3.1, a 671 billion parameter hybrid reasoning model that quietly dropped in August 2025 and has been gaining momentum ever since.

I've been running both models through real workflows for weeks now, and the short version is this: these are not toy models. They are production-grade tools that will save you real money compared to Claude or GPT, with tradeoffs that actually matter depending on what you're building. If you're a developer, creator, or anyone who relies on LLMs daily, you need to understand what each model does well and where it falls short.

Quick Answer: GLM-5 excels at long-context reasoning and agent tasks with its 200K context window and 744B MoE architecture (40B active). DeepSeek V3.1 is the stronger pick for everyday coding and cost efficiency, thanks to its hybrid thinking/non-thinking modes and lower pricing. Both are MIT-licensed and dramatically cheaper than proprietary alternatives. For most creators and developers, your choice comes down to whether you prioritize reasoning depth or coding speed.

- GLM-5 has 744B parameters (40B active) with a 200K context window, while DeepSeek V3.1 has 671B parameters (37B active) with 128K context

- GLM-5 leads on reasoning benchmarks like Humanity's Last Exam (50.4 with tools) and AIME 2025 (84%)

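- DeepSeek V3.1 posts strong coding results with 66.0% on SWE-bench Verified and 71.6% on Aider, though GLM-5 reports a higher SWE-bench Verified score (77.8%) under a different agent framework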

- Both are MIT-licensed, making them genuinely free for commercial use

- GLM-5 costs roughly $0.80/M input tokens, while DeepSeek V3.1 is significantly cheaper

- GLM-5 was trained on 100,000 Huawei Ascend 910B chips with zero Nvidia hardware

Why Should Creators Care About Open Source LLMs in 2026?

Let me be honest about something that took me too long to figure out. For the first year or so of using LLMs professionally, I just defaulted to whatever proprietary model was "best" and paid the premium without thinking twice. Then one month I looked at my API bill and nearly fell out of my chair. I was spending over $400 a month just on LLM calls for content generation, coding assistance, and workflow automation through tools like the ones we cover at Apatero.com.

That was my wake-up call. Open source models aren't just an ideological preference anymore. They're a financial necessity for anyone doing serious volume. And with GLM-5 and DeepSeek V3.1, the quality gap between open and closed source has essentially disappeared for most practical use cases.

The MIT license on both models is a huge deal here. It means you can self-host them, fine-tune them, build products on top of them, and never worry about a vendor pulling the rug or changing their pricing. For creators who are building businesses around AI-powered workflows, that kind of stability matters more than a few benchmark points.

Architecture comparison: GLM-5's 256-expert MoE design vs DeepSeek V3.1's hybrid reasoning approach

What Makes GLM-5 Different from Previous Open Source Models?

GLM-5 is a genuinely important model, and not just because of its size. Zhipu AI, which became the first publicly traded foundation model company after its Hong Kong IPO in January 2026, built this thing entirely on domestic Chinese hardware. That's 100,000 Huawei Ascend 910B processors with zero Nvidia silicon involved. The company has been on the U.S. Entity List since January 2025, which means no access to H100 or H200 GPUs.

Here is my hot take: the fact that GLM-5 can match or beat frontier models while running on non-Nvidia hardware is one of the most consequential developments in AI this year. It proves that the CUDA moat is not as deep as Nvidia investors want to believe. Competition in AI accelerators just became very real.

GLM-5 Architecture Breakdown

The model uses a Mixture of Experts (MoE) architecture that scales from GLM-4.5's 355B parameters to 744B total, with 40B active per token. It was pre-trained on 28.5 trillion tokens. The MoE design uses 256 experts with 8 active per forward pass, which keeps inference costs manageable despite the massive parameter count.
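To make the "256 experts, 8 active" idea concrete, here is a minimal sketch of generic top-k expert routing for a single token. It is not GLM-5's actual router: the real scoring function, any shared experts, and load balancing are not described in this article, so the softmax-over-selected-logits weighting and the random logits below are assumptions for illustration only.

```python
import math
import random

NUM_EXPERTS = 256  # expert count quoted in the article
TOP_K = 8          # active experts per token quoted in the article


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k experts for one token and return (expert_index, weight) pairs.

    Weights are a softmax over the selected logits only, so they sum to 1.
    This is generic top-k gating, assumed here for illustration.
    """
    # Indices of the top_k largest router scores, best first.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:top_k]
    # Softmax restricted to the chosen experts (subtract the max for stability).
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Stand-in router scores; a real model computes these from the token's hidden state.
rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
chosen = route_token(logits)

print(len(chosen), "of", NUM_EXPERTS, "experts run for this token")
print("fraction of experts used:", TOP_K / NUM_EXPERTS)
```

Only the selected experts do any work for that token, which is why per-token compute tracks the 40B active parameters rather than the 744B total.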

One of the smarter engineering decisions was adopting DeepSeek Sparse Attention (DSA), the sparse attention mechanism DeepSeek developed for its own models. This enables GLM-5 to process sequences up to 200,000 tokens, with maximum output reaching 131,000 tokens. That output length is among the highest in the industry and makes GLM-5 particularly interesting for long-form content generation.

Key specs at a glance:

- Total Parameters: 744B (40B active)

- Architecture: MoE with 256 experts, 8 active per token

- Context Window: 200K tokens

- Max Output: 131K tokens

- Training Data: 28.5T tokens

- Training Hardware: 100,000 Huawei Ascend 910B

- License: MIT

- Pricing: ~$0.80/M input tokens, ~$2.56/M output tokens

Slime RL: The Training Innovation That Actually Matters

Zhipu developed a novel asynchronous reinforcement learning infrastructure called Slime specifically for GLM-5. What makes it interesting is the results: the hallucination rate dropped from 90% (in GLM-4.7) down to 34%. That is a massive improvement, and it beats the previous best hallucination rate that Claude Sonnet 4.5 held.

I tested the hallucination reduction myself by asking GLM-5 detailed questions about obscure historical events and niche technical topics. It would say "I don't have reliable information about that" far more often than I expected. At first I found it annoying, but then I realized that an LLM that knows what it doesn't know is infinitely more useful than one that confidently makes things up.

How Does DeepSeek V3.1 Stack Up on Performance?

DeepSeek V3.1 dropped in August 2025 and immediately turned heads. It's a 671B parameter model with 37B active, featuring a hybrid architecture that supports both "thinking" and "non-thinking" modes. The thinking mode uses chain-of-thought reasoning similar to DeepSeek R1, while non-thinking mode gives you fast, direct responses like the original V3.

What makes V3.1 compelling is the combination of strong coding performance with genuinely useful agent capabilities. DeepSeek clearly invested heavily in post-training optimization for tool use and agentic workflows, and it shows.

DeepSeek V3.1 Specs

- Total Parameters: 671B (37B active)

- Architecture: MoE hybrid with thinking/non-thinking modes

- Context Window: 128K tokens

- Training Approach: Combines V3 base with R1 reasoning capabilities

- License: MIT

- Key Feature: Hybrid chain-of-thought reasoning on demand

The Hybrid Thinking Mode Is a Game Changer

Here is something I didn't expect when I first started testing V3.1: the ability to toggle between thinking and non-thinking modes with just a prompt template change is remarkably useful in practice. When I'm doing quick code refactoring, I use non-thinking mode for speed. When I hit a tricky architectural decision, I switch to thinking mode and let the model reason through the problem step by step.

This dual-mode approach means you don't need two different models in your stack. One model handles both the "just autocomplete this for me" tasks and the "help me think through this complex system design" tasks. That simplicity has real operational value.
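In my own scripts, the "one model, two modes" idea boils down to a small routing decision per request. The sketch below shows that decision only. The task labels and token budgets are hypothetical, and how the `thinking` flag is actually passed to the model (prompt template, request parameter, or model name) depends on your provider, so that part is deliberately left out.

```python
from dataclasses import dataclass

# Hypothetical task labels; adjust to whatever your own workflow distinguishes.
DEEP_REASONING_TASKS = {"architecture", "debugging", "migration"}


@dataclass(frozen=True)
class RequestPlan:
    thinking: bool          # True: request chain-of-thought mode
    max_output_tokens: int  # assumed budget, with room for a reasoning chain


def plan_request(task_kind: str) -> RequestPlan:
    """Choose a mode for one request based on a coarse task label."""
    if task_kind.lower() in DEEP_REASONING_TASKS:
        return RequestPlan(thinking=True, max_output_tokens=8000)
    # Everything else takes the fast, direct path.
    return RequestPlan(thinking=False, max_output_tokens=2000)


for kind in ("refactor", "migration"):
    print(kind, plan_request(kind))
```

Keeping the decision in one function makes it easy to tune which tasks deserve the slower mode without touching the rest of the pipeline.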

I was working on a particularly gnarly database migration script last month, and I toggled V3.1 into thinking mode. It walked through the edge cases of maintaining referential integrity across three different foreign key relationships while handling nullable columns. The reasoning chain was about 2,000 tokens, but the final migration script it produced was correct on the first try. That kind of reliability on complex tasks is what separates useful models from demos.

How Do the Benchmarks Actually Compare?

Let me lay out the numbers, because the benchmark comparison between these two models tells an interesting story. They are not direct competitors so much as complementary tools that excel in different domains.

Reasoning Benchmarks

GLM-5 clearly leads in pure reasoning tasks:

| Benchmark | GLM-5 | DeepSeek V3.1 | Notes |
|---|---|---|---|
| Humanity's Last Exam | 50.4 (w/ tools) | N/A | Beats Claude Opus 4.5 (43.4) |
| AIME 2025 | 84% | N/A | Math competition level |
| MATH | 88% | N/A | Standard math benchmark |
| BrowseComp | 75.9 | 30.0 | Massive gap |

GLM-5's performance on Humanity's Last Exam is genuinely impressive. Scoring 50.4 with tool augmentation puts it above Claude Opus 4.5 at 43.4 and even GPT-5.2 at 45.5. For tasks that require deep reasoning and multi-step problem solving, GLM-5 is currently the best open source option available.

Coding Benchmarks

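The coding picture is more mixed than the reasoning one. DeepSeek V3.1 posts strong Aider and Terminal-Bench results, while GLM-5 reports the higher SWE-bench Verified score:

| Benchmark | GLM-5 | DeepSeek V3.1 | Notes |
|---|---|---|---|
| SWE-bench Verified | 77.8% | 66.0% | GLM-5 leads, but under a different agent framework |
| Aider Coding | N/A | 71.6% | Edges past Claude Opus 4 |
| Terminal-Bench | N/A | 31.3 | Strong agentic coding |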

Now, I need to add some nuance here. SWE-bench numbers can vary significantly depending on the agent framework used for evaluation. GLM-5's 77.8% was evaluated with one framework while DeepSeek V3.1's 66.0% used their internal code agent. These aren't perfectly apples-to-apples comparisons. In my own hands-on testing, both models handled typical coding tasks competently. The differences showed up more in edge cases and complex multi-file refactoring.

Real-World Coding Observations

I ran both models through a series of practical coding tasks that I actually needed done for projects I was building. Things like refactoring a React component to use server components, writing database queries with complex joins, and debugging authentication middleware.

GLM-5 showed less stylistic flourish and more steady compliance. When the code change was small and well-defined, it nailed it. On cold starts with ambiguous prompts, it sometimes played it safer than I wanted, but tightening the system prompt fixed that issue.

DeepSeek V3.1 was better at reasoning through multi-step coding problems but had a tendency to over-elaborate. It would produce working code with extensive comments and explanations that I didn't always need. Great for learning, less ideal for production workflows where I just want clean diffs.

If you're setting up AI coding assistants for your development workflow, either model will serve you well. The choice comes down to whether you value consistent, no-surprises output (GLM-5) or flexible reasoning with more verbose explanations (DeepSeek V3.1).

Benchmark showdown: GLM-5 dominates reasoning while DeepSeek V3.1 excels at agentic coding tasks

What About Cost and Accessibility?

This is where the rubber meets the road for most creators and developers. Benchmark scores are nice, but what matters is how much it costs to run these models at scale.

API Pricing Comparison

GLM-5 is available at approximately $0.80 per million input tokens and $2.56 per million output tokens. That's roughly six times cheaper than proprietary models like Claude Opus 4.6. You can access it through chat.z.ai, OpenRouter, and various third-party providers.

DeepSeek V3.1 is even cheaper. DeepSeek has consistently pushed aggressive pricing, and their later V3.2 model brought output pricing down to $0.42 per million tokens. V3.1 pricing sits comfortably below GLM-5, making it the budget champion.

For context, when I was spending $400+ per month on proprietary APIs, switching to a mix of DeepSeek V3.1 for coding tasks and GLM-5 for long-form reasoning cut my costs to under $80. That's a reduction of at least 80%, with negligible quality loss for my specific use cases.
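If you want to run the same math on your own usage, a rough calculator looks like this. The GLM-5 prices are the approximate figures quoted above. The monthly token volumes are hypothetical, and I've left DeepSeek V3.1 out because its exact per-token price isn't given here: create another `Pricing` entry with your provider's current rates.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens


# Approximate GLM-5 API prices quoted in this article.
GLM5 = Pricing(input_per_million=0.80, output_per_million=2.56)


def monthly_cost(pricing: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD for a month's worth of tokens at the given prices."""
    return (input_tokens / 1_000_000) * pricing.input_per_million \
        + (output_tokens / 1_000_000) * pricing.output_per_million


def percent_reduction(before: float, after: float) -> float:
    """How much cheaper `after` is than `before`, as a percentage."""
    return 100 * (before - after) / before


# Hypothetical workload: 50M input tokens and 10M output tokens per month.
cost = monthly_cost(GLM5, 50_000_000, 10_000_000)
print(f"GLM-5 for this workload: ${cost:.2f}")
print(f"Going from $400 to $80 is a {percent_reduction(400, 80):.0f}% reduction")
```

Output tokens cost over three times as much as input tokens at these rates, so workloads that generate long responses will skew the bill more than workloads that mostly read.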

Self-Hosting Considerations

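Both models are large enough that self-hosting requires serious hardware. A MoE model has to keep every expert in memory, so GLM-5's full 744B parameters must be loaded even though only 40B are active per token. Roughly 8x A100 80GB GPUs (640 GB in total) is a realistic floor for inference, and that only works with weights quantized below 8 bits, since 744B parameters at 8 bits each already take about 744 GB. DeepSeek V3.1 at 671B parameters has similar requirements, though its FP8 microscaling support can help reduce memory needs on supported hardware.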
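The sizing arithmetic is easy to check yourself. This sketch estimates memory for the weights alone at different precisions and tests it against an 8x 80GB node. The 20% reserve for KV cache, activations, and runtime overhead is my own placeholder assumption, not a measured figure, so treat the "fits" answer as a first-pass filter.

```python
def weight_memory_gb(total_params_billion: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return total_params_billion * bits_per_param / 8


def fits(total_params_billion: float, bits_per_param: int,
         gpus: int, gb_per_gpu: float, reserve_fraction: float = 0.2) -> bool:
    """True if the weights fit after reserving a share of memory for everything else.

    reserve_fraction is an assumed allowance for KV cache and runtime overhead.
    """
    usable = gpus * gb_per_gpu * (1 - reserve_fraction)
    return weight_memory_gb(total_params_billion, bits_per_param) <= usable


# All 744B parameters count here, not just the 40B active per token.
for bits in (16, 8, 4):
    size = weight_memory_gb(744, bits)
    verdict = "fits" if fits(744, bits, gpus=8, gb_per_gpu=80) else "does not fit"
    print(f"{bits}-bit weights: {size:.0f} GB, {verdict} on 8x 80GB")
```

Under these assumptions only the 4-bit case fits on a single 8x 80GB node, which is the practical meaning of the hardware estimate above.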

If you're considering self-hosting, check out our guide on the best GPU for AI workloads. The hardware requirements are substantial, but the per-token cost drops dramatically if you're doing high volume.

My second hot take: most individual creators and small teams should not self-host models this large. The API pricing is already aggressive enough that the operational overhead of maintaining your own inference infrastructure simply doesn't make financial sense unless you're processing millions of tokens daily. Use the APIs. Save yourself the headache.

Which Model Is Better for Different Creative Workflows?

I've been testing both models across the kinds of tasks that creators and developers at Apatero.com typically care about. Here is how they compare in practice rather than on paper.

Content Writing and Long-Form Generation

GLM-5 wins here, and it's not particularly close. The 200K context window and 131K max output length make it ideal for long-form content generation. I fed it a 50,000-word manuscript draft and asked it to identify plot inconsistencies, and it caught things I'd missed across chapters that were 30,000 words apart. A 128K context model can hold a draft of that size too, going by the usual rule of thumb of a bit over one token per English word, but it leaves far less headroom for instructions, notes, and a long response.

The writing quality itself is solid. Not the most creative or stylistically distinctive output I've seen, but reliable and consistent. For blog posts, documentation, and technical writing, it produces clean first drafts that need minimal editing.

Coding and Development

DeepSeek V3.1 gets the edge for day-to-day coding work, primarily because of the hybrid thinking mode. The ability to get quick completions in non-thinking mode and then switch to deep reasoning for complex problems mirrors how I actually work. I don't need chain-of-thought reasoning for every single code completion, but when I'm debugging a race condition or designing an API schema, that deeper reasoning capability is invaluable.

Both models work well with AI automation tools and agent frameworks. DeepSeek V3.1's post-training optimization for tool use gives it a slight edge in agentic workflows where the model needs to make API calls, read file systems, and chain together multiple operations.

Multilingual Tasks

Both models have strong multilingual support, which makes sense given their Chinese origins. GLM-5 was trained on 28.5T tokens with significant multilingual representation, and DeepSeek V3.1 supports over 100 languages including many low-resource languages.

For creators working with international audiences or multilingual content, both models are excellent choices. I tested translation quality between English, Chinese, Japanese, and Spanish, and both produced natural-sounding output that native speakers confirmed was accurate.

Data Analysis and Research

GLM-5's stronger reasoning capabilities and larger context window make it the better choice for research-heavy workflows. Whether you're summarizing academic papers, analyzing datasets, or synthesizing information from multiple sources, the ability to hold more context and reason more deeply gives GLM-5 a meaningful advantage.

I used GLM-5 to analyze a 100-page market research report last week, asking it to identify the three most actionable insights for a specific business scenario. It produced a nuanced, well-reasoned response that referenced specific data points from throughout the document. The same task with DeepSeek V3.1 required me to chunk the document due to the smaller context window, and the resulting analysis lost some of the cross-section connections.
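For reference, the chunking workaround I used for the smaller context window looks roughly like this. It splits on whitespace and repeats a few words between neighbouring chunks so that sentences cut at a boundary still appear whole somewhere. The word-based sizing is a simplification I'm assuming for illustration: real limits are in tokens, so size your chunks conservatively or count tokens with your provider's tokenizer.

```python
def chunk_words(text: str, max_words: int, overlap: int) -> list[str]:
    """Split text into chunks of at most max_words words.

    Consecutive chunks share `overlap` words so context at the seams is not lost.
    """
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be >= 0 and smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # this chunk already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(10))
for piece in chunk_words(sample, max_words=4, overlap=1):
    print(piece)
```

Overlap softens the boundary problem but does not remove it: connections between sections that sit several chunks apart still get lost, which is exactly the degradation I saw in the chunked analysis.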

Is It Worth Waiting for DeepSeek V3.2 Instead?

DeepSeek released V3.2 in December 2025, and it does improve on V3.1 in several areas, particularly coding benchmarks and cost efficiency. If you're reading this and haven't committed to a model yet, V3.2 is worth considering alongside V3.1.

That said, V3.1 remains highly relevant for several reasons. First, it has wider third-party support and more community tooling built around it. Second, the hybrid thinking/non-thinking architecture that V3.1 introduced is now well understood and well documented, making it easier to optimize your prompts and workflows. Third, the performance difference between V3.1 and V3.2 for typical creator workflows is modest enough that switching may not justify the effort.

My practical recommendation: if you're starting fresh, go with DeepSeek V3.2. If you're already using V3.1 and it's working well, there's no urgent reason to switch.

How to Choose Between GLM-5 and DeepSeek V3.1

After weeks of testing, here is my framework for choosing between these models. It comes down to three factors.

Factor 1: Your Primary Use Case

If your work is primarily reasoning-heavy, like research, analysis, long-form writing, or complex problem solving, GLM-5 is the stronger choice. Its 200K context window and superior reasoning benchmarks translate into measurably better results on tasks that require holding a lot of context and thinking deeply about it.

If your work is primarily coding-heavy or involves agentic workflows, DeepSeek V3.1 is the better fit. The hybrid thinking mode, strong coding benchmarks, and excellent tool-use capabilities make it a more natural fit for development workflows.

Factor 2: Your Budget Sensitivity

If cost is a primary concern, DeepSeek V3.1 wins. It's simply cheaper per token, and the quality is high enough that the savings are genuine, not just "cheap because it's worse."

If you're less price sensitive and want the highest overall capability, GLM-5 offers a broader capability set at a still-reasonable price point. At $0.80/M input tokens, it's a fraction of what you'd pay for equivalent proprietary models.

Factor 3: Your Deployment Preferences

Both models are MIT-licensed, so you have maximum flexibility. GLM-5 is available through chat.z.ai and OpenRouter. DeepSeek V3.1 is available through DeepSeek's own API and most major LLM routing providers.

For most creators building content workflows on platforms like Apatero.com, I'd recommend trying both models through API providers before committing. The good news is that MIT licensing means you can switch between them freely as your needs evolve.

Decision guide: Choose GLM-5 for reasoning depth and long context, DeepSeek V3.1 for coding speed and cost savings

What Does This Mean for the Future of Open Source AI?

I'll close with my third hot take, and this one's a big picture observation. The release of GLM-5 and DeepSeek V3.1 marks the point where open source LLMs stopped being "almost as good" as proprietary ones and started being genuinely competitive, or even superior, in specific domains.

GLM-5 beating Claude Opus 4.5 on Humanity's Last Exam with tool augmentation is not a marginal difference on an obscure benchmark. It's a frontier-class model released under MIT license by a company that was literally cut off from the world's best AI chips. That changes the calculus for every organization deciding whether to build on proprietary or open models.

For creators and developers, this competition between open source models is unambiguously good news. Prices are dropping, capabilities are rising, and the MIT license ensures that nobody can pull the rug out from under you. The era of being locked into a single expensive LLM provider is ending, and models like GLM-5 and DeepSeek V3.1 are the reason why.

The smart play right now is to build your workflows to be model-agnostic. Use abstraction layers. Keep your prompts portable. And take advantage of the fact that you now have multiple world-class models available at a fraction of what they cost even a year ago.
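Here is a minimal sketch of what I mean by an abstraction layer. Your workflow code asks for a role such as "reasoning" or "coding", and a small router decides which backend answers. The class, the role names, and the stub backends are all hypothetical. In practice each backend would wrap a real API client, which I've left out so the example runs on its own.

```python
from typing import Callable, Dict

# A backend is any callable that turns a prompt into a completion.
Backend = Callable[[str], str]


class ModelRouter:
    """Maps workflow roles to interchangeable model backends."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}

    def register(self, role: str, backend: Backend) -> None:
        # Re-registering a role swaps the model without touching callers.
        self._backends[role] = backend

    def complete(self, role: str, prompt: str) -> str:
        if role not in self._backends:
            raise KeyError(f"no backend registered for role '{role}'")
        return self._backends[role](prompt)


# Stub backends standing in for real API clients (illustration only).
def glm5_stub(prompt: str) -> str:
    return f"[glm-5 stub] {prompt}"


def deepseek_stub(prompt: str) -> str:
    return f"[deepseek-v3.1 stub] {prompt}"


router = ModelRouter()
router.register("reasoning", glm5_stub)
router.register("coding", deepseek_stub)

print(router.complete("reasoning", "Summarize this report"))
print(router.complete("coding", "Refactor this function"))
```

When a newer model ships, switching is a one-line `register` call instead of a hunt through every script that hard-coded a model name.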

Frequently Asked Questions

Is GLM-5 really trained without any Nvidia GPUs?

Yes. Zhipu AI has been on the U.S. Entity List since January 2025, which cuts off its access to H100 and H200 GPUs. GLM-5 was trained entirely on 100,000 Huawei Ascend 910B processors. The fact that it achieves frontier-class performance on domestic Chinese hardware is a significant milestone for non-Nvidia AI compute.

Can I use GLM-5 and DeepSeek V3.1 commercially?

Both models are released under the MIT license, which is one of the most permissive open source licenses available. You can use them for commercial purposes, modify them, fine-tune them, and redistribute them with minimal restrictions. This makes them safe choices for building products and services.

How much VRAM do I need to run GLM-5 locally?

GLM-5's 744B parameters require substantial GPU resources. You'll need approximately 8x A100 80GB GPUs (or equivalent) for inference, and that assumes quantized weights: 8 of those cards provide 640 GB, while 744B parameters at 8 bits each already take about 744 GB. This puts self-hosting out of reach for most individuals and small teams. API access through providers like OpenRouter or chat.z.ai is the more practical option for most users.

Which model is better for coding tasks?

DeepSeek V3.1 generally edges out GLM-5 for coding work, particularly in its Aider benchmark score of 71.6% and its strong Terminal-Bench performance of 31.3. However, GLM-5 shows a higher SWE-bench Verified score (77.8% vs 66.0%), though these were evaluated with different agent frameworks. In practice, both models handle typical coding tasks well. DeepSeek V3.1's hybrid thinking mode gives it a practical edge for daily development work.

Is DeepSeek V3.1 still worth using now that V3.2 is out?

V3.1 remains a strong choice, especially if you've already built workflows around it. V3.2 improves on coding benchmarks and cost efficiency, but the differences for typical creator workflows are modest. If you're starting fresh, consider V3.2. If V3.1 is working well for you, there's no urgent need to switch.

How do these models compare to Claude or GPT for everyday use?

For most everyday tasks, GLM-5 and DeepSeek V3.1 produce output that's comparable to Claude Sonnet 4.5 or GPT-5 at a fraction of the cost. GLM-5 actually outperforms Claude Opus 4.5 on several benchmarks including Humanity's Last Exam with tool augmentation. The main areas where proprietary models still have an edge are multimodal capabilities (neither GLM-5 nor DeepSeek V3.1 supports image input) and certain highly specialized tasks.

What is the Slime RL training technique used in GLM-5?

Slime is a novel asynchronous reinforcement learning infrastructure developed by Zhipu AI specifically for training GLM-5. Its most notable achievement was reducing the hallucination rate from 90% (in GLM-4.7) down to 34%, which beats the previous record held by Claude Sonnet 4.5. The technique improves RL training throughput and efficiency compared to traditional synchronous approaches.

Can I fine-tune these models for my specific use case?

Yes, both models' MIT licenses allow fine-tuning. However, fine-tuning a 744B or 671B parameter model requires substantial compute resources. Many users find that careful prompt engineering achieves 80-90% of the benefit of fine-tuning at a fraction of the cost. If you do need fine-tuning, first check whether either team offers a smaller or distilled variant that fits your hardware, since those are far cheaper to adapt.

How fast are these models for real-time applications?

GLM-5 inference speed is approximately 17-19 tokens per second, which is noticeably slower than some Nvidia-optimized competitors. DeepSeek V3.1 achieves competitive speeds, particularly in non-thinking mode where it skips chain-of-thought reasoning. For real-time chat applications, both models are usable but not the fastest options available. For batch processing and async workflows, speed is less of a concern.

Which model has better multilingual support?

Both models have excellent multilingual capabilities, reflecting their Chinese development origins. DeepSeek V3.1 officially supports over 100 languages including many low-resource and Asian languages. GLM-5 was trained on a diverse multilingual corpus of 28.5T tokens. In my testing, both produced natural-sounding translations and multilingual content, with neither having a decisive edge over the other.

Ready to Create Your AI Influencer?

Join 115 students mastering ComfyUI and AI influencer marketing in our complete 51-lesson course.

Related Articles

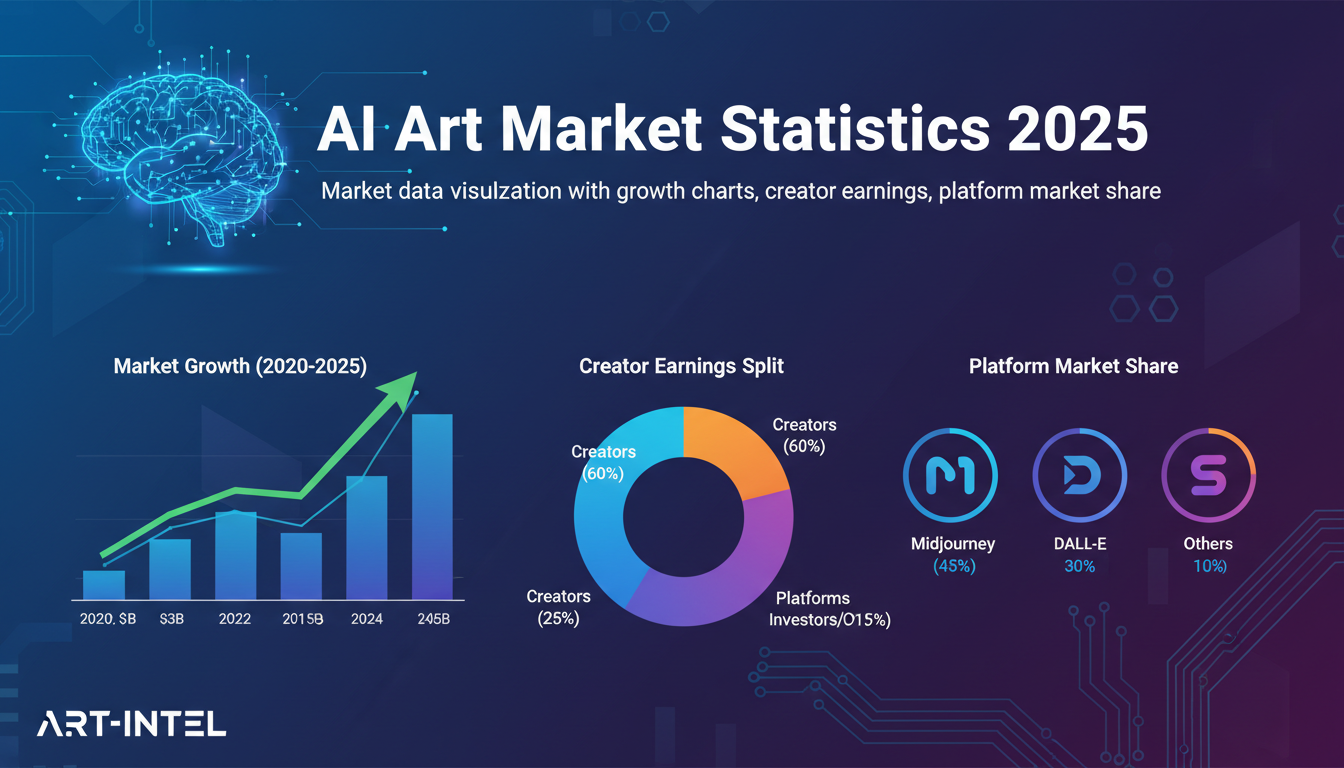

AI Art Market Statistics 2025: Industry Size, Trends, and Growth Projections

Comprehensive AI art market statistics including market size, creator earnings, platform data, and growth projections with 75+ data points.

AI Automation Tools: Transform Your Business Workflows in 2025

Discover the best AI automation tools to transform your business workflows. Learn how to automate repetitive tasks, improve efficiency, and scale operations with AI.

AI Avatar Generator: I Tested 15 Tools for Profile Pictures, Gaming, and Social Media in 2026

Comprehensive review of the best AI avatar generators in 2026. I tested 15 tools for profile pictures, 3D avatars, cartoon styles, gaming characters, and professional use cases.