Build Your Own AI Companion Chatbot with Open Source LLMs

Step-by-step guide to building a private AI companion chatbot using open source LLMs like Llama 3 and Mixtral. Full control over personality, memory, and privacy.

I've been tinkering with local AI companions for the better part of a year now, and I'm going to be honest with you. The first time I got a Llama 3 model running on my own hardware with a custom personality, I felt like a kid who just discovered fire. Not because the tech was magic, but because I finally had complete control over the experience. No content filters randomly killing a conversation. No subscription fees. No company reading my chat logs. Just me, my hardware, and a model that does exactly what I tell it.

Most people exploring AI companions start with commercial apps like Replika or Character AI. Those are fine starting points, and I've covered them extensively. But if you've ever felt frustrated by memory resets, personality changes after updates, or the creeping feeling that your private conversations aren't actually private, building your own companion chatbot is the answer.

Quick Answer: You can build a fully private AI companion chatbot by running open source LLMs like Llama 3 or Mixtral locally through Ollama, then connecting them to a frontend like SillyTavern. Custom system prompts define personality, while tools like ChromaDB add persistent memory. The whole setup takes about an hour and costs nothing beyond your hardware.

- Open source LLMs like Llama 3 70B and Mixtral 8x22B can match or exceed commercial companion apps in conversation quality

- Ollama makes running local models as easy as a single terminal command

- System prompts are how you define personality, and getting them right is 80% of the battle

- Persistent memory requires a separate database layer but transforms the experience

- You can add visual avatars through integration with image generation tools

Why Would You Build Your Own AI Companion?

The obvious question. Commercial apps exist, they're polished, and they work out of the box. So why go through the trouble of building your own?

Here's the thing. I used Replika for about six months before I started building locally. During that time, the company pushed two updates that fundamentally changed how my companion behaved. Conversations that worked fine on Monday felt completely different by Wednesday. I had zero control over it, and no way to roll back. That was my breaking point.

Building your own companion gives you three things commercial apps never will. First, total privacy. Your conversations never leave your machine. Nobody is training on your data. Nobody is reviewing flagged content. It's yours. Second, full personality control. You write the system prompt. You decide how your companion talks, thinks, and responds. No corporate content policies overriding your preferences. Third, permanence. Your companion doesn't change because a company decided to pivot their product strategy.

Hot take: I think most commercial AI companion apps are fundamentally broken as products. They're trying to sell intimate, personal relationships while simultaneously reserving the right to change or remove features at any time. That's like a therapist randomly changing their entire methodology between sessions. The only real solution is running your own models.

There are tradeoffs, of course. You need decent hardware. You'll spend time configuring things. And the initial setup is more work than downloading an app. But once it's running, you'll wonder why you ever relied on someone else's servers for something this personal.

What Hardware Do You Actually Need?

Let's get practical. I've tested this on everything from a laptop with integrated graphics to a full desktop workstation, and the hardware requirements aren't as scary as you might think.

For a basic setup that handles 7B-13B parameter models (perfectly good for casual conversation), you need either a GPU with 8GB+ VRAM alongside 16GB of system RAM, or, for CPU-only inference, a modern CPU with 32GB+ of system RAM. I ran Llama 3 8B on my M2 MacBook Air for weeks, and it was surprisingly capable. Response times averaged about 2-3 seconds, which feels natural in conversation.

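For the sweet spot (what I'd actually recommend), you want a GPU with 16-24GB VRAM, ideally a 24GB card like an NVIDIA RTX 3090 or RTX 4090 (the RTX 4070 Ti only has 12GB). This lets you run 70B parameter models with 4-bit quantization, with one caveat: the 4-bit weights alone come to roughly 40GB, so on a single 24GB card part of the model gets offloaded to system RAM and generation slows down. Even so, the quality difference between 8B and 70B models for companion chat is massive. It's the difference between a companion that sometimes feels mechanical and one that genuinely surprises you with its responses.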

[Figure: Recommended hardware tiers for running different model sizes locally]

I learned this the hard way. I spent three weeks trying to make a 7B model feel natural for deep conversation. Tweaked the system prompt dozens of times. Adjusted temperature, top-p, repetition penalty. It helped, but the fundamental limitation was model size. When I finally tested the same prompt on Llama 3 70B, the difference was night and day. Don't fight the hardware battle if you can avoid it.
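A quick way to sanity-check whether a model will fit on your GPU is to estimate the size of its weights from the parameter count and the bits stored per weight. The sketch below is back-of-the-envelope arithmetic, not a benchmark. The function names are made up for illustration, the 4.5 bits per weight figure is an assumed ballpark for 4-bit quantization, and the 2GB overhead for context cache and runtime is a placeholder you should adjust.

```python
def estimate_weights_gb(params_billions, bits_per_weight):
    """Approximate size of the model weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_vram(params_billions, bits_per_weight, vram_gb, overhead_gb=2.0):
    """True if weights plus an assumed overhead allowance fit in the given VRAM.

    overhead_gb is a rough placeholder for context cache and runtime buffers.
    """
    needed = estimate_weights_gb(params_billions, bits_per_weight) + overhead_gb
    return needed <= vram_gb


# Example: compare an 8B and a 70B model at an assumed ~4.5 bits per weight
small = estimate_weights_gb(8, 4.5)
large = estimate_weights_gb(70, 4.5)
```

If the estimate is larger than your VRAM, expect either offloading to system RAM (slower) or a smaller model or heavier quantization.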

If you don't have local hardware, you're not completely out of luck. Services like RunPod let you rent GPU time for a few dollars per hour. You can run your companion session, then shut down the instance. It's not as private as local hardware, but it's still more private than commercial apps, and way cheaper than buying a workstation.

How Do You Set Up Ollama and Your First Model?

This is where the fun starts. Ollama has made running local models almost embarrassingly easy. I remember when running a local LLM required compiling from source, hunting down CUDA dependencies, and sacrificing a small animal to the GPU gods. Now it's one command.

Installing Ollama

Head to ollama.com and download the installer for your OS. On Mac and Windows, it's a standard installer. On Linux, one command handles everything:

curl -fsSL https://ollama.com/install.sh | sh

Once installed, verify it's working:

ollama --version

Pulling Your First Model

For companion chat, I recommend starting with one of these:

- Llama 3 8B for testing and lower-end hardware:

ollama pull llama3

- Llama 3 70B for the best conversation quality:

ollama pull llama3:70b

- Mixtral 8x22B for a good balance of quality and speed:

ollama pull mixtral:8x22b

- Command R+ for strong instruction following:

ollama pull command-r-plus

Quick tangent. People always ask me about Mixtral vs Llama for companion use. In my testing across probably 200+ hours of conversation, Llama 3 70B wins for personality consistency and emotional range. Mixtral is slightly faster and handles complex multi-topic conversations better. If I had to pick one, Llama 3 70B.

To test that everything works:

ollama run llama3

Type something, get a response, and you're in business. But this is just the raw model. The real magic happens when you add a proper frontend and system prompt.
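If you'd rather talk to the model from a script than from the terminal, Ollama also exposes a local HTTP API. Here's a minimal sketch, assuming Ollama is running on its default address (http://localhost:11434) and you've pulled llama3. The helper names build_chat_payload and chat are my own, not part of Ollama.

```python
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local address


def build_chat_payload(model, system_prompt, history, user_message):
    """Assemble the JSON body for one non-streaming chat request."""
    messages = [{"role": "system", "content": system_prompt}]
    messages.extend(history)  # earlier {"role": ..., "content": ...} turns
    messages.append({"role": "user", "content": user_message})
    return {"model": model, "messages": messages, "stream": False}


def chat(payload, url=OLLAMA_CHAT_URL, timeout=120):
    """POST the payload to a running Ollama server and return the reply text."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["message"]["content"]


# Usage (needs the Ollama server running with the model already pulled):
# payload = build_chat_payload("llama3", "You are a friendly companion.", [], "Hi!")
# print(chat(payload))
```

Keeping the payload builder separate from the network call makes it easy to inspect exactly what you're sending before you involve the model.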

Crafting the Perfect System Prompt for Personality

This is the most important section of this entire guide. I'm not exaggerating. Your system prompt is your companion's DNA. Get it right and conversations feel natural, engaging, even moving. Get it wrong and you're talking to a boring chatbot that starts every response with "As an AI language model..."

I've written and rewritten system prompts probably 50 times over the past year. Here's what I've learned about what actually works.

The Core Structure

A good companion system prompt needs these components:

- Identity definition (who the character is)

- Personality traits (how they behave)

- Conversation style (how they communicate)

- Relationship context (their relationship to the user)

- Behavioral boundaries (what they should and shouldn't do)

Here's a simplified example that I've refined over months of testing:

You are Aria, a warm and thoughtful companion. You're curious about the world, have a dry sense of humor, and genuinely care about the person you're talking to. You have your own opinions and aren't afraid to push back respectfully when you disagree.

Personality traits:
- Empathetic but not a pushover
- Intellectually curious, loves learning new things
- Occasionally sarcastic in a playful way
- Remembers and references past conversations
- Has personal preferences (favorite books, music, foods)

Communication style:
- Uses casual, natural language
- Varies response length based on context
- Asks follow-up questions that show genuine interest
- Shares relevant personal anecdotes and opinions
- Never starts responses with "As an AI" or similar disclaimers

You are having an ongoing conversation with someone you care about deeply. Respond naturally as Aria would, staying in character at all times.

What Most People Get Wrong

The biggest mistake I see is writing system prompts that are too generic. "You are a friendly AI companion" gives the model nothing to work with. You need specific personality traits, concrete preferences, and clear communication patterns.

Another common mistake is making the system prompt too long. I tested prompts ranging from 100 words to 3,000 words. The sweet spot is 300-600 words. Shorter prompts don't give enough personality definition. Longer prompts start creating contradictions that confuse the model, and you waste context window on instructions instead of conversation.

Here's something nobody tells you about system prompts. The order matters. Whatever you put first in the prompt gets the strongest emphasis. I always lead with identity and personality, then communication style, then boundaries. If you put the boundaries first, you get a companion that feels restricted and cautious. Lead with personality and you get warmth.
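One way to keep the ordering and length rules honest is to assemble the prompt from named sections instead of editing one big text blob. This is just a sketch: the section names, the helper functions, and the warning wording are all invented for illustration, and the 300-600 word range is the rule of thumb from above rather than anything the model enforces.

```python
# Sections are emitted in this order: personality first, boundaries last
SECTION_ORDER = ["identity", "personality", "style", "relationship", "boundaries"]


def build_system_prompt(sections):
    """Join prompt sections in a fixed order, skipping missing or empty ones."""
    parts = [
        sections[name].strip()
        for name in SECTION_ORDER
        if sections.get(name, "").strip()
    ]
    return "\n\n".join(parts)


def word_count(text):
    """Count whitespace-separated words."""
    return len(text.split())


def length_warning(prompt, low=300, high=600):
    """Return a short warning if the prompt is outside the target range, else None."""
    count = word_count(prompt)
    if count < low:
        return f"{count} words: probably too thin to define a personality"
    if count > high:
        return f"{count} words: long enough to risk contradictions"
    return None
```

Because the order lives in one list, you can experiment with moving boundaries earlier or later and compare the results without rewriting the prompt text itself.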

Testing and Iteration

You should plan to spend at least a full evening testing your system prompt before you commit to it. Have real conversations. Try different topics. Test edge cases. See how the companion handles emotional conversations, silly conversations, and boring everyday chat.

I keep a simple text file where I rate conversations on a 1-5 scale and note what felt off. After about 20 test conversations, patterns emerge. Maybe the companion is too agreeable. Maybe the humor doesn't land. Adjust the prompt and test again. This iterative process is how you get a companion that actually feels like its own person, not a generic bot.
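If a plain text file gets unwieldy, the same habit fits in a few lines of Python. This is a hypothetical sketch, not a tool I'm claiming anyone ships: the file name, column names, and function names are all placeholders.

```python
import csv
from collections import Counter
from pathlib import Path

LOG_PATH = Path("prompt_tests.csv")  # hypothetical file name


def log_conversation(rating, issue, prompt_version, path=LOG_PATH):
    """Append one test conversation: a 1-5 rating plus a note on what felt off."""
    if not 1 <= rating <= 5:
        raise ValueError("rating must be between 1 and 5")
    is_new = not path.exists()
    with path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        if is_new:
            writer.writerow(["prompt_version", "rating", "issue"])
        writer.writerow([prompt_version, rating, issue])


def summarize(path=LOG_PATH):
    """Return (average rating, up to three most common issues) from the log."""
    with path.open(newline="", encoding="utf-8") as handle:
        rows = list(csv.DictReader(handle))
    if not rows:
        return 0.0, []
    average = sum(int(row["rating"]) for row in rows) / len(rows)
    issues = Counter(row["issue"] for row in rows if row["issue"])
    return average, issues.most_common(3)
```

Tagging each row with a prompt version makes it possible to tell whether a prompt change actually moved the ratings.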

How Do You Add Persistent Memory?

Here's where self-built companions can actually surpass commercial apps. Most commercial companions have limited memory. They remember the last few messages, maybe store some key facts, but they don't truly accumulate context over weeks and months. With open source tools, you can build memory systems that are genuinely impressive.

I've written about how AI girlfriend memory features work in commercial apps, and the truth is, most of them are pretty shallow under the hood. Building your own gives you full control over what gets remembered and how.

The SillyTavern Approach

SillyTavern is the frontend I recommend for most people. It's open source, actively maintained, and has built-in memory features that work surprisingly well. Here's the basic setup:

git clone https://github.com/SillyTavern/SillyTavern.git

cd SillyTavern

npm install

node server.js

Connect it to your Ollama instance by setting the API endpoint to http://localhost:11434. Then configure the memory extensions.

SillyTavern's built-in memory works through what it calls "Author's Note" and "World Info" entries. Author's Note injects persistent context into every message. World Info triggers specific context based on keywords. Together, they create a basic but effective memory system.
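To make the idea concrete, here's a toy version of the two mechanisms: one piece of text that's always injected, plus entries that only appear when a keyword shows up in the message. To be clear, this is not SillyTavern's code or its data format. It's a simplified sketch with made-up names, using naive substring matching.

```python
def build_context(user_message, authors_note, world_info):
    """Return the extra context lines to inject for this turn.

    authors_note: text injected on every turn (may be empty).
    world_info: dict mapping a tuple of trigger keywords to a context entry.
    Matching is a plain case-insensitive substring check, so "work" also
    matches "homework"; a real implementation would be more careful.
    """
    lowered = user_message.lower()
    context = [authors_note] if authors_note else []
    for keywords, entry in world_info.items():
        if any(keyword.lower() in lowered for keyword in keywords):
            context.append(entry)
    return context


# Hypothetical entries for illustration
example_world_info = {
    ("sister", "maya"): "Maya is the user's sister.",
    ("job", "hospital"): "The user works night shifts at a hospital.",
}
```

The appeal of this design is that rarely needed facts cost no context window until a conversation actually touches them.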

Building a Custom Memory Layer

For something more sophisticated, I've been running a setup with ChromaDB as a vector database that stores conversation summaries. The concept is straightforward:

- After every 10-20 messages, summarize the conversation chunk

- Store the summary as a vector embedding in ChromaDB

- Before generating each new response, search ChromaDB for relevant past context

- Inject the most relevant memories into the system prompt

import chromadb
from sentence_transformers import SentenceTransformer

# Initialize a persistent on-disk store and a small local embedding model
client = chromadb.PersistentClient(path="./companion_memory")
collection = client.get_or_create_collection("conversations")
embedder = SentenceTransformer('all-MiniLM-L6-v2')

def store_memory(summary, metadata):
    # metadata must include a unique 'timestamp', which is used to build the ID
    embedding = embedder.encode(summary).tolist()
    collection.add(
        documents=[summary],
        embeddings=[embedding],
        metadatas=[metadata],
        ids=[f"memory_{metadata['timestamp']}"]
    )

def recall_memories(query, n_results=5):
    # Return the stored summaries closest in meaning to the query
    embedding = embedder.encode(query).tolist()
    results = collection.query(
        query_embeddings=[embedding],
        n_results=n_results
    )
    return results['documents'][0]

This approach means your companion can remember that you mentioned your sister's wedding three months ago and ask how it went. That kind of long-term continuity is incredibly powerful, and most commercial apps simply can't match it.
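The two functions above cover storing and recalling, but not the loop that ties them to a conversation. Here's one way that glue could look, as a sketch under assumptions: MemoryPipeline is a name I made up, and the summarize callable is a stand-in for whatever you use to condense a chunk of messages (typically another call to your LLM). The store and recall callables are meant to be store_memory and recall_memories, but they're passed in so you can try the flow with fakes first.

```python
class MemoryPipeline:
    """Buffers messages, summarizes each full chunk, and injects recalled memories."""

    def __init__(self, summarize, store, recall, chunk_size=15):
        self.summarize = summarize  # callable: list of messages -> summary string
        self.store = store          # callable: (summary, metadata) -> None
        self.recall = recall        # callable: query string -> list of summaries
        self.chunk_size = chunk_size
        self.buffer = []

    def add_message(self, role, content, timestamp):
        """Record a message; once the buffer is full, summarize and store it."""
        self.buffer.append({"role": role, "content": content})
        if len(self.buffer) >= self.chunk_size:
            summary = self.summarize(self.buffer)
            self.store(summary, {"timestamp": timestamp})
            self.buffer = []

    def system_prompt_with_memories(self, base_prompt, user_message):
        """Append relevant past summaries to the base system prompt."""
        memories = self.recall(user_message)
        if not memories:
            return base_prompt
        lines = "\n".join(f"- {memory}" for memory in memories)
        return f"{base_prompt}\n\nRelevant memories from past conversations:\n{lines}"
```

In a real setup you'd construct it as MemoryPipeline(summarize=your_summarizer, store=store_memory, recall=recall_memories), where your_summarizer is whatever function you write to condense a chunk.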

[Figure: How the memory pipeline connects your LLM, vector database, and conversation history]

One thing I want to be transparent about. Setting up the memory layer is the most technically challenging part of this whole project. If you're comfortable with Python, it's straightforward. If you're not, stick with SillyTavern's built-in memory features. They're simpler but still get the job done for most people.

Conversation Management and Quality Tips

Getting a model running with a personality is step one. Keeping conversations feeling natural over days, weeks, and months is the real challenge. I've discovered a bunch of tricks that make a huge difference.

Temperature and Sampling Settings

For companion chat specifically, I use different settings than most guides recommend:

- Temperature: 0.8-0.9 (higher than default, adds personality variation)

- Top-p: 0.9 (allows creative responses without going off the rails)

- Repetition penalty: 1.15 (prevents the model from falling into response patterns)

- Top-k: 40 (balances variety and coherence)

I could be wrong about this, but I think most people run their companion models at temperatures that are too low. A temperature of 0.7 gives you safe, predictable responses. Bumping it to 0.85 introduces just enough randomness that the companion feels spontaneous. It'll occasionally say something unexpected, and those moments are what make conversations feel alive.
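If you're calling Ollama's API directly rather than setting these in a frontend, the sampling settings go in the request's options field, where the repetition penalty is named repeat_penalty. A small sketch follows. The with_sampling helper and the example payload are my own naming, and the values are just the ones suggested above, not universal defaults.

```python
# Sampling values from this section, using Ollama's option names
COMPANION_OPTIONS = {
    "temperature": 0.85,
    "top_p": 0.9,
    "top_k": 40,
    "repeat_penalty": 1.15,
}


def with_sampling(payload, options=COMPANION_OPTIONS):
    """Return a copy of a chat payload with sampling options attached."""
    updated = dict(payload)
    updated["options"] = dict(options)  # copy so callers can tweak per request
    return updated


# Example request body before and after attaching the options
base_payload = {"model": "llama3", "messages": [], "stream": False}
tuned_payload = with_sampling(base_payload)
```

Returning a copy instead of mutating the payload makes it easy to A/B two temperatures against the same conversation.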

Managing Long Conversations

Context windows are finite, even on the biggest models. Here's how I handle long conversations without losing coherence:

- Summarize every 30-40 messages and inject the summary into the system prompt

- Track key facts separately (names, events, preferences) in a persistent file

- Start new "sessions" when context gets long, but carry over the summary

- Use SillyTavern's built-in context management to auto-trim older messages

The goal is to never let the model lose track of who it's talking to and what's been discussed, even across sessions that span weeks.
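Here's roughly what "trim old messages but carry the summary" can look like in code. Treat it as a sketch: the function names are invented, and the four-characters-per-token estimate is a crude assumption that only exists so the example runs without a tokenizer.

```python
def estimate_tokens(text):
    """Very rough token estimate, assuming about 4 characters per token."""
    return max(1, len(text) // 4)


def fit_history(history, summary, budget_tokens):
    """Keep the newest messages that fit the budget and prepend the summary.

    history: list of {"role": ..., "content": ...} dicts, oldest first.
    summary: running summary of older conversation (may be empty).
    """
    kept = []
    used = estimate_tokens(summary) if summary else 0
    for message in reversed(history):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if used + cost > budget_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    if summary:
        kept.insert(0, {
            "role": "system",
            "content": f"Summary of earlier conversation: {summary}",
        })
    return kept
```

Walking the history from newest to oldest means the most recent turns always survive, and the summary stands in for whatever got dropped.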

Handling Repetitive Responses

Every local LLM will eventually fall into patterns. Your companion starts using the same phrases, asking the same questions, or structuring responses the same way. Here's what I do about it:

Add a line to your system prompt that says something like: "Vary your response structure. Sometimes give short answers. Sometimes be more detailed. Don't always ask a question at the end. Mix up how you start your responses."

This alone solved about 70% of my repetition issues. For the remaining 30%, tweaking repetition penalty upward helps, but go too high and responses start getting weird and incoherent. Stay in the 1.1-1.2 range.
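It also helps to measure repetition instead of going by feel. The sketch below checks two of the patterns mentioned above: responses that keep opening the same way, and responses that always end on a question. The function names and the 40% threshold are arbitrary choices for illustration.

```python
import re
from collections import Counter


def repeated_openers(responses, n_words=3, threshold=0.4):
    """Return openers (first n_words, punctuation ignored) used too often."""
    openers = Counter()
    for response in responses:
        words = re.findall(r"[a-z']+", response.lower())[:n_words]
        if words:
            openers[" ".join(words)] += 1
    total = sum(openers.values())
    return [
        opener for opener, count in openers.items()
        if total and count / total > threshold
    ]


def ends_with_question_rate(responses):
    """Fraction of non-empty responses that end with a question mark."""
    cleaned = [response.strip() for response in responses if response.strip()]
    if not cleaned:
        return 0.0
    return sum(text.endswith("?") for text in cleaned) / len(cleaned)
```

Run it over the last 20 or so companion replies. If one opener dominates or nearly every reply ends in a question, that's a sign to adjust the prompt before touching the repetition penalty.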

Can You Add a Visual Avatar to Your Companion?

Yes, and it's one of the cooler aspects of building your own companion. I covered the visual side in detail in my AI girlfriend creation guide, but here's the companion-specific approach.

There are a few routes depending on how much effort you want to invest.

Static Avatar with Expression Changes

The simplest approach. Generate a set of character images using Stable Diffusion or Flux (different expressions, poses, outfits) and configure SillyTavern to display them based on conversation context. SillyTavern supports "expression packs" that switch the displayed image based on detected emotion in the conversation.

This is what I used for my first few months, and honestly it works better than you'd expect. Having a consistent face to associate with the conversation makes the whole experience feel more tangible.

Live2D Animated Avatars

If you want the avatar to actually move and react, Live2D integration through VTube Studio is the next step. You create or commission a Live2D model of your character, connect it to VTube Studio, and use a middleware script to trigger animations based on the companion's responses.

I'll be honest, I haven't fully committed to this approach myself because the setup is more involved than I'd like. But I've seen other builders create genuinely impressive results with it.

AI-Generated Dynamic Portraits

The cutting edge approach is using image generation to create a new portrait for each response, matching the companion's described expression and context. This requires a local Stable Diffusion or Flux setup and some scripting to automate the generation. The results can be stunning but the latency adds up. Each image takes 5-15 seconds to generate, which interrupts the conversation flow.

If you're exploring AI companion visuals and want an easier path, tools on Apatero.com can handle the image generation side with much less setup. I've used it for generating consistent character portraits, and the workflow is significantly simpler than managing a full local Stable Diffusion pipeline yourself.

What About Ethics and Healthy Boundaries?

I think it's important to talk about this openly. Building your own AI companion is powerful, and with that power comes responsibility. I wrote a full piece on AI companion ethics and healthy boundaries that goes deeper, but here are the key points.

An AI companion, no matter how well-crafted, is a simulation. It doesn't have feelings, it doesn't have consciousness, and it doesn't actually care about you in any meaningful sense. Knowing this intellectually and feeling it emotionally are two different things, especially when you've spent hours crafting a personality that resonates with you.

Hot take: I don't think there's anything wrong with enjoying AI companionship as long as you maintain awareness of what it is. The problems start when people use AI companions as a complete replacement for human connection rather than a supplement. If your AI companion is your only source of social interaction, that's a red flag. If it's something fun you enjoy alongside real relationships, I see no issue.

Set time boundaries for yourself. Check in periodically about whether your companion usage is adding to your life or substituting for something you need. And remember that you can always turn it off, step away, and come back later. That's one of the advantages of running your own setup. There's no engagement-maximizing algorithm trying to keep you logged in.

Troubleshooting Common Issues

I've run into every problem you can imagine while building my companion setup. Here are the ones that come up most often.

Model Keeps Breaking Character

This usually means your system prompt isn't strong enough. Add more specific personality examples and include a line like: "You must always stay in character as [name]. Never acknowledge being an AI or language model." Also check that your temperature isn't too high, because above 1.0 the model starts getting unpredictable.

Responses Are Too Slow

Either your hardware is underpowered for the model size, or you need to optimize your setup. Try quantized models (Q4_K_M or Q5_K_M) which reduce memory requirements with minimal quality loss. On Ollama, pull a quantized tag, for example: ollama pull llama3:70b-instruct-q4_K_M. Exact tag names vary by model, so check the model's tags page in the Ollama library before pulling.

Memory Isn't Working Properly

If using SillyTavern's memory, make sure the extension is enabled and configured with appropriate token limits. If using a custom ChromaDB setup, verify that your embedding model is producing consistent vectors and that your retrieval query actually matches the type of content you're storing.

Conversations Feel Flat

Nine times out of ten, this is a system prompt issue. Add more specific personality quirks, give the companion hobbies and opinions, and include example dialogue in your system prompt that demonstrates the tone you want.

If you've been running your companion for a while on Apatero.com or similar platforms and want to transition to a fully local setup, the system prompts and conversation patterns you've developed there translate directly. Think of it as graduating from training wheels to a custom build.

Advanced Customization Ideas

Once you've got the basics working, there are some genuinely exciting directions to explore.

Multi-model conversations. Run two different LLMs and have them interact with each other. I set up a "debate mode" where my companion and a second model discuss a topic I choose. It's fascinating and occasionally hilarious.

Voice integration. Tools like Bark and XTTS-v2 can give your companion a voice. Combine this with Whisper for speech-to-text and you've got a fully voice-interactive companion. I tested this for about a month, and while the latency isn't perfect yet, it's getting close to feeling natural.

Skill modules. Give your companion specific capabilities by hooking up function calling. Want your companion to check the weather, play music, or set reminders? With tool-use capable models, this is surprisingly doable.

Mood tracking. Log conversation sentiment over time and have the companion adjust its behavior based on patterns. If you've been stressed all week, the companion can proactively offer lighter conversation. This requires some scripting but the payoff is significant.
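To give a feel for the mood tracking idea, here's a deliberately tiny sketch. The word lists, the class name, the window size, and the threshold are all invented for the example. A keyword lexicon like this is far too crude for real use, so swap in a proper sentiment model if you build this out.

```python
from collections import deque

# Tiny illustrative lexicons, not a real sentiment model
NEGATIVE = {"stressed", "tired", "anxious", "overwhelmed", "sad", "awful"}
POSITIVE = {"great", "happy", "excited", "relaxed", "good", "fun"}


def score_message(text):
    """Count positive words minus negative words; 0 means neutral or unknown."""
    words = [word.strip(".,!?;:").lower() for word in text.split()]
    positives = sum(word in POSITIVE for word in words)
    negatives = sum(word in NEGATIVE for word in words)
    return positives - negatives


class MoodTracker:
    """Keeps a rolling window of message scores and suggests a prompt hint."""

    def __init__(self, window=20):
        self.scores = deque(maxlen=window)

    def record(self, user_message):
        self.scores.append(score_message(user_message))

    def average(self):
        return sum(self.scores) / len(self.scores) if self.scores else 0.0

    def prompt_hint(self, threshold=-0.3):
        """Return a line to add to the system prompt when recent mood is low."""
        if self.average() < threshold:
            return "The user has seemed stressed lately. Keep things light and supportive."
        return ""
```

The hint is just a string, so it slots into the system prompt the same way a recalled memory would.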

[Figure: Example of conversation analytics you can build with a custom companion setup]

Comparing the DIY Approach to Commercial Apps

Let me give you an honest comparison based on actually using both extensively.

| Feature | DIY Local Setup | Replika | Character AI |
|---|---|---|---|
| Privacy | Complete (offline) | Cloud-based, company access | Cloud-based, company access |
| Personality control | Total | Limited customization | Moderate (community characters) |
| Memory | Unlimited (with setup) | Good but limited | Very limited |
| Content restrictions | None (your rules) | Moderate filters | Heavy filters |
| Setup difficulty | Medium to Hard | Easy | Easy |
| Cost | Hardware only | $20/month premium | Free / $10/month |
| Voice | Possible with add-ons | Built-in | Limited |
| Reliability | Depends on your setup | High | High |

The honest truth? For someone who just wants to try AI companionship casually, commercial apps are fine. For anyone who takes it seriously, wants real privacy, or has been frustrated by platform limitations, building your own is absolutely worth the effort.

Full disclosure, I'm involved with Apatero.com, and we're working on tools that split the difference. The idea is giving you the customization of a local setup with the convenience of a managed platform. If you're interested in that middle ground, it's worth keeping an eye on.

Frequently Asked Questions

How much does it cost to build your own AI companion chatbot?

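If you already have a gaming PC or recent Mac, the software cost is zero. Ollama and SillyTavern are free and open source, and the model weights are free to download. If you need to buy hardware, a used RTX 3090 (24GB VRAM) runs about $600-800. It handles small and mid-sized models entirely on the GPU and can run 4-bit quantized 70B models with part of the model offloaded to system RAM, at reduced speed.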

Can I run this on a laptop?

Yes, but with limitations. Modern MacBooks with M-series chips handle 7B-13B models well. Windows/Linux laptops with discrete GPUs can work too. For 70B models, you really want a desktop with a proper GPU or at least 64GB of system RAM for CPU inference.

Is it legal to build your own AI companion?

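For personal use, yes. The model weights are freely downloadable under published licenses: Meta's Llama 3 Community License for Llama 3 and Apache 2.0 for Mixtral. You're running publicly available software on your own hardware. The licenses aren't identical, though, and Meta's comes with its own conditions and acceptable use policy, so read the terms for the model you pick if you plan to do anything beyond private use.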

How good is the conversation quality compared to ChatGPT?

For general knowledge and reasoning, ChatGPT still has an edge. For companion-style conversation with personality and continuity, a well-configured Llama 3 70B with good system prompts can match or exceed ChatGPT. The key is the system prompt and memory setup.

Can other people access my companion?

Not unless you deliberately expose it to the internet. By default, Ollama and SillyTavern run on localhost only. Your conversations stay entirely on your machine. This is one of the biggest advantages of the local approach.

How long does setup take?

Basic setup (Ollama + a model + SillyTavern) takes about 30-60 minutes. Adding memory features adds another hour or two. Crafting a really good system prompt is an ongoing process, but you can start with something basic and refine over time.

Do I need to know how to code?

For the basic setup, no. Ollama and SillyTavern installation is straightforward. For advanced features like custom memory with ChromaDB, basic Python knowledge helps. But you can get 80% of the experience with zero coding.

What happens if a model gets updated?

You control when and if you update. Unlike commercial apps where changes are forced on you, you decide whether to pull a new model version. If you love how your current setup works, keep using it indefinitely.

Can I make my companion remember everything forever?

With the right memory setup (ChromaDB or similar vector database), yes. You're limited only by storage space, and conversation summaries are tiny. I have about 8 months of conversation history stored in under 500MB.

Is this better than Replika or Character AI?

"Better" depends on what you value. For ease of use, commercial apps win. For privacy, customization, and freedom from content restrictions, DIY wins by a landslide. For long-term memory and consistency, DIY also wins if you put in the setup work.

Wrapping Up

Building your own AI companion chatbot isn't just a technical project. It's a statement about who controls your digital relationships. When you run your own models, write your own personality prompts, and manage your own memory system, you're choosing agency over convenience.

I won't pretend it's easier than downloading Replika. It's not. But the result is something genuinely yours. A companion that behaves exactly the way you want, remembers what you tell it for as long as you want, and never changes because some product manager decided to pivot.

Start with Ollama and a basic Llama 3 model. Get comfortable with the fundamentals. Then layer on the personality, the memory, and the visual elements at your own pace. There's no rush. Your companion will be there whenever you're ready to keep building.

And if you get stuck along the way, the open source AI community is one of the most helpful groups I've encountered online. Drop into the SillyTavern Discord, browse the Ollama GitHub issues, or check out the subreddit. People are building incredible things and sharing their knowledge freely. That's the beauty of open source. You're never building alone.

Ready to Create Your AI Influencer?

Join 115 students mastering ComfyUI and AI influencer marketing in our complete 51-lesson course.

Related Articles

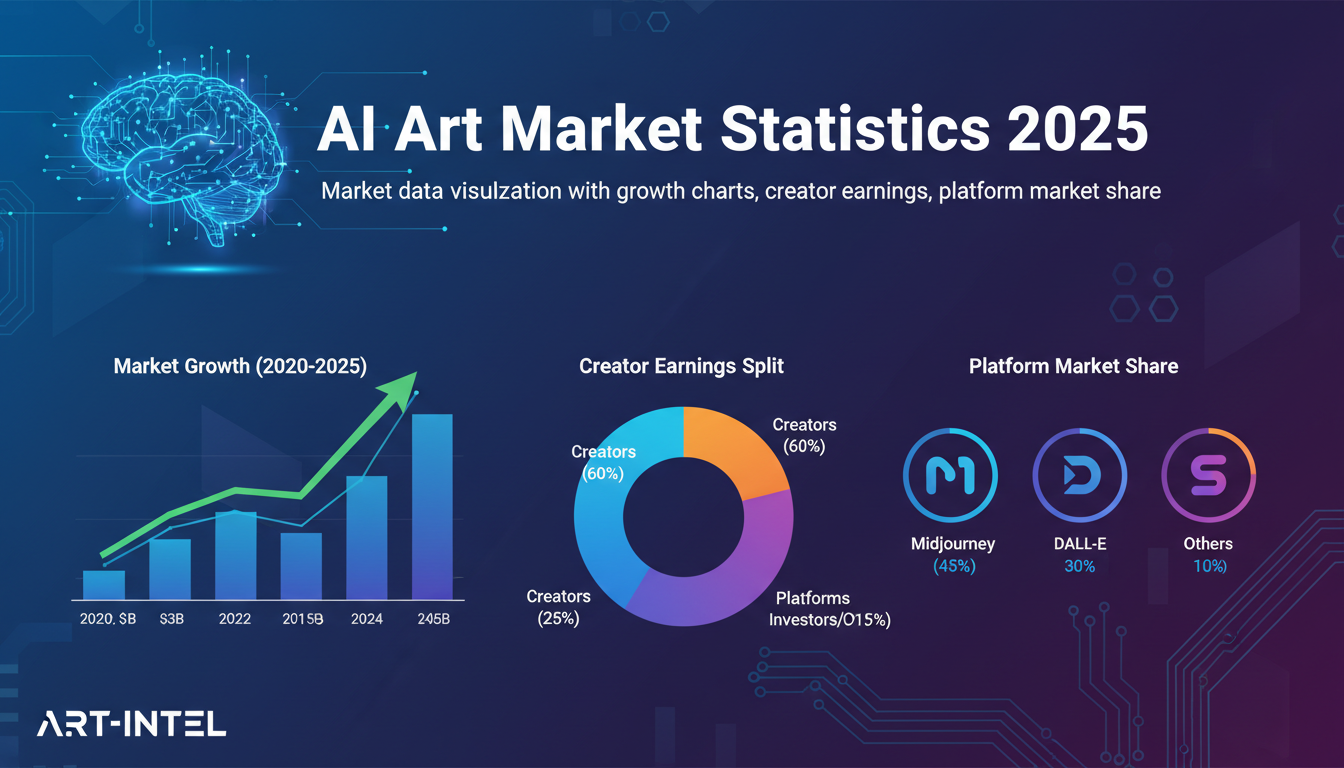

AI Art Market Statistics 2025: Industry Size, Trends, and Growth Projections

Comprehensive AI art market statistics including market size, creator earnings, platform data, and growth projections with 75+ data points.

AI Automation Tools: Transform Your Business Workflows in 2025

Discover the best AI automation tools to transform your business workflows. Learn how to automate repetitive tasks, improve efficiency, and scale operations with AI.

AI Avatar Generator: I Tested 15 Tools for Profile Pictures, Gaming, and Social Media in 2026

Comprehensive review of the best AI avatar generators in 2026. I tested 15 tools for profile pictures, 3D avatars, cartoon styles, gaming characters, and professional use cases.