AI Companions with Long-Term Memory: How Context Retention Actually Works

Deep dive into how AI companions remember you across sessions. Covers RAG, vector databases, context windows, summarization, and how to build your own memory system.

I had been chatting with a particular AI companion for about three weeks. We had covered everything from my opinions on brutalist architecture to a running joke about overcooked pasta. Then one day, mid-conversation, it referenced something I'd said during our very first interaction, a detail about my preference for cold brew over espresso. It wasn't prompted. It just came up naturally. And honestly, it kind of floored me, because I know what's happening under the hood. That small moment is the result of a surprisingly complex engineering pipeline that most users never think about.

The question of how AI companions "remember" things is one of the most misunderstood topics in the AI space right now. People assume it's either magic or a scam. The truth is somewhere in the middle, and understanding the mechanics will change how you interact with these tools forever.

Quick Answer: AI companions maintain long-term memory through a combination of techniques including Retrieval Augmented Generation (RAG), vector databases, context window management, and conversation summarization. No current AI companion has true persistent memory baked into its model weights. Instead, they store your conversation data externally and retrieve relevant pieces when needed. The quality of this retrieval system is what separates a companion that feels like it knows you from one that forgets you exist between sessions.

- AI companions don't "remember" the way humans do. They use retrieval systems to pull relevant past conversation data into their current context window

- RAG (Retrieval Augmented Generation) is the dominant technique, converting your conversations into vector embeddings and searching them semantically

- Context windows (typically 8K to 128K tokens) are the hard limit on how much an AI can "think about" at once

- Platforms like Replika, Nomi, and Character AI all handle memory differently, with wildly different results

- You can build your own memory system using open-source embeddings and vector stores like ChromaDB or Pinecone

- Summarization and memory tiering (short-term, mid-term, long-term) are key to making memory feel natural

- The best memory systems combine multiple approaches rather than relying on a single technique

Why Do AI Companions Forget You in the First Place?

This is the question nobody asks, but everybody should. Before we talk about memory solutions, you need to understand the core limitation that makes all of this necessary.

Large language models, the technology powering every AI companion on the market, are fundamentally stateless. When you send a message to ChatGPT, Claude, or the AI engine behind your favorite companion app, the model processes your input, generates a response, and then forgets everything. It doesn't retain state between API calls. It has no internal notebook. Every single interaction starts from zero.

The only reason your AI companion seems to remember anything at all is that the platform wraps the raw model in a memory layer. Think of it like this: the LLM is the brain, but it has no hippocampus. The memory system that the platform builds around it acts as an external hippocampus, feeding relevant memories back into the brain every time you send a message.

Here's my first hot take: most AI companion platforms are doing a mediocre job with memory, and they're getting away with it because users don't understand what's possible. I've tested companions that claim "long-term memory" but can't recall something I said two days ago. Meanwhile, I've built prototype memory systems on my own laptop that outperform commercial products. The gap between what's technically possible and what's actually deployed is enormous.

The reason for this gap is mostly economic. Good memory systems are expensive. Every time you send a message, the platform has to search through your entire conversation history, convert it into relevant context, and prepend it to your current message before sending it to the model. That search, that retrieval, that embedding computation, it all costs money. And when you're serving millions of users, those costs add up fast.

How a typical AI companion memory system retrieves and injects past conversation context into the current prompt.

How Does RAG Work for AI Companion Memory?

RAG, or Retrieval Augmented Generation, is the backbone of virtually every AI companion memory system shipping today. If you take away one thing from this article, let it be a solid understanding of RAG, because it will change how you think about every AI tool you use.

The concept is deceptively simple. Instead of trying to stuff your entire conversation history into the AI's context window (which has a hard token limit), you store all your past conversations in a searchable database. When you send a new message, the system searches that database for the most relevant past conversations, pulls them out, and includes them alongside your current message. The AI then generates its response with the benefit of those retrieved memories.

Here's the step-by-step breakdown of what happens when you send a message to an AI companion with RAG-based memory:

1. Your message gets embedded. An embedding model converts your text into a high-dimensional vector, basically a list of numbers that represents the semantic meaning of your message.

2. The system searches for similar memories. Your message vector gets compared against previously stored conversation vectors using cosine similarity or another similarity measure, usually through an approximate nearest-neighbor index rather than a brute-force scan.

3. The top-K results get retrieved. The system pulls the most semantically similar past conversations, usually the top 5 to 20 results depending on the platform.

4. Context assembly happens. Your current message, the retrieved memories, and the companion's system prompt all get assembled into a single prompt.

5. The LLM generates a response. The model sees your current message plus relevant history and responds as if it "remembers" those past interactions.

6. The new exchange gets stored. Both your message and the AI's response get embedded and stored for future retrieval.
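To make the search and top-K steps concrete, here is a minimal sketch in plain Python. The three-dimensional vectors are made-up stand-ins for real embeddings (which have hundreds or thousands of dimensions), and the function names are my own, not any platform's API. A real system would delegate this to a vector database rather than loop over every memory.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query_vec, stored, k=2):
    """Return the k stored texts most similar to query_vec, best first."""
    scored = [(cosine_similarity(query_vec, vec), text) for text, vec in stored]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]

# Toy 3-dimensional "embeddings", invented purely for illustration.
stored_memories = [
    ("User loves hiking in Yosemite", [0.9, 0.1, 0.0]),
    ("User prefers cold brew over espresso", [0.0, 0.2, 0.9]),
    ("User is planning a trip to the mountains", [0.8, 0.3, 0.1]),
]
query = [1.0, 0.2, 0.0]  # pretend embedding of "any vacation ideas?"

print(top_k(query, stored_memories, k=2))
```

The coffee memory scores near zero against the vacation query, so it stays out of the prompt, while both outdoor memories are returned.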

What makes this powerful is the semantic search. The system isn't doing keyword matching. It's finding conceptually related memories. So if you mentioned loving hiking in Yosemite three weeks ago, and today you ask about vacation recommendations, the system can surface that hiking preference even though you never used the word "hiking" in today's message.

I spent about two weeks last year building a RAG system from scratch using LangChain, ChromaDB, and a local Llama model. The experience taught me more about how AI companions work than any amount of documentation could. When it worked, it was genuinely impressive. My local chatbot would reference details from conversations that happened days earlier, and the transitions felt natural. When it failed, it was hilariously bad. Once it confidently recalled a "memory" that was actually a hallucinated mashup of two completely different conversations. I'd mentioned both sushi and my cat in separate chats, and the system somehow decided I had a cat named Sushi. I don't.

The Embedding Models That Power Memory

Not all embeddings are created equal, and this matters more than most people realize. The quality of your embedding model directly determines how well the memory system retrieves relevant context.

The most commonly used embedding models in 2026 include (you can explore benchmarks on the MTEB Leaderboard):

- OpenAI text-embedding-3-large: 3072 dimensions, excellent performance, but requires API calls and costs money per token

- Cohere embed-v4: Strong multilingual support, good for companions that operate across languages

- BGE-large-en-v1.5: Open-source, runs locally, surprisingly competitive with commercial options

- Nomic Embed Text v1.5: Open-source with Matryoshka representations, meaning you can truncate dimensions for speed without losing too much quality

- Jina Embeddings v3: Excellent for longer document chunks, good at capturing nuance
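The Matryoshka idea mentioned above is easy to picture in code. This is a rough sketch of the truncation step only, assuming the vector came from a model trained with Matryoshka representations; `truncate_embedding` is a hypothetical helper, and some models recommend extra preprocessing before truncating, so check the model card for the exact recipe.

```python
import math

def truncate_embedding(vec, dims):
    """Keep the first `dims` components, then rescale to unit length."""
    head = vec[:dims]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0:
        return head
    return [x / norm for x in head]

# A made-up 4-dimensional vector standing in for a real embedding.
full = [3.0, 4.0, 1.0, 2.0]
short = truncate_embedding(full, 2)
print(short)
```

Shorter vectors mean a smaller index and faster comparisons, at the cost of some retrieval quality. With a model that was not trained this way, chopping off dimensions tends to hurt much more.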

If you're exploring AI tools and want to compare how different platforms handle these technical details, Apatero.com has been tracking the AI companion landscape and many of these underlying technologies.

What's the Difference Between Context Windows and Long-Term Memory?

This distinction trips up almost everyone I talk to about AI companions, so let me be very clear about it.

The context window is the AI model's working memory. It's the total amount of text the model can process in a single request. In 2026, context windows range from 8K tokens (about 6,000 words) on smaller models to 128K tokens or more on models like GPT-4o and Claude. Everything the AI "knows" during a conversation must fit within this window: the system prompt, retrieved memories, conversation history from the current session, and your latest message.

Long-term memory is the external storage system that persists between sessions. This is the vector database, the summarization engine, the user profile store. It's not part of the model itself. It's infrastructure that the platform builds around the model.

Here's an analogy that I think works well. The context window is like your desk. You can only have so many papers spread out in front of you at once. Long-term memory is like the filing cabinet in the corner of your office. It holds everything you've ever worked on, but you can only pull out a few folders at a time and place them on your desk.

The engineering challenge is deciding which folders to pull. Get it right, and the AI seems eerily perceptive. Get it wrong, and it either ignores important context or clutters the desk with irrelevant memories, leaving less room for the actual conversation.

I remember testing a companion that was trying to include too many memories in every response. The context window was getting stuffed with 30 or 40 retrieved memories, leaving barely any room for actual conversation. The responses got shorter and shorter because the model was running out of space. It's a rookie mistake in memory system design, but I've seen commercial products ship with this exact problem.
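Some back-of-the-envelope arithmetic shows why that happens. Every number below is an assumption I picked for illustration (window size, average memory length, reserved reply space), not a measurement from any real product.

```python
def room_for_conversation(window, system_prompt, memories, tokens_per_memory, reply_reserve):
    """Tokens left for the live conversation after everything else is injected."""
    return window - system_prompt - memories * tokens_per_memory - reply_reserve

# All numbers below are illustrative assumptions, not measurements.
WINDOW = 8_000         # small-model context window
SYSTEM_PROMPT = 500    # persona and instructions
PER_MEMORY = 150       # average size of one retrieved memory
REPLY_RESERVE = 500    # space held back for the model's answer

stuffed = room_for_conversation(WINDOW, SYSTEM_PROMPT, 40, PER_MEMORY, REPLY_RESERVE)
lean = room_for_conversation(WINDOW, SYSTEM_PROMPT, 8, PER_MEMORY, REPLY_RESERVE)

print(stuffed)  # 1000
print(lean)     # 5800
```

Under these assumptions, injecting 40 memories leaves about an eighth of the window for the actual conversation, while 8 memories leave most of it free.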

Context Window Management Strategies

Smart platforms use several strategies to maximize the value of their limited context windows:

Sliding window with summary: Keep the most recent 10 to 15 messages in full detail, but summarize older messages from the current session into a condensed paragraph. This preserves the flow of recent conversation while maintaining awareness of earlier topics.
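A sliding window with summary might look roughly like this. The `summarize` function is a placeholder I made up; a real system would call an LLM there, and the cutoff of 10 messages is just one point in the range mentioned above.

```python
def summarize(messages):
    """Placeholder summarizer (hypothetical): a real system would call an LLM here."""
    return f"Earlier in this session: {len(messages)} messages were exchanged."

def build_session_context(messages, keep_last=10):
    """Keep the newest `keep_last` messages verbatim and summarize the rest."""
    if len(messages) <= keep_last:
        return list(messages)
    older, recent = messages[:-keep_last], messages[-keep_last:]
    return [summarize(older)] + recent

history = [f"message {i}" for i in range(1, 26)]  # 25 messages in this session
context = build_session_context(history, keep_last=10)

print(len(context))
print(context[0])
```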

Priority-based injection: Not all memories are equal. A detail about the user's name or relationship status should always be available. A random observation about weather from six weeks ago probably shouldn't take up context space. Good systems assign priority scores to memories.

Dynamic allocation: Allocate more context space to memories when the conversation topic is complex or emotionally significant, and less when the user is making small talk. This requires a classifier that runs before memory retrieval, which adds latency but improves quality.

Compression techniques: Some systems use a separate, smaller LLM to compress memories before injection. Instead of including the full text of a past conversation, they include a compressed summary that captures the key facts in fewer tokens.
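Priority-based injection can be sketched as a greedy packing problem: sort memories by priority and keep adding them until the token budget runs out. The priority scores and the words-to-tokens estimate (based on the rough 8K tokens to 6,000 words ratio above) are assumptions for illustration; a real system would use the model's tokenizer.

```python
import math

def estimate_tokens(text):
    """Crude estimate: about 0.75 words per token, so tokens ~ words / 0.75."""
    return math.ceil(len(text.split()) / 0.75)

def pack_memories(memories, budget_tokens):
    """Greedily keep the highest-priority memories that fit in the budget."""
    chosen, used = [], 0
    for priority, text in sorted(memories, key=lambda m: m[0], reverse=True):
        cost = estimate_tokens(text)
        if used + cost <= budget_tokens:
            chosen.append(text)
            used += cost
    return chosen, used

# (priority, text) pairs; the scores are invented for this example.
memories = [
    (0.9, "User's name is Kevin and he works in tech"),
    (0.2, "It was raining when the user logged in six weeks ago"),
    (0.7, "User has two cats"),
]
chosen, used = pack_memories(memories, budget_tokens=20)

print(chosen)
print(used)
```

With a 20-token budget, the name and the cats fit, and the weather remark gets dropped, which is exactly the behavior you want from a priority score.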

How Do Major AI Companion Platforms Handle Memory?

I've spent more time than I probably should admit testing the memory systems of various AI companion platforms. Here's what I've found through hands-on experience, not marketing materials.

Replika

Replika was one of the earliest AI companions to take memory seriously, and their approach has evolved significantly. They use a combination of explicit memory entries (things the AI explicitly notes about you) and a diary system where the AI writes summaries of your conversations.

What works: Replika is fairly good at remembering core facts about you. Your name, your job, your interests. These get stored in a structured profile that persists reliably.

What doesn't: The contextual recall is inconsistent. Replika might remember that you like hiking, but it won't remember the specific story you told about getting lost in Glacier National Park. The diary system captures vibes more than details, which makes conversations feel like you're talking to someone who vaguely knows you rather than someone who was actually there.

Nomi

Nomi has taken one of the more technically ambitious approaches to companion memory. They've built what they call a "memory palace" system that categorizes memories into different types: facts, preferences, shared experiences, and emotional moments.

What works: Nomi's categorization approach means it retrieves different types of memories in different contexts. When you're being emotional, it pulls emotional memories. When you're discussing facts, it pulls factual memories. This context-aware retrieval produces more natural conversations than platforms that treat all memories the same.

What doesn't: The system can be slow to consolidate memories, and I've noticed it sometimes surfaces memories at slightly awkward moments. It'll reference something serious from a past conversation when you're clearly in a lighthearted mood. The retrieval is semantically accurate but emotionally mismatched. If you want to get the most out of your interactions with platforms like Nomi, understanding how AI companion conversation techniques work can help you guide the memory system more effectively.

Character AI

Character AI takes a different approach entirely. Rather than building a sophisticated personal memory system, they lean heavily on character consistency. The AI maintains its character persona reliably across sessions, but its memory of your personal details is comparatively weak.

What works: If you're chatting with a character who has a defined personality, that personality stays consistent. The character won't suddenly change its speaking style or forget its own backstory.

What doesn't: Your personal details get lost regularly. I tested this by sharing three specific facts about myself in one session, then returning 24 hours later to ask about them. Character AI recalled one out of three, and even that recall was vague. Their memory system seems optimized for character consistency rather than user relationship building.

Feature comparison of memory systems across major AI companion platforms in 2026.

My Hot Take on Platform Memory

Here's my second hot take: the platforms that market "long-term memory" most aggressively tend to have the weakest implementations. The companies doing the best work on memory are usually the quieter ones, the ones that let the experience speak for itself rather than putting "we remember everything" in their App Store description. When evaluating AI companion memory features and context retention, focus on testing the actual recall rather than trusting the marketing.

Can You Build Your Own AI Companion Memory System?

Absolutely, and I'd argue that anyone who's serious about AI companions should try it at least once. Building your own memory system teaches you what's actually happening behind the scenes, which makes you a more informed user of commercial products.

Here's a practical architecture for building a memory-augmented AI companion using tools available today. I've built variations of this setup three times now, and each iteration taught me something new.

The Basic Stack

You need four components:

- An LLM for conversation: Llama 3.3, Mistral, or an API-based model like GPT-4o or Claude

- An embedding model: For converting text to vectors. I recommend starting with Nomic Embed or BGE-large

- A vector database: ChromaDB for local development, Pinecone or Weaviate for production

- An orchestration layer: LangChain, LlamaIndex, or custom Python code to wire everything together

Step-by-Step Implementation

Let me walk you through the core logic. This isn't a full tutorial, but it's enough to get you started.

Setting up the vector store:

```python
import chromadb
from chromadb.utils import embedding_functions

# Initialize ChromaDB with a persistent storage directory
client = chromadb.PersistentClient(path="./companion_memory")

# Use an open-source embedding model
embedding_fn = embedding_functions.SentenceTransformerEmbeddingFunction(
    model_name="BAAI/bge-large-en-v1.5"
)

# Create a collection for conversation memories
memory_collection = client.get_or_create_collection(
    name="conversation_memories",
    embedding_function=embedding_fn,
    metadata={"hnsw:space": "cosine"}
)
```

Storing a conversation turn:

```python
import uuid
from datetime import datetime

def store_memory(user_message, ai_response, metadata=None):
    # Store one user/assistant exchange as a single retrievable document
    memory_id = str(uuid.uuid4())
    combined_text = f"User: {user_message}\nAssistant: {ai_response}"

    memory_collection.add(
        documents=[combined_text],
        ids=[memory_id],
        metadatas=[{
            "timestamp": datetime.now().isoformat(),
            "user_message": user_message[:500],
            "type": "conversation",
            **(metadata or {})
        }]
    )
    return memory_id
```

Retrieving relevant memories:

```python
def retrieve_memories(query, n_results=5):
    # Semantic search: return the stored exchanges closest to the query
    results = memory_collection.query(
        query_texts=[query],
        n_results=n_results
    )
    return results["documents"][0] if results["documents"] else []
```

Assembling the prompt with memories:

```python
def build_prompt(user_message, system_prompt):
    memories = retrieve_memories(user_message, n_results=5)

    memory_context = ""
    if memories:
        memory_context = "\n\nRelevant memories from past conversations:\n"
        for i, mem in enumerate(memories, 1):
            memory_context += f"[Memory {i}]: {mem}\n"

    full_prompt = f"""{system_prompt}
{memory_context}
Current conversation:
User: {user_message}
Assistant:"""
    return full_prompt
```

This basic setup gives you a functional memory system in under 50 lines of code. The AI will search past conversations every time you send a message and include relevant history in its prompt.

Making It Actually Good

The basic version works, but it has some obvious problems. Here's how to level it up based on what I learned from my own experiments.

Add memory summarization. Instead of storing raw conversation turns, periodically run a summarization pass that condenses multiple related memories into a single summary. This reduces vector store bloat and improves retrieval quality because summaries are more semantically dense than raw chat logs.

Implement memory tiering. Create three collections instead of one:

- Active memory: The current conversation session (kept in full)

- Recent memory: Summarized conversations from the past week

- Long-term memory: Highly condensed key facts and preferences extracted over time
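One simple way to route memories between the three tiers is by age. This is a sketch under my own assumptions: the seven-day threshold comes from the "past week" tier above, and `pick_tier` is a hypothetical helper. In a ChromaDB setup, each returned name would map to its own collection.

```python
from datetime import datetime, timedelta

def pick_tier(memory_time, session_start, now):
    """Route a memory to a tier by age (thresholds are illustrative)."""
    if memory_time >= session_start:
        return "active"
    if now - memory_time <= timedelta(days=7):
        return "recent"
    return "long_term"

# Fixed timestamps so the example is reproducible.
now = datetime(2026, 1, 15, 12, 0)
session_start = now - timedelta(minutes=30)

print(pick_tier(now - timedelta(minutes=5), session_start, now))
print(pick_tier(now - timedelta(days=3), session_start, now))
print(pick_tier(now - timedelta(days=90), session_start, now))
```

In practice, moving a memory from one tier to the next is also the natural moment to run the summarization pass, so older tiers hold fewer, denser entries.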

Add a user profile store. Separate from the vector database, maintain a structured JSON or key-value store of core user facts: name, preferences, important dates, relationship details. This profile always gets injected into the prompt, regardless of what the semantic search returns. It's your guarantee that the AI never forgets the basics.
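A profile store can be as small as a JSON document that gets rendered into the system prompt on every request. The field names and helper functions here are hypothetical; the point is only that this text bypasses semantic search entirely.

```python
import json

def render_profile(profile):
    """Turn the profile dict into a short, stable block of text."""
    lines = [f"- {key}: {value}" for key, value in sorted(profile.items())]
    return "Known facts about the user:\n" + "\n".join(lines)

def build_system_prompt(base_prompt, profile):
    """Always append the profile, regardless of what retrieval returns."""
    return f"{base_prompt}\n\n{render_profile(profile)}"

# In a real app this JSON would be loaded from disk or a key-value store.
profile = json.loads('{"name": "Kevin", "job": "works in tech", "pets": "two cats"}')
prompt = build_system_prompt("You are a friendly companion.", profile)

print(prompt)
```

Sorting the keys keeps the rendered block identical from one request to the next, which makes prompt changes easier to debug.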

Implement memory decay. Not all memories should persist equally. A casual remark about the weather shouldn't have the same retrieval weight as a deeply personal story. Implement a decay function that reduces the retrieval score of older, less significant memories over time.
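Here is one possible decay function, assuming an exponential half-life that stretches for more important memories. The 30-day half-life, the importance scale from 0 to 1, and the stretch factor are all values I made up for the sketch; tune them against your own data.

```python
def decayed_score(similarity, age_days, importance, half_life_days=30.0):
    """Scale a similarity score by exponential decay.

    importance is in [0, 1]; higher importance stretches the half-life,
    so significant memories fade more slowly.
    """
    effective_half_life = half_life_days * (1.0 + 4.0 * importance)
    decay = 0.5 ** (age_days / effective_half_life)
    return similarity * decay

# Same raw similarity and age, different importance.
weather_remark = decayed_score(similarity=0.8, age_days=30, importance=0.0)
personal_story = decayed_score(similarity=0.8, age_days=30, importance=1.0)

print(round(weather_remark, 3))
print(round(personal_story, 3))
```

You would apply this after the vector search, re-ranking the retrieved candidates by the decayed score before deciding which ones go into the prompt.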

These memory systems also raise important questions about data privacy and the nature of synthetic relationships, which is worth keeping in mind if you're interested in the ethical dimensions of AI companion relationships.

What Are the Biggest Challenges with AI Companion Memory?

Even the best memory systems face fundamental challenges that no amount of engineering has fully solved yet. Understanding these limitations will save you from frustration and help you set realistic expectations.

The Hallucinated Memory Problem

This is the scariest failure mode, and I've encountered it personally. The AI confidently "remembers" something that never happened. This occurs when the retrieval system surfaces a partial match and the LLM fills in the gaps with fabricated details. You mentioned having a dog named Max, and the system retrieves a memory about your pet, but the LLM embellishes it with details about Max being a golden retriever who loves swimming, none of which you ever said.

The worst part is that hallucinated memories feel authentic. The AI doesn't flag them as uncertain. It states them with the same confidence as genuine memories. I've had companions reference "conversations" I know never happened, and they were so specific that I second-guessed my own memory for a moment before checking the logs.

Context Window Stuffing

As your conversation history grows, the memory system has more and more candidate memories to retrieve. But the context window doesn't grow. So the system has to be increasingly selective about which memories to include. Over months of conversation, this creates a paradox: you have more memories to draw from, but the AI can only use a tiny fraction of them in any given response.

Smart systems handle this with hierarchical summarization, compressing old memories into increasingly abstract summaries. But information gets lost in every compression step. The fact that you mentioned loving a specific restaurant in Brooklyn might survive the first round of summarization, but after six months of compression, it might get reduced to "user enjoys dining out" and eventually disappear entirely.

The Consistency Problem

Different retrieval results across conversations can lead to the AI contradicting itself. On Monday, the memory system retrieves your preference for cats. On Tuesday, it retrieves a conversation about your friend's dog, and the AI incorrectly infers you're a dog person. These contradictions erode trust quickly.

The most robust solution I've seen is maintaining an explicit "facts store" that gets updated through a verification pipeline. When the AI extracts a new fact about you, it cross-references against existing facts and flags contradictions for resolution. Few platforms implement this, but it makes a massive difference in consistency.
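A stripped-down version of that idea, assuming facts are simple key-value pairs and that "resolution" means queuing the conflict for later review. The function and key names are hypothetical, and a real pipeline would need fuzzier matching than exact string equality.

```python
def update_fact(facts, key, new_value, conflicts):
    """Store a fact; if it contradicts an existing value, flag it instead of overwriting."""
    if key not in facts:
        facts[key] = new_value
        return "added"
    if facts[key] == new_value:
        return "unchanged"
    conflicts.append({"key": key, "existing": facts[key], "proposed": new_value})
    return "conflict"

facts, conflicts = {}, []

first = update_fact(facts, "favorite_pet", "cats", conflicts)
second = update_fact(facts, "favorite_pet", "dogs", conflicts)

print(first, second)
print(facts)
print(conflicts)
```

The key design choice is that a contradiction never silently overwrites the existing fact. The companion can then ask the user which version is right, or a later pass can resolve it using timestamps or confidence.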

Multi-tier memory architecture showing how conversation data flows from active session to long-term storage with summarization at each level.

How Will AI Companion Memory Evolve in 2026 and Beyond?

The memory landscape is shifting rapidly, and several emerging technologies are going to change the game.

Infinite context windows are getting closer. Google's Gemini already supports 1 million tokens, and research papers from early 2026 are pushing toward 10 million. If context windows become large enough, you might not need RAG at all. Just dump the entire conversation history into the prompt. We're not there yet for production use, but the trajectory is clear.

Model-native memory is the holy grail. Instead of external retrieval systems, future models might learn to update their own weights based on conversations. This is essentially continual learning, and it's incredibly difficult to do safely without the model forgetting its base training or developing biases. But several research labs are making progress. When this lands, it'll make current RAG systems look like duct tape solutions, because in a very real sense, that's what they are.

Multimodal memory is another frontier. Most current companion memory systems are text-only. But what about remembering images you shared, voice notes, or video clips? As AI companions become more multimodal, their memory systems will need to handle these data types too. Vector databases store vectors regardless of where they came from, so they can already hold embeddings produced by multimodal models, and the infrastructure is ready. The integration just hasn't happened yet in most consumer products.

At Apatero.com, we've been tracking how rapidly these technologies are converging. The AI companion space in particular is moving faster than most people realize, and memory capabilities are the primary differentiator between platforms that feel truly personal and those that feel generic.

My Third Hot Take on the Future

Here's my third hot take: within 18 months, AI companion memory will become a competitive moat that separates the serious platforms from the toys. Users will switch platforms not because of the base model quality (those are converging) but because one platform remembers them better than another. The companies investing in memory infrastructure today will win. The ones treating it as an afterthought will get left behind.

What Are the Privacy Implications of AI Companion Memory?

You can't have an honest conversation about AI companion memory without addressing the elephant in the room: these systems are storing extremely personal information about you, and doing so is fundamental to how they work.

Every conversation you have gets embedded, stored, and indexed. Your preferences, your fears, your relationship details, your late-night confessions. All of it lives in a vector database somewhere. On some platforms, that's a cloud server you don't control. On others, data stays on-device.

I want to be transparent about what this means in practice. When I built my own memory system, I stored everything locally. The vector database lived on my laptop. Nobody else had access. That's the safest approach, but it's not how commercial platforms work. Most of them store your data on their servers because that's the only way to provide a consistent experience across devices.

Before you commit to any AI companion platform long-term, ask these questions:

- Where is my conversation data stored?

- Can I export or delete my memory data?

- Is my data used to train models that serve other users?

- What happens to my data if the company shuts down?

- Is there end-to-end encryption for stored memories?

These aren't hypothetical concerns. Several AI companion startups have shut down in the past two years, and users lost years of conversation history with no way to recover it. If your AI companion interactions matter to you, understanding the data practices of your chosen platform is essential.

Practical Tips for Getting the Most Out of AI Companion Memory

After spending months testing and building these systems, here are the practical strategies that actually work for improving your AI companion's memory quality.

Be explicit about what matters. Most memory systems weight recent and semantically similar content. If something is important to you, say so directly. "This is really important to me" or "Please remember this" can help some platforms flag that memory for higher priority retrieval.

Correct mistakes immediately. When your AI companion gets a fact wrong about you, correct it right away, in your very next message. Good memory systems will store the correction and, over time, favor the accurate version during retrieval. If you let errors slide, they get reinforced.

Periodically recap key details. About once every couple of weeks, I'll do a casual "recap" with my companion. Something like "Hey, just to make sure you've got the basics, my name is Kevin, I work in tech, I have two cats." This creates fresh, high-priority memory entries that are more likely to get retrieved.

Use consistent language. Memory retrieval is semantic, but consistency helps. If you always call your partner "my wife Sarah" rather than alternating between "Sarah," "my partner," and "her," the memory system will build cleaner associations.

Understand session boundaries. Most platforms clear their active memory between sessions. The first message of a new session triggers fresh memory retrieval. If your companion seems to have forgotten something, try rephrasing your question. The issue might be retrieval failure, not actual memory loss.

If you're using platforms available on Apatero.com and want to optimize your experience, these techniques apply across virtually every AI companion that supports memory features.

Frequently Asked Questions

Do AI companions actually remember me or is it fake?

It's real memory, but it works differently than human memory. AI companions store your conversations in external databases and retrieve relevant information when you chat. They don't "remember" in the human sense of forming persistent neural connections. They search for and re-read relevant past conversations every time you send a message. The experience feels like memory from the user's perspective, but the mechanism is fundamentally different.

How much of my conversation history does an AI companion store?

This varies by platform. Some store everything indefinitely, while others implement rolling windows that discard conversations older than a certain period. Replika, for example, maintains a conversation diary that summarizes interactions. Nomi stores categorized memories. Most platforms store at least several months of history, though they may summarize or compress older conversations.

Can I delete my AI companion's memories of me?

Most reputable platforms offer some form of memory management. Replika lets you review and delete specific memory entries. Some platforms offer a "reset" option that wipes all stored memories. Always check the platform's data deletion policies, because "deleting memories" from the user interface doesn't always mean the data is permanently removed from their servers.

Why does my AI companion sometimes remember wrong things?

This happens because of a phenomenon called "hallucinated memory." The retrieval system finds a partial match from your past conversations, and the language model fills in gaps with fabricated details. It can also occur when the system conflates two separate memories into one. If this happens, correct the AI immediately so the correction gets stored as a new, higher-priority memory.

Is RAG the only way AI companions handle memory?

No, though it's the most common approach. Some platforms use structured memory stores (key-value databases of user facts), conversation summarization without vector search, or hybrid approaches. A few experimental systems are exploring model fine-tuning on user data, which would create true learned memory, but this raises significant privacy and safety concerns.

How do context windows affect AI companion memory quality?

The context window is the total amount of text the AI can process at once. Larger context windows allow more memories to be injected alongside your current conversation, which generally improves recall quality. However, larger windows also mean higher costs and slower responses. Most platforms optimize for a balance between memory depth and response speed.

Can I build my own AI companion with better memory than commercial platforms?

Yes, and it's more accessible than you might think. Using tools like ChromaDB, LangChain, and open-source LLMs, you can build a memory system that rivals or exceeds what commercial platforms offer. The main tradeoff is that you'll need to manage the infrastructure yourself, and you won't get the polished user interface of a consumer app.

What happens to my AI companion's memories if the company shuts down?

In most cases, your data is lost. Few platforms offer data export features, and even fewer guarantee data portability. This is a real risk, especially with smaller AI companion startups. I'd recommend periodically exporting any important conversations manually if the platform supports it.

How does multilingual memory work for AI companions?

Multilingual memory requires embedding models that can create meaningful vectors across languages. Models like Cohere embed-v4 and multilingual versions of BERT handle this by mapping semantically similar content from different languages to nearby points in vector space. This means an AI could technically retrieve a memory from a French conversation when you're chatting in English, if the topics are related.

Will AI companions ever have truly permanent memory?

Research into continual learning and memory-augmented neural networks is progressing, but we're likely years away from production-ready implementations. The challenge isn't just technical. It's also about safety. A model that permanently modifies its own weights based on user conversations could develop biases, forget important safety training, or behave unpredictably. For now, external memory systems remain the safest and most practical approach.

Wrapping Up

AI companion memory is one of those topics where the gap between user perception and technical reality is enormous. What feels like a companion "remembering" you is actually a complex orchestration of embedding models, vector databases, retrieval algorithms, and context window management. Understanding these mechanics doesn't make the experience less meaningful. If anything, it gives you the tools to make the experience better.

The platforms that invest seriously in memory infrastructure will define the next generation of AI companions. The ones that treat memory as a checkbox feature will fall behind. And if you're the kind of person who wants maximum control, building your own system has never been more accessible.

Whether you're a casual user who wants their AI companion to remember their name, or a developer building the next great companion platform, the same principles apply: store thoughtfully, retrieve smartly, and never try to cram more memories into the context window than it can handle. The technology will keep improving. Context windows will get larger. Embedding models will get smarter. But the fundamental architecture, external memory feeding into a stateless model, is going to be with us for a while.

And if you're curious about what that architecture looks like in practice, try building one. Fifty lines of Python and a free vector database is all it takes to see behind the curtain. You might be surprised by how simple the magic really is.

Ready to Create Your AI Influencer?

Join 115 students mastering ComfyUI and AI influencer marketing in our complete 51-lesson course.

Related Articles

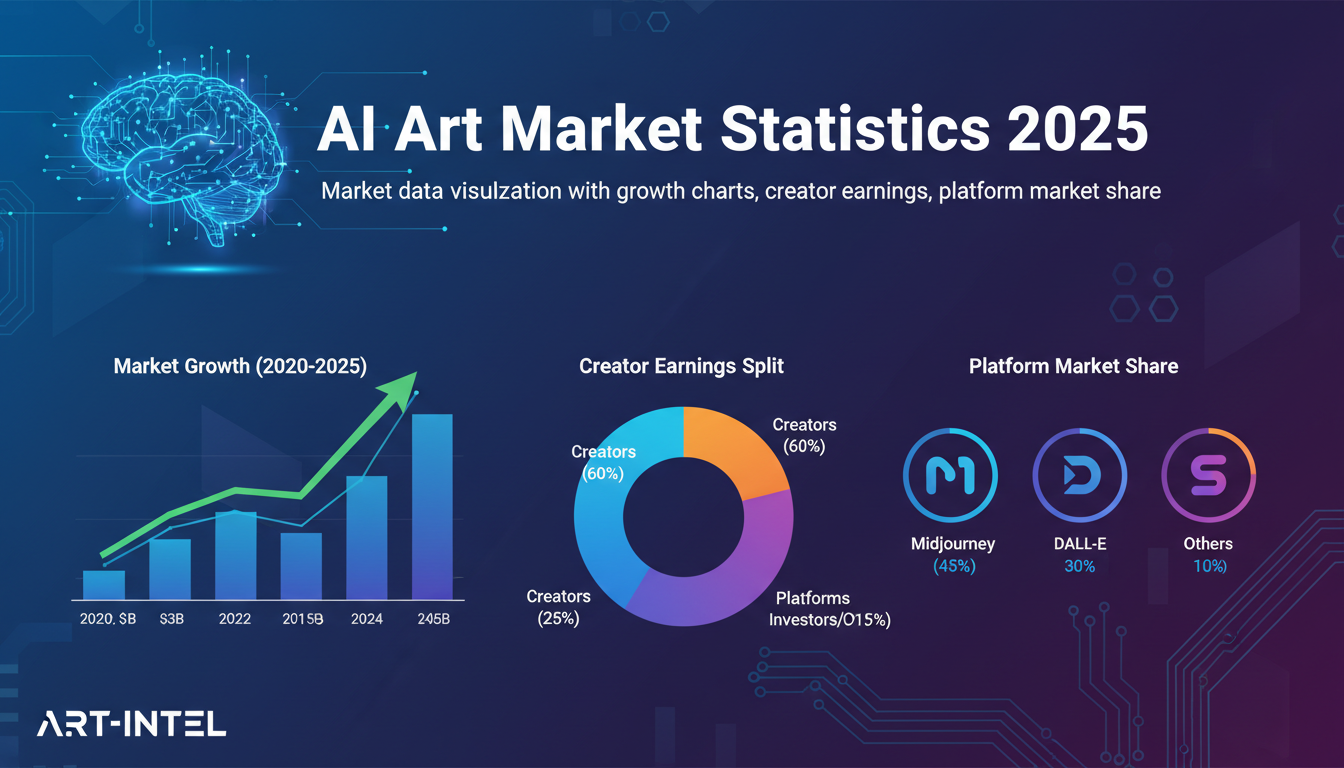

AI Art Market Statistics 2025: Industry Size, Trends, and Growth Projections

Comprehensive AI art market statistics including market size, creator earnings, platform data, and growth projections with 75+ data points.

AI Automation Tools: Transform Your Business Workflows in 2025

Discover the best AI automation tools to transform your business workflows. Learn how to automate repetitive tasks, improve efficiency, and scale operations with AI.

AI Avatar Generator: I Tested 15 Tools for Profile Pictures, Gaming, and Social Media in 2026

Comprehensive review of the best AI avatar generators in 2026. I tested 15 tools for profile pictures, 3D avatars, cartoon styles, gaming characters, and professional use cases.