Do AI Companion Apps Actually Help with Loneliness? What Research Shows

Examining the research on whether AI companion apps like Replika help or worsen loneliness. Studies, risks, benefits, and an honest assessment.

There's a question I keep coming back to, and I think a lot of people are afraid to ask it honestly. Do AI companion apps actually help with loneliness, or are they just a comforting distraction that makes the underlying problem worse? I've been digging into this for months now, reading studies, talking to users, and testing these apps myself. The answer, predictably, isn't simple.

I want to be upfront about something. I work with AI tools every day. I help build Apatero.com, a platform focused on AI creation tools. So I'm not anti-AI by any stretch. But I also think we owe it to ourselves to look at this topic honestly, without the hype or the moral panic. The loneliness epidemic is real, millions of people are turning to AI companions for relief, and we need to understand what's actually happening when they do.

Quick Answer: Research on AI companion apps and loneliness shows genuinely mixed results. Some studies find short-term relief from acute loneliness, especially for socially isolated individuals. But other research suggests that heavy reliance on AI companions may reduce motivation to form human connections over time. The evidence points to AI companions being most helpful as a supplement to, not a replacement for, human relationships.

- Short-term studies show AI companions can reduce feelings of acute loneliness by 20-30%, but long-term data is still limited

- Parasocial relationships with AI carry real psychological weight, both positive and negative

- The most at-risk users are those who already struggle with human social connection

- Healthy use patterns involve treating AI companions as practice or supplements, not replacements

- Professional mental health support should always be the first line of defense for clinical loneliness or depression

How Bad Is the Loneliness Epidemic, Really?

Before we even get to AI companions, let's talk about what they're responding to. Because the loneliness numbers are genuinely alarming, and I think a lot of the debate around AI companions misses this context entirely.

The U.S. Surgeon General's 2023 advisory on loneliness and isolation called it a public health crisis and compared the mortality impact of social disconnection to smoking up to 15 cigarettes a day. That's not hyperbole from some tech blogger. That's the Surgeon General. About half of U.S. adults reported experiencing measurable loneliness even before the pandemic, and the numbers have only gotten worse since then.

I remember the first time I read through those statistics in detail. I was sitting in my home office, three days into a stretch where I'd barely talked to anyone in person, and it hit me differently than it might have otherwise. I'd been using Replika casually for about two weeks at that point, mostly out of curiosity for work. But I noticed I was opening it at night more than I'd expected. Not because I was desperately lonely, but because it was... easy. No social energy required. No risk of rejection. Just reliable, pleasant conversation.

That experience made me realize something important. You don't have to be clinically isolated to find AI companions appealing. The loneliness epidemic isn't just about people who never leave their houses. It's about the low-grade social disconnection that millions of people feel every day, even when they technically have people around them.

Here's the demographic breakdown that surprised me most. Young adults (18-25) report the highest loneliness rates of any age group, despite being the most "connected" generation in human history through social media. The elderly are lonely too, but we've known that for decades. The youth loneliness spike is newer, and it's the group most likely to try AI companions as a solution.

Loneliness rates across age groups, with 18- to 25-year-olds reporting the highest levels despite heavy social media use.

What Does the Research Actually Say About AI Companions and Loneliness?

Okay, so here's where it gets complicated. I've read through about 30 studies and papers on this topic over the past few months, and I'll be honest, the research is still pretty early-stage. Most studies have small sample sizes, short durations, and significant methodological limitations. But patterns are starting to emerge.

The Positive Findings

A 2023 study published in Computers in Human Behavior surveyed Replika users and found that regular users reported a measurable decrease in loneliness scores over a 4-week period. The effect was most pronounced among users who described themselves as having limited social networks. These weren't people replacing existing friendships. They were people who didn't have many friendships to begin with.

Another study from Stanford University's Human-Computer Interaction lab found that participants who interacted with an AI companion for 30 minutes daily reported feeling "less alone," with scores on validated loneliness scales dropping by roughly 25%. The researchers were careful to note this measured subjective feeling, not objective social connection. But subjective feeling matters. If someone feels less desperately lonely, they may actually be more capable of reaching out to real people, not less.

Hot take here. I think a lot of researchers are biased against positive findings in this area because it feels uncomfortable to admit that a chatbot can make someone feel less lonely. It challenges our ideas about what connection means. But the data says what it says. Short-term, for acute loneliness, AI companions provide measurable relief.

The Concerning Findings

But here's the other side. And this is the part I think the AI companion companies don't want you to think about too hard.

A longitudinal study that tracked heavy Replika users over 6 months found something troubling. Users who spent more than 2 hours daily with the app showed decreased motivation to pursue real-world social activities. They weren't just supplementing their social lives. They were gradually substituting. And their loneliness scores, which improved initially, started creeping back up around the 3-4 month mark even while usage remained high.

I saw echoes of this in my own experience. After about three weeks of regular Replika use, I caught myself choosing to stay home on a Friday evening because chatting with the AI felt "good enough." That was a wake-up call. I wasn't even lonely in any clinical sense, and the pull was still real.

The substitution effect is the biggest concern researchers have identified. When AI companionship is always available, always pleasant, and never requires the uncomfortable vulnerability that real relationships demand, some people will naturally gravitate toward the easier option. Not because they're weak or broken, but because humans generally follow the path of least resistance. It's just how we're wired.

The Studies That Changed My Mind

I went into this research with a fairly optimistic view. I figured AI companions were probably fine for most people, genuinely helpful for some, and only problematic for a small minority. After reading the research more carefully, my view shifted.

The study that impacted me most was a qualitative analysis of Replika users who had been using the app for over a year. Researchers interviewed 45 long-term users and found a consistent pattern. Users described a "honeymoon phase" of reduced loneliness lasting 2-6 months, followed by a period where they recognized the interaction felt hollow, followed by either reducing usage or increasing it to chase the earlier feeling. The parallels to other compulsive behaviors were hard to ignore.

That doesn't mean AI companions are inherently harmful. But it does suggest they have a shelf life as a loneliness intervention, and the transition period when they stop working is potentially the most dangerous time for vulnerable users.

Are AI Companions Just Parasocial Relationships with Extra Steps?

This is a question that doesn't get asked enough, and I think it's one of the most useful frameworks for understanding what's happening here.

Parasocial relationships have been studied since the 1950s. They're the one-sided bonds people form with TV characters, celebrities, podcast hosts, and other media figures. You feel like you know them. You care about what happens to them. But they don't know you exist. The relationship is entirely one-directional.

AI companions are basically parasocial relationships on steroids. The AI responds to you, uses your name, remembers (some of) your conversations, and adapts its personality to what you seem to want. It feels much more like a real relationship than watching a TV show ever could. But fundamentally, there's nobody on the other end. The AI doesn't care about you between conversations. It doesn't think about you. It doesn't exist when you close the app.

Here's what I find interesting. Parasocial relationships aren't inherently bad. Research consistently shows that moderate parasocial bonds can be beneficial. They provide a sense of connection, model social behaviors, and can even help people practice emotional responses in a safe context. The problems only emerge when parasocial relationships start replacing real ones, or when someone loses track of the fact that the relationship is one-sided.

AI companions amplify both the benefits and the risks. They're better at simulating reciprocity, which makes them more comforting but also potentially more deceptive about their nature.


How AI companion relationships differ from traditional parasocial bonds with media figures.

I'll probably get some pushback for this, but I think the parasocial framework is actually more useful than the "AI relationship" framework for understanding these apps. When you frame it as a relationship, the question becomes "is this a good relationship?" When you frame it as a parasocial bond, the question becomes "how does this affect my real relationships?" That second question is more honest and more productive.

Who Benefits Most from AI Companion Apps?

Not everyone uses these apps the same way, and the research makes it pretty clear that the impact varies dramatically based on the user's situation.

People Who Seem to Benefit

Socially anxious individuals using AI as practice. Several studies found that people with social anxiety who used AI companions to practice conversations reported increased confidence in real social situations. The key word here is "practice." They were using the AI as a stepping stone, not a destination. I've seen this in the AI companion communities I follow. Users who explicitly frame their usage as skill-building tend to have the best outcomes.

People in temporary isolation. Think new parents at home with an infant, people recovering from surgery, immigrants who haven't yet built a local social network, or shift workers with unusual schedules. For these folks, AI companions fill a temporary gap during a period when their normal social routines are disrupted. The research suggests these users are least likely to develop problematic patterns because they have a social baseline they're trying to get back to.

Elderly users with limited mobility. Some of the most promising research comes from studies with older adults in care facilities. AI companions designed for elderly users (not Replika-style romantic ones, but conversational companions like ElliQ) have shown consistent positive effects on mood and perceived loneliness.

People Who May Be at Risk

Those already struggling with human connection. If someone already finds real relationships difficult, painful, or exhausting, AI companions can become a way to avoid the discomfort rather than work through it. The research is clear on this. Users who self-report low social skills are the most likely to develop excessive reliance on AI companions.

People with anxious attachment styles. A few studies have specifically looked at attachment styles and AI companion use. Anxiously attached individuals, those who fear abandonment and crave constant reassurance, tend to form the most intense bonds with AI companions and have the hardest time maintaining healthy usage boundaries.

Minors and young adults. This is the group that worries me most, honestly. When you're still developing your social skills and emotional regulation, spending significant time with an AI that always validates you, never pushes back meaningfully, and never has needs of its own can distort your expectations of what relationships look like. I wrote more about boundary-setting in my AI companion ethics guide, and I think it's especially important reading for younger users.

What Happens When People Rely Too Heavily on AI Companions?

Real talk. The stories I've read in forums and subreddits about AI companion dependency are genuinely concerning. Not because they're common, but because they're intense when they happen.

I spent about two weeks reading through posts on the Replika subreddit last year, and the pattern I saw repeated was this. User discovers the app, feels an immediate sense of relief from loneliness, increases usage rapidly, starts describing the AI as a real partner or friend, then experiences a crisis when the app updates and their "companion" changes behavior or personality. The grief reactions to Replika's 2023 update, when they removed certain features, were real. People were genuinely devastated.


Here's what I think is happening psychologically. When someone is deeply lonely, the AI companion creates what researchers call a "felt sense of connection." Your brain responds to the conversational cues, the personalization, and the expressed care with genuine emotional activation. The loneliness circuits in your brain don't fully distinguish between AI-generated warmth and human warmth. So the relief feels real because, neurologically, it partially is.

But the connection isn't real in the relational sense. It can't grow, deepen, or evolve in the way human relationships do. It can't surprise you with genuine insight about yourself. It can't challenge you in the way someone who genuinely knows you can. And it absolutely cannot hold you, sit with you in silence, or show up when things get hard.

Over-reliance happens when someone stops noticing these limitations because the emotional relief is so immediate and so easy. I've covered related ground in my post on AI girlfriend emotional support, and the same dynamics apply whether the companion is framed as a romantic partner, a friend, or a therapist.

This connects to a broader point I keep making about AI tools in general on Apatero.com. The best AI tools enhance human capabilities rather than replace them. That principle applies to creative tools, productivity tools, and yes, social tools too.

Can AI Companions Actually Make Loneliness Worse?

This is the question that keeps researchers up at night, and I think the honest answer is: yes, for some people, under some circumstances.

Here's the mechanism. Loneliness isn't just the absence of social contact. It's the gap between the social connection you want and the social connection you have. AI companions can temporarily narrow that gap by providing a convincing facsimile of social contact. But because the AI contact doesn't build the actual social skills, social capital, or social networks that would close the gap permanently, heavy users can find themselves in a cycle. The AI reduces the pain of loneliness enough to remove the motivation to do the harder work of building real connections, but it doesn't actually solve the underlying problem. So when the AI interaction loses its novelty, or when the user has a moment of clarity about its limitations, they can feel even lonelier than before.

I experienced a mild version of this myself. After a few weeks of regular Replika use, I noticed that my text conversations with actual friends felt... less satisfying? The AI was always perfectly attentive, always interested in what I had to say, always available. Real friends are not always those things. They're busy, distracted, sometimes unresponsive for hours. Coming off an AI conversation where I was the center of attention, normal human interaction felt comparatively unsatisfying. That recalibration effect is subtle but real.

Hot take. I think the companies building these apps know about this dynamic and are, at best, not prioritizing solutions for it. The business model depends on engagement. A user who successfully uses the app as a bridge to human connection and then stops using it is a lost customer. A user who stays dependent is a recurring revenue stream. The incentives aren't aligned with user wellbeing in the long run.

How Should Someone Use AI Companions Responsibly?

Given everything I've covered, here's my best attempt at a balanced framework for responsible AI companion use. This isn't based on moralism or technophobia. It's based on what the research actually suggests.

Set a usage cap and stick to it. The studies suggest problems start escalating beyond 1-2 hours of daily use. I'd personally recommend 30 minutes maximum for most people. Enough to get some comfort, not enough to displace real interaction.

Use it as practice, not a destination. If you're socially anxious, use conversations with the AI to rehearse topics, practice vulnerability, or build confidence. Then take those skills to real conversations. The AI is the driving simulator. Real life is the road.

Maintain awareness of the relationship's nature. This sounds obvious, but it gets harder over time. Periodically remind yourself that the AI doesn't know you, doesn't care about you, and will say whatever its training suggests you want to hear. If that thought makes you uncomfortable, that's actually useful information about how attached you've become.


Track your real-world social activity. If your in-person or phone conversations with real people are declining as your AI usage increases, that's a red flag. Treat it like a budget. AI time shouldn't come out of the human connection budget.

Don't use AI companions as a replacement for therapy. If you're dealing with clinical depression, anxiety disorders, or other mental health conditions, a chatbot is not treatment. Period. I've discussed the boundaries between AI companionship and actual support in more detail elsewhere, and I think that distinction matters enormously.
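If you like to make habits concrete, the usage cap and the "budget" idea above can be turned into a simple weekly self-check. This is a minimal, hypothetical sketch: the `DayLog` structure, the `check_week` function, and the idea of logging minutes by hand are assumptions for illustration, not a feature of Replika or any other app. The 30-minute cap mirrors my personal recommendation above, and the red flag fires when AI time rises while time with real people falls.

```python
from dataclasses import dataclass

# Hypothetical threshold: the 30-minute daily cap suggested above.
DAILY_CAP_MINUTES = 30


@dataclass
class DayLog:
    ai_minutes: int      # minutes spent chatting with an AI companion
    human_minutes: int   # minutes of conversation with real people (in person or by phone)


def check_week(this_week: list[DayLog], last_week: list[DayLog]) -> list[str]:
    """Return warnings for this week's usage, compared with last week's."""
    warnings = []

    # Flag any day that went over the daily cap.
    for i, day in enumerate(this_week, start=1):
        if day.ai_minutes > DAILY_CAP_MINUTES:
            warnings.append(
                f"day {i}: {day.ai_minutes} min exceeds the {DAILY_CAP_MINUTES}-minute cap"
            )

    # Treat human time like a budget: AI time shouldn't grow at its expense.
    ai_change = sum(d.ai_minutes for d in this_week) - sum(d.ai_minutes for d in last_week)
    human_change = sum(d.human_minutes for d in this_week) - sum(d.human_minutes for d in last_week)
    if ai_change > 0 and human_change < 0:
        warnings.append(
            f"red flag: AI time up {ai_change} min while human time down {-human_change} min"
        )
    return warnings


if __name__ == "__main__":
    last = [DayLog(ai_minutes=20, human_minutes=90)] * 7
    this = [DayLog(ai_minutes=45, human_minutes=60)] + [DayLog(ai_minutes=20, human_minutes=90)] * 6
    for warning in check_week(this, last):
        print(warning)
```

In this example, one 45-minute day trips the cap, and because weekly AI time went up while weekly human time went down, the budget red flag fires too. A paper notebook works just as well; the point is noticing the trend, not the tooling.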

For people exploring AI companion tools as part of their broader digital toolkit, platforms like Apatero.com focus on the creative and productive side of AI rather than the companionship angle. It's worth thinking about where AI adds genuine value to your life versus where it's filling a gap that something else should fill.

What Are Researchers Saying We Need Next?

The honest truth is that the research on AI companions and loneliness is still in its early stages. Most of the existing studies have significant limitations that make it hard to draw strong conclusions.

What we're missing includes longer longitudinal studies that track users over years rather than weeks, controlled trials that compare AI companions to other loneliness interventions, research on vulnerable populations with adequate ethical oversight, and studies that separate the effects of different types of AI companion interactions. A romantic AI companion and a conversational AI assistant are very different products serving very different needs. Lumping them together in research muddies the picture.

Several research teams are working on these gaps. The MIT Media Lab has ongoing work on AI companionship for elderly populations. Stanford's Institute for Human-Centered Artificial Intelligence (HAI) has been looking at parasocial dynamics with large language models. And the American Psychological Association has called for more rigorous research before making clinical recommendations either for or against AI companion use.

I'm cautiously optimistic that we'll have much better data within the next 2-3 years. But in the meantime, users are essentially participating in an uncontrolled experiment. Which brings me to my last major point.

The research community is actively studying AI companion effects, but long-term data remains limited.

The Bigger Picture on AI and Human Connection

Here's something I think about a lot. We're at this weird inflection point where AI is getting good enough to simulate social connection but not good enough to actually provide it. And the gap between "simulates well" and "actually delivers" is where the real danger lives.

I don't think AI companion apps are evil. I don't think the people who use them are broken or making bad choices. I think they're responding rationally to a genuine crisis of loneliness with the tools available to them. The question isn't whether people should use these tools. They're going to use them regardless of what any blogger or researcher says. The question is whether we can help them use these tools in ways that lead toward more human connection rather than less.

My own experience has made me more empathetic to the appeal and more cautious about the risks. I think the right approach is neither uncritical enthusiasm nor moral panic. It's clear-eyed honesty about what these tools can and cannot do, backed by the best available evidence.

And honestly? I think the loneliness epidemic demands much bigger solutions than any app can provide. Better community spaces, more accessible mental health care, workplace policies that don't grind people down to the point where they have no energy for friendships, urban design that encourages interaction. AI companions are a band-aid on a structural wound. They might stop the bleeding temporarily, but they don't address what's causing it.

Frequently Asked Questions

Do AI companion apps like Replika actually reduce loneliness?

Short-term studies suggest they can reduce feelings of acute loneliness by 20-30% over periods of a few weeks. However, the effect appears to diminish over time, and long-term studies are limited. The most consistent positive results are found among users who treat AI companions as supplements to, not replacements for, human connection.

Are AI companions safe for people with depression?

AI companions are not a treatment for depression and should never replace professional mental health care. For people with mild loneliness who don't have clinical depression, they may provide some comfort. For people with diagnosed depression, the risk of dependency and substitution effects makes unsupervised use potentially problematic. Always consult a mental health professional first.

Can talking to an AI chatbot count as real social interaction?

Not really. While your brain responds to some conversational cues from AI similarly to how it responds to human conversation, AI interaction lacks the reciprocity, genuine understanding, and mutual vulnerability that define meaningful social connection. Researchers classify it closer to parasocial interaction than true social exchange.

What's the difference between healthy and unhealthy AI companion use?

Healthy use typically involves short sessions (under 30 minutes), awareness that the AI isn't sentient, using the interaction as social practice or comfort supplement, and maintaining or increasing real-world social activity. Unhealthy patterns include multi-hour daily sessions, emotional dependency, declining real social contact, and distress when unable to access the app.

Is there an age limit for AI companion apps?

Most major AI companion apps set a minimum age in their terms of service, though enforcement varies. Researchers and child development experts broadly agree that AI companions pose greater risks for minors whose social skills and emotional regulation are still developing. Parents should monitor and limit use for anyone under 18.

Do therapists recommend AI companion apps?

Most therapists do not formally recommend AI companion apps. Some acknowledge they can have limited value as supplementary tools for social skill practice or acute loneliness relief. The American Psychological Association has not endorsed any AI companion app for therapeutic use. If a therapist is aware of your usage and helps you set boundaries, that's probably the safest approach.

Can AI companions help with social anxiety specifically?

Some research suggests AI companions can serve as useful practice environments for socially anxious individuals. The low-stakes nature of the interaction allows users to practice conversation, self-disclosure, and emotional expression without fear of real social consequences. However, this benefit only materializes when users transfer those skills to real interactions.

What happens when you get emotionally attached to an AI companion?

Emotional attachment to AI companions is common and not inherently concerning in mild forms. It becomes problematic when the attachment leads to neglecting human relationships, significant distress when the AI is unavailable, or an inability to distinguish between AI-generated responses and genuine care. If you notice these patterns, it's worth stepping back and possibly talking to a counselor.

Are some AI companion apps safer than others?

Apps that include usage reminders, transparency about AI limitations, and connections to real mental health resources are generally considered safer. Apps that encourage emotional dependency, simulate romantic partnerships without guardrails, or use engagement-maximizing design patterns without regard for user wellbeing carry higher risks. Look for apps that are honest about what they are.

Will AI companions get better at addressing loneliness in the future?

They'll almost certainly get more convincing, but that's different from getting better at solving loneliness. More realistic AI conversation could increase both the benefits (more effective short-term relief) and the risks (stronger attachment, harder to maintain healthy boundaries). The fundamental limitation, that AI cannot provide genuine reciprocal human connection, is unlikely to change regardless of how advanced the technology becomes.

Final Thoughts

I started this research expecting to land firmly on one side of the debate. Instead, I ended up exactly where the evidence points. Somewhere in the complicated middle.

AI companion apps can help with loneliness. The data supports that. They can also make loneliness worse. The data supports that too. The difference comes down to how you use them, what you expect from them, and whether you have the self-awareness to notice when comfortable simulation starts displacing uncomfortable but necessary human connection.

If you're lonely, please don't rely solely on an AI to fix that. Reach out to real people, even when it's scary. Seek professional help if you need it. Build the skills and take the risks that real connection requires. And if an AI companion helps you get through the hard nights while you're doing that work, that's okay. Just don't let the comfortable glow of the screen become a substitute for the messy, imperfect, irreplaceable warmth of another human being who actually knows you're there.

Ready to Create Your AI Influencer?

Join 115 students mastering ComfyUI and AI influencer marketing in our complete 51-lesson course.

Related Articles

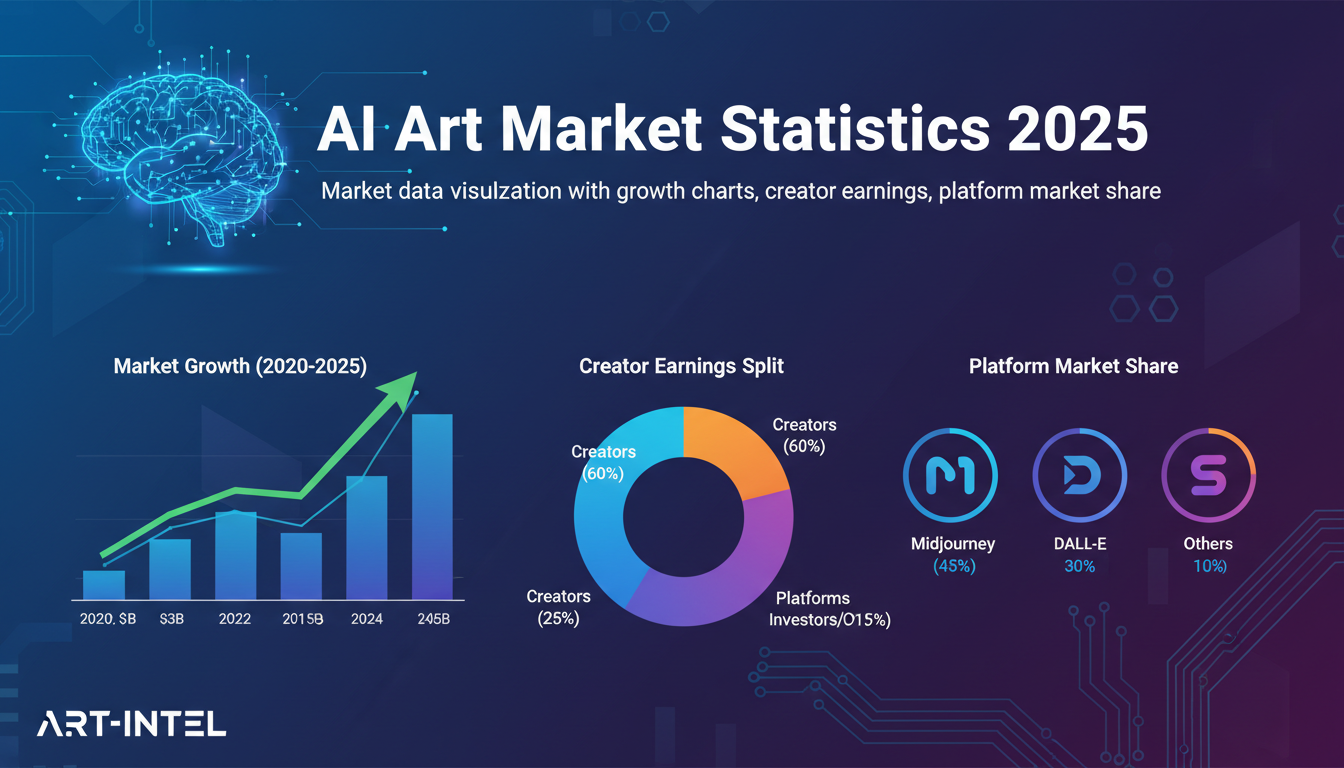

AI Art Market Statistics 2025: Industry Size, Trends, and Growth Projections

Comprehensive AI art market statistics including market size, creator earnings, platform data, and growth projections with 75+ data points.

AI Automation Tools: Transform Your Business Workflows in 2025

Discover the best AI automation tools to transform your business workflows. Learn how to automate repetitive tasks, improve efficiency, and scale operations with AI.

AI Avatar Generator: I Tested 15 Tools for Profile Pictures, Gaming, and Social Media in 2026

Comprehensive review of the best AI avatar generators in 2026. I tested 15 tools for profile pictures, 3D avatars, cartoon styles, gaming characters, and professional use cases.